- Problem 1

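Perform the Gram-Schmidt process on each of these bases for $\mathbb{R}^2$.

- $\left\langle \begin{pmatrix}1\\1\end{pmatrix}, \begin{pmatrix}2\\1\end{pmatrix} \right\rangle$

- $\left\langle \begin{pmatrix}0\\1\end{pmatrix}, \begin{pmatrix}-1\\3\end{pmatrix} \right\rangle$

- $\left\langle \begin{pmatrix}0\\1\end{pmatrix}, \begin{pmatrix}-1\\0\end{pmatrix} \right\rangle$

Then turn those orthogonal bases into orthonormal bases.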

- Answer

-

![{\displaystyle {\begin{array}{rl}{\vec {\kappa }}_{1}&={\begin{pmatrix}1\\1\end{pmatrix}}\\{\vec {\kappa }}_{2}&={\begin{pmatrix}2\\1\end{pmatrix}}-{\mbox{proj}}_{[{\vec {\kappa }}_{1}]}({\begin{pmatrix}2\\1\end{pmatrix}})={\begin{pmatrix}2\\1\end{pmatrix}}-{\frac {{\begin{pmatrix}2\\1\end{pmatrix}}\cdot {\begin{pmatrix}1\\1\end{pmatrix}}}{{\begin{pmatrix}1\\1\end{pmatrix}}\cdot {\begin{pmatrix}1\\1\end{pmatrix}}}}\cdot {\begin{pmatrix}1\\1\end{pmatrix}}={\begin{pmatrix}2\\1\end{pmatrix}}-{\frac {3}{2}}\cdot {\begin{pmatrix}1\\1\end{pmatrix}}={\begin{pmatrix}1/2\\-1/2\end{pmatrix}}\end{array}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/89d97e62ddbb1f3f7268d64c626986fc014e1ac0)

-

![{\displaystyle {\begin{array}{rl}{\vec {\kappa }}_{1}&={\begin{pmatrix}0\\1\end{pmatrix}}\\{\vec {\kappa }}_{2}&={\begin{pmatrix}-1\\3\end{pmatrix}}-{\mbox{proj}}_{[{\vec {\kappa }}_{1}]}({\begin{pmatrix}-1\\3\end{pmatrix}})={\begin{pmatrix}-1\\3\end{pmatrix}}-{\frac {{\begin{pmatrix}-1\\3\end{pmatrix}}\cdot {\begin{pmatrix}0\\1\end{pmatrix}}}{{\begin{pmatrix}0\\1\end{pmatrix}}\cdot {\begin{pmatrix}0\\1\end{pmatrix}}}}\cdot {\begin{pmatrix}0\\1\end{pmatrix}}={\begin{pmatrix}-1\\3\end{pmatrix}}-{\frac {3}{1}}\cdot {\begin{pmatrix}0\\1\end{pmatrix}}={\begin{pmatrix}-1\\0\end{pmatrix}}\end{array}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/16ebec0b5e7357f508c7dec77b4dfc3068487290)

-

![{\displaystyle {\begin{array}{rl}{\vec {\kappa }}_{1}&={\begin{pmatrix}0\\1\end{pmatrix}}\\{\vec {\kappa }}_{2}&={\begin{pmatrix}-1\\0\end{pmatrix}}-{\mbox{proj}}_{[{\vec {\kappa }}_{1}]}({\begin{pmatrix}-1\\0\end{pmatrix}})={\begin{pmatrix}-1\\0\end{pmatrix}}-{\frac {{\begin{pmatrix}-1\\0\end{pmatrix}}\cdot {\begin{pmatrix}0\\1\end{pmatrix}}}{{\begin{pmatrix}0\\1\end{pmatrix}}\cdot {\begin{pmatrix}0\\1\end{pmatrix}}}}\cdot {\begin{pmatrix}0\\1\end{pmatrix}}={\begin{pmatrix}-1\\0\end{pmatrix}}-{\frac {0}{1}}\cdot {\begin{pmatrix}0\\1\end{pmatrix}}={\begin{pmatrix}-1\\0\end{pmatrix}}\end{array}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/4b546f7f1a59998fd2dcfe1fbd07b387a58539ec)

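The corresponding orthonormal bases for the three parts of this question are these.

$$\left\langle \begin{pmatrix}\sqrt{2}/2\\ \sqrt{2}/2\end{pmatrix}, \begin{pmatrix}\sqrt{2}/2\\ -\sqrt{2}/2\end{pmatrix} \right\rangle \qquad \left\langle \begin{pmatrix}0\\1\end{pmatrix}, \begin{pmatrix}-1\\0\end{pmatrix} \right\rangle \qquad \left\langle \begin{pmatrix}0\\1\end{pmatrix}, \begin{pmatrix}-1\\0\end{pmatrix} \right\rangle$$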
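The three computations above can be checked mechanically. This is a minimal Python sketch, assuming vectors are plain lists; it uses `fractions.Fraction` so the arithmetic stays exact, and the helper names `dot`, `proj`, and `gram_schmidt` are illustrative rather than taken from the text. As in the displays, each $\vec\kappa_i$ is $\vec\beta_i$ minus its projections onto the earlier $\vec\kappa$'s.

```python
from fractions import Fraction


def dot(u, v):
    """Dot product of two vectors given as equal-length lists."""
    return sum(a * b for a, b in zip(u, v))


def proj(v, k):
    """Projection of v onto the line spanned by the nonzero vector k."""
    c = Fraction(dot(v, k)) / dot(k, k)
    return [c * x for x in k]


def gram_schmidt(basis):
    """kappa_i = beta_i minus its projections onto kappa_1, ..., kappa_{i-1}."""
    kappas = []
    for beta in basis:
        kappa = [Fraction(x) for x in beta]
        for k in kappas:
            kappa = [a - b for a, b in zip(kappa, proj(beta, k))]
        kappas.append(kappa)
    return kappas


# The three bases of Problem 1.
for basis in ([[1, 1], [2, 1]], [[0, 1], [-1, 3]], [[0, 1], [-1, 0]]):
    print([[str(x) for x in k] for k in gram_schmidt(basis)])
```

The printed vectors should match the $\vec\kappa$'s in the three displays; dividing each by its length then gives the orthonormal bases.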

- This exercise is recommended for all readers.

- Problem 2

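Perform the Gram-Schmidt process on each of these bases for $\mathbb{R}^3$.

- $\left\langle \begin{pmatrix}2\\2\\2\end{pmatrix}, \begin{pmatrix}1\\0\\-1\end{pmatrix}, \begin{pmatrix}0\\3\\1\end{pmatrix} \right\rangle$

- $\left\langle \begin{pmatrix}1\\-1\\0\end{pmatrix}, \begin{pmatrix}0\\1\\0\end{pmatrix}, \begin{pmatrix}2\\3\\1\end{pmatrix} \right\rangle$

Then turn those orthogonal bases into orthonormal bases.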

- Answer

-

![{\displaystyle {\begin{array}{rl}{\vec {\kappa }}_{1}&={\begin{pmatrix}2\\2\\2\end{pmatrix}}\\{\vec {\kappa }}_{2}&={\begin{pmatrix}1\\0\\-1\end{pmatrix}}-{\mbox{proj}}_{[{\vec {\kappa }}_{1}]}({\begin{pmatrix}1\\0\\-1\end{pmatrix}})={\begin{pmatrix}1\\0\\-1\end{pmatrix}}-{\frac {{\begin{pmatrix}1\\0\\-1\end{pmatrix}}\cdot {\begin{pmatrix}2\\2\\2\end{pmatrix}}}{{\begin{pmatrix}2\\2\\2\end{pmatrix}}\cdot {\begin{pmatrix}2\\2\\2\end{pmatrix}}}}\cdot {\begin{pmatrix}2\\2\\2\end{pmatrix}}={\begin{pmatrix}1\\0\\-1\end{pmatrix}}-{\frac {0}{12}}\cdot {\begin{pmatrix}2\\2\\2\end{pmatrix}}={\begin{pmatrix}1\\0\\-1\end{pmatrix}}\\{\vec {\kappa }}_{3}&={\begin{pmatrix}0\\3\\1\end{pmatrix}}-{\mbox{proj}}_{[{\vec {\kappa }}_{1}]}({\begin{pmatrix}0\\3\\1\end{pmatrix}})-{\mbox{proj}}_{[{\vec {\kappa }}_{2}]}({\begin{pmatrix}0\\3\\1\end{pmatrix}})={\begin{pmatrix}0\\3\\1\end{pmatrix}}-{\frac {{\begin{pmatrix}0\\3\\1\end{pmatrix}}\cdot {\begin{pmatrix}2\\2\\2\end{pmatrix}}}{{\begin{pmatrix}2\\2\\2\end{pmatrix}}\cdot {\begin{pmatrix}2\\2\\2\end{pmatrix}}}}\cdot {\begin{pmatrix}2\\2\\2\end{pmatrix}}-{\frac {{\begin{pmatrix}0\\3\\1\end{pmatrix}}\cdot {\begin{pmatrix}1\\0\\-1\end{pmatrix}}}{{\begin{pmatrix}1\\0\\-1\end{pmatrix}}\cdot {\begin{pmatrix}1\\0\\-1\end{pmatrix}}}}\cdot {\begin{pmatrix}1\\0\\-1\end{pmatrix}}\\&\quad ={\begin{pmatrix}0\\3\\1\end{pmatrix}}-{\frac {8}{12}}\cdot {\begin{pmatrix}2\\2\\2\end{pmatrix}}-{\frac {-1}{2}}\cdot {\begin{pmatrix}1\\0\\-1\end{pmatrix}}={\begin{pmatrix}-5/6\\5/3\\-5/6\end{pmatrix}}\end{array}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/533b3e46f7617f45173e26fbaddcd013a459a3d6)

-

![{\displaystyle {\begin{array}{rl}{\vec {\kappa }}_{1}&={\begin{pmatrix}1\\-1\\0\end{pmatrix}}\\{\vec {\kappa }}_{2}&={\begin{pmatrix}0\\1\\0\end{pmatrix}}-{\mbox{proj}}_{[{\vec {\kappa }}_{1}]}({\begin{pmatrix}0\\1\\0\end{pmatrix}})={\begin{pmatrix}0\\1\\0\end{pmatrix}}-{\frac {{\begin{pmatrix}0\\1\\0\end{pmatrix}}\cdot {\begin{pmatrix}1\\-1\\0\end{pmatrix}}}{{\begin{pmatrix}1\\-1\\0\end{pmatrix}}\cdot {\begin{pmatrix}1\\-1\\0\end{pmatrix}}}}\cdot {\begin{pmatrix}1\\-1\\0\end{pmatrix}}={\begin{pmatrix}0\\1\\0\end{pmatrix}}-{\frac {-1}{2}}\cdot {\begin{pmatrix}1\\-1\\0\end{pmatrix}}={\begin{pmatrix}1/2\\1/2\\0\end{pmatrix}}\\{\vec {\kappa }}_{3}&={\begin{pmatrix}2\\3\\1\end{pmatrix}}-{\mbox{proj}}_{[{\vec {\kappa }}_{1}]}({\begin{pmatrix}2\\3\\1\end{pmatrix}})-{\mbox{proj}}_{[{\vec {\kappa }}_{2}]}({\begin{pmatrix}2\\3\\1\end{pmatrix}})\\&={\begin{pmatrix}2\\3\\1\end{pmatrix}}-{\frac {{\begin{pmatrix}2\\3\\1\end{pmatrix}}\cdot {\begin{pmatrix}1\\-1\\0\end{pmatrix}}}{{\begin{pmatrix}1\\-1\\0\end{pmatrix}}\cdot {\begin{pmatrix}1\\-1\\0\end{pmatrix}}}}\cdot {\begin{pmatrix}1\\-1\\0\end{pmatrix}}-{\frac {{\begin{pmatrix}2\\3\\1\end{pmatrix}}\cdot {\begin{pmatrix}1/2\\1/2\\0\end{pmatrix}}}{{\begin{pmatrix}1/2\\1/2\\0\end{pmatrix}}\cdot {\begin{pmatrix}1/2\\1/2\\0\end{pmatrix}}}}\cdot {\begin{pmatrix}1/2\\1/2\\0\end{pmatrix}}\\&\quad ={\begin{pmatrix}2\\3\\1\end{pmatrix}}-{\frac {-1}{2}}\cdot {\begin{pmatrix}1\\-1\\0\end{pmatrix}}-{\frac {5/2}{1/2}}\cdot {\begin{pmatrix}1/2\\1/2\\0\end{pmatrix}}={\begin{pmatrix}0\\0\\1\end{pmatrix}}\end{array}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/4d80cf98218710d605311cdc628c9f0bb63b3a54)

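The corresponding orthonormal bases for the two parts of this question are these.

$$\left\langle \begin{pmatrix}1/\sqrt{3}\\1/\sqrt{3}\\1/\sqrt{3}\end{pmatrix}, \begin{pmatrix}1/\sqrt{2}\\0\\-1/\sqrt{2}\end{pmatrix}, \begin{pmatrix}-1/\sqrt{6}\\2/\sqrt{6}\\-1/\sqrt{6}\end{pmatrix} \right\rangle \qquad \left\langle \begin{pmatrix}1/\sqrt{2}\\-1/\sqrt{2}\\0\end{pmatrix}, \begin{pmatrix}1/\sqrt{2}\\1/\sqrt{2}\\0\end{pmatrix}, \begin{pmatrix}0\\0\\1\end{pmatrix} \right\rangle$$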
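The normalization step can be sketched the same way. This Python sketch, under the assumption that floating point is acceptable once square roots appear, runs exact Gram-Schmidt with `fractions.Fraction` and then divides each vector by its length; `orthonormalize` and the other names are illustrative.

```python
import math
from fractions import Fraction


def dot(u, v):
    """Dot product of two equal-length lists."""
    return sum(a * b for a, b in zip(u, v))


def gram_schmidt(basis):
    """Exact Gram-Schmidt: subtract from each beta its projections onto earlier kappas."""
    kappas = []
    for beta in basis:
        kappa = [Fraction(x) for x in beta]
        for k in kappas:
            c = Fraction(dot(beta, k)) / dot(k, k)
            kappa = [a - c * b for a, b in zip(kappa, k)]
        kappas.append(kappa)
    return kappas


def normalize(v):
    """Divide a nonzero vector by its length; the result is in floating point."""
    length = math.sqrt(float(dot(v, v)))
    return [float(x) / length for x in v]


def orthonormalize(basis):
    """Gram-Schmidt followed by normalizing each resulting vector."""
    return [normalize(k) for k in gram_schmidt(basis)]


for basis in ([[2, 2, 2], [1, 0, -1], [0, 3, 1]], [[1, -1, 0], [0, 1, 0], [2, 3, 1]]):
    print([[round(x, 4) for x in q] for q in orthonormalize(basis)])
```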

- This exercise is recommended for all readers.

- Problem 3

Find an orthonormal basis for this subspace of $\mathbb{R}^3$: the plane $x - y + z = 0$.

- Answer

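The given space can be parametrized in this way.

$$\left\{ \begin{pmatrix}x\\y\\z\end{pmatrix} \,\middle|\, x = y - z \right\} = \left\{ \begin{pmatrix}1\\1\\0\end{pmatrix} y + \begin{pmatrix}-1\\0\\1\end{pmatrix} z \,\middle|\, y, z \in \mathbb{R} \right\}$$

So we take the basis

$$\left\langle \begin{pmatrix}1\\1\\0\end{pmatrix}, \begin{pmatrix}-1\\0\\1\end{pmatrix} \right\rangle$$

apply the Gram-Schmidt process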

![{\displaystyle {\begin{array}{rl}{\vec {\kappa }}_{1}&={\begin{pmatrix}1\\1\\0\end{pmatrix}}\\{\vec {\kappa }}_{2}&={\begin{pmatrix}-1\\0\\1\end{pmatrix}}-{\mbox{proj}}_{[{\vec {\kappa }}_{1}]}({\begin{pmatrix}-1\\0\\1\end{pmatrix}})={\begin{pmatrix}-1\\0\\1\end{pmatrix}}-{\frac {{\begin{pmatrix}-1\\0\\1\end{pmatrix}}\cdot {\begin{pmatrix}1\\1\\0\end{pmatrix}}}{{\begin{pmatrix}1\\1\\0\end{pmatrix}}\cdot {\begin{pmatrix}1\\1\\0\end{pmatrix}}}}\cdot {\begin{pmatrix}1\\1\\0\end{pmatrix}}={\begin{pmatrix}-1\\0\\1\end{pmatrix}}-{\frac {-1}{2}}\cdot {\begin{pmatrix}1\\1\\0\end{pmatrix}}={\begin{pmatrix}-1/2\\1/2\\1\end{pmatrix}}\end{array}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/74aaab48f0b91844cc6ed81b2e6e7f03e456abda)

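and then normalize.

$$\left\langle \begin{pmatrix}1/\sqrt{2}\\1/\sqrt{2}\\0\end{pmatrix}, \begin{pmatrix}-1/\sqrt{6}\\1/\sqrt{6}\\2/\sqrt{6}\end{pmatrix} \right\rangle$$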
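A quick numerical check of this answer, as a sketch: `q1` and `q2` below are the normalized $\vec\kappa_1$ and $\vec\kappa_2$, and the helper names are illustrative. Each vector should satisfy $x - y + z = 0$, have length one, and be orthogonal to the other.

```python
import math

s2, s6 = math.sqrt(2), math.sqrt(6)
q1 = [1 / s2, 1 / s2, 0.0]        # kappa_1 = (1, 1, 0) scaled to unit length
q2 = [-1 / s6, 1 / s6, 2 / s6]    # kappa_2 = (-1/2, 1/2, 1) scaled to unit length


def dot(u, v):
    """Dot product of two equal-length lists."""
    return sum(a * b for a, b in zip(u, v))


def in_plane(p):
    """True when p satisfies x - y + z = 0, up to floating-point rounding."""
    x, y, z = p
    return math.isclose(x - y + z, 0.0, abs_tol=1e-12)


print("on the plane:", in_plane(q1), in_plane(q2))
print("unit length:", math.isclose(dot(q1, q1), 1.0), math.isclose(dot(q2, q2), 1.0))
print("orthogonal:", math.isclose(dot(q1, q2), 0.0, abs_tol=1e-12))
```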

- Problem 4

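Find an orthonormal basis for this subspace of $\mathbb{R}^4$.

$$\left\{ \begin{pmatrix}x\\y\\z\\w\end{pmatrix} \,\middle|\, x - y - z + w = 0 \text{ and } x + z = 0 \right\}$$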

- Answer

Reducing the linear system

![{\displaystyle {\begin{array}{*{4}{rc}r}x&-&y&-&z&+&w&=&0\\x&&&+&z&&&=&0\end{array}}\;{\xrightarrow[{}]{-\rho _{1}+\rho _{2}}}\;{\begin{array}{*{4}{rc}r}x&-&y&-&z&+&w&=&0\\&&y&+&2z&-&w&=&0\end{array}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/8ccdd18fc5d4c51274d12599d418fb0a3dc3a948)

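and parametrizing gives this description of the subspace.

$$\left\{ \begin{pmatrix}-1\\-2\\1\\0\end{pmatrix} z + \begin{pmatrix}0\\1\\0\\1\end{pmatrix} w \,\middle|\, z, w \in \mathbb{R} \right\}$$

So we take the basis,

$$\left\langle \begin{pmatrix}-1\\-2\\1\\0\end{pmatrix}, \begin{pmatrix}0\\1\\0\\1\end{pmatrix} \right\rangle$$

go through the Gram-Schmidt process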

![{\displaystyle {\begin{array}{rl}{\vec {\kappa }}_{1}&={\begin{pmatrix}-1\\-2\\1\\0\end{pmatrix}}\\{\vec {\kappa }}_{2}&={\begin{pmatrix}0\\1\\0\\1\end{pmatrix}}-{\mbox{proj}}_{[{\vec {\kappa }}_{1}]}({\begin{pmatrix}0\\1\\0\\1\end{pmatrix}})={\begin{pmatrix}0\\1\\0\\1\end{pmatrix}}-{\frac {{\begin{pmatrix}0\\1\\0\\1\end{pmatrix}}\cdot {\begin{pmatrix}-1\\-2\\1\\0\end{pmatrix}}}{{\begin{pmatrix}-1\\-2\\1\\0\end{pmatrix}}\cdot {\begin{pmatrix}-1\\-2\\1\\0\end{pmatrix}}}}\cdot {\begin{pmatrix}-1\\-2\\1\\0\end{pmatrix}}={\begin{pmatrix}0\\1\\0\\1\end{pmatrix}}-{\frac {-2}{6}}\cdot {\begin{pmatrix}-1\\-2\\1\\0\end{pmatrix}}={\begin{pmatrix}-1/3\\1/3\\1/3\\1\end{pmatrix}}\end{array}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/1c7233578479e844f787f86beba76edc9cd3c273)

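and finish by normalizing.

$$\left\langle \begin{pmatrix}-1/\sqrt{6}\\-2/\sqrt{6}\\1/\sqrt{6}\\0\end{pmatrix}, \begin{pmatrix}-\sqrt{3}/6\\ \sqrt{3}/6\\ \sqrt{3}/6\\ \sqrt{3}/2\end{pmatrix} \right\rangle$$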
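The "reduce and parametrize" step that produces the starting basis can also be sketched in code. This Python sketch swaps in full Gauss-Jordan reduction (the text stops at echelon form and back-substitutes), using exact `Fraction` arithmetic; `null_space_basis` is an illustrative name. It returns one basis vector per free variable, in the same order as the parametrization above.

```python
from fractions import Fraction


def null_space_basis(rows):
    """Basis for {x : A x = 0}, one vector per free variable, via exact Gauss-Jordan."""
    a = [[Fraction(x) for x in row] for row in rows]
    n = len(a[0])
    pivots = []          # column index of each pivot, in row order
    r = 0                # next row to receive a pivot
    for c in range(n):
        p = next((i for i in range(r, len(a)) if a[i][c] != 0), None)
        if p is None:
            continue     # no pivot in this column, so it is a free variable
        a[r], a[p] = a[p], a[r]
        a[r] = [x / a[r][c] for x in a[r]]             # scale the pivot to 1
        for i in range(len(a)):
            if i != r and a[i][c] != 0:                # clear the rest of the column
                f = a[i][c]
                a[i] = [x - f * y for x, y in zip(a[i], a[r])]
        pivots.append(c)
        r += 1
        if r == len(a):
            break
    basis = []
    for free in (c for c in range(n) if c not in pivots):
        v = [Fraction(0)] * n
        v[free] = Fraction(1)
        for row, c in zip(a, pivots):
            v[c] = -row[free]                          # solve each pivot variable
        basis.append(v)
    return basis


# Problem 4: x - y - z + w = 0 and x + z = 0, variables ordered x, y, z, w.
print(null_space_basis([[1, -1, -1, 1], [1, 0, 1, 0]]))
```

Applied to the single equation $x - y + z = 0$ it likewise gives the basis used in the previous problem.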

- Problem 5

Show that any linearly independent subset of $\mathbb{R}^n$ can be orthogonalized without changing its span.

- Answer

A linearly independent subset of $\mathbb{R}^n$ is a basis for its own span.

Apply Theorem 2.7.

Remark.

Here's why the phrase "linearly independent" is in the question.

Dropping the phrase would require us to worry about two things.

The first thing to worry about is that when we do the Gram-Schmidt process on a linearly dependent set then we get some zero vectors.

For instance, with

$$\left\langle \begin{pmatrix}1\\2\end{pmatrix}, \begin{pmatrix}3\\6\end{pmatrix} \right\rangle$$

we would get this.

![{\displaystyle {\vec {\kappa }}_{1}={\begin{pmatrix}1\\2\end{pmatrix}}\qquad {\vec {\kappa }}_{2}={\begin{pmatrix}3\\6\end{pmatrix}}-{\mbox{proj}}_{[{\vec {\kappa }}_{1}]}({\begin{pmatrix}3\\6\end{pmatrix}})={\begin{pmatrix}0\\0\end{pmatrix}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/2417ad170c7e1941894f60f4f6d44ad4f5a9de4b)

This first thing is not so bad because the zero vector is by definition orthogonal to every other vector, so we could accept this situation as yielding an orthogonal set (although it of course can't be normalized), or we could just modify the Gram-Schmidt procedure to throw out any zero vectors. The second thing to worry about if we drop the phrase "linearly independent" from the question is that the set might be infinite. Of course, any subspace of the finite-dimensional $\mathbb{R}^n$ must also be finite-dimensional, so any linearly independent set of its members is finite, but nonetheless, a "process" that examines the vectors in an infinite set one at a time would at least require some more elaboration in this question. A linearly independent subset of $\mathbb{R}^n$ is automatically finite (in fact, of size $n$ or less), so the "linearly independent" phrase obviates these concerns.
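The modification mentioned in the Remark, throwing out zero vectors, can be sketched like this. It is a hypothetical variant written for illustration, not a procedure from the text: any vector that reduces to zero already lies in the span of the vectors kept so far, so discarding it leaves the span unchanged, and it also guarantees that later projections never divide by zero.

```python
from fractions import Fraction


def dot(u, v):
    """Dot product of two equal-length lists."""
    return sum(a * b for a, b in zip(u, v))


def gram_schmidt_dropping_zeros(vectors):
    """Gram-Schmidt that skips any vector reducing to zero (it is already in the span)."""
    kappas = []
    for beta in vectors:
        kappa = [Fraction(x) for x in beta]
        for k in kappas:
            c = Fraction(dot(beta, k)) / dot(k, k)   # k is never zero, so this is safe
            kappa = [a - c * b for a, b in zip(kappa, k)]
        if any(x != 0 for x in kappa):              # keep only nonzero results
            kappas.append(kappa)
    return kappas


# (3, 6) is a multiple of (1, 2), so it reduces to the zero vector and is dropped.
print(gram_schmidt_dropping_zeros([[1, 2], [3, 6]]))
```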

- This exercise is recommended for all readers.

- Problem 6

What happens if we apply the Gram-Schmidt process to a basis that is already orthogonal?

- Answer

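The process leaves the basis unchanged. Inductively, each $\vec\kappa_j$ computed so far equals $\vec\beta_j$, so every projection $\mbox{proj}_{[\vec\kappa_j]}(\vec\beta_i)$ with $j < i$ is the zero vector, and therefore $\vec\kappa_i = \vec\beta_i$.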
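A small check of this answer, as a sketch: on an already orthogonal basis every projection coefficient is zero, so the output equals the input. The basis below is an illustrative choice, not one from the text, and the helper names are hypothetical.

```python
from fractions import Fraction


def dot(u, v):
    """Dot product of two equal-length lists."""
    return sum(a * b for a, b in zip(u, v))


def gram_schmidt(basis):
    """Subtract from each beta its projections onto the earlier kappas."""
    kappas = []
    for beta in basis:
        kappa = [Fraction(x) for x in beta]
        for k in kappas:
            c = Fraction(dot(beta, k)) / dot(k, k)   # zero when beta is orthogonal to k
            kappa = [a - c * b for a, b in zip(kappa, k)]
        kappas.append(kappa)
    return kappas


orthogonal = [[1, 1, 0], [1, -1, 0], [0, 0, 5]]   # an illustrative orthogonal basis of R^3
print(gram_schmidt(orthogonal) == orthogonal)
```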

- Problem 7

Let $\{\vec\kappa_1, \dots, \vec\kappa_k\}$ be a set of mutually orthogonal vectors in $\mathbb{R}^n$.

- Prove that for any $\vec v$ in the space, the vector $\vec v - \left(\mbox{proj}_{[\vec\kappa_1]}(\vec v) + \dots + \mbox{proj}_{[\vec\kappa_k]}(\vec v)\right)$ is orthogonal to each of $\vec\kappa_1$, ..., $\vec\kappa_k$.

- Illustrate the prior item in $\mathbb{R}^3$ by using $\vec e_1$ as $\vec\kappa_1$, using $\vec e_2$ as $\vec\kappa_2$, and taking $\vec v$ to have components $1$, $2$, and  .

- Show that $\mbox{proj}_{[\vec\kappa_1]}(\vec v) + \dots + \mbox{proj}_{[\vec\kappa_k]}(\vec v)$ is the vector in the span of the set of $\vec\kappa$'s that is closest to $\vec v$.

Hint. To the illustration done for the prior part, add a vector $d_1\vec\kappa_1 + d_2\vec\kappa_2$ and apply the Pythagorean Theorem to the resulting triangle.

- Answer

- The argument is as in the  case of the proof of Theorem 2.7.

The dot product

$$\vec\kappa_i \cdot \left(\vec v - \mbox{proj}_{[\vec\kappa_1]}(\vec v) - \dots - \mbox{proj}_{[\vec\kappa_k]}(\vec v)\right)$$

can be written as the sum of terms of the form $-\vec\kappa_i \cdot \mbox{proj}_{[\vec\kappa_j]}(\vec v)$ with $j \neq i$, and the term $\vec\kappa_i \cdot \left(\vec v - \mbox{proj}_{[\vec\kappa_i]}(\vec v)\right)$.

The first kind of term equals zero because the $\vec\kappa$'s are mutually orthogonal.

The other term is zero because this projection is orthogonal (that is, the projection definition makes it zero:

$$\vec\kappa_i \cdot \left(\vec v - \mbox{proj}_{[\vec\kappa_i]}(\vec v)\right) = \vec\kappa_i \cdot \vec v - \vec\kappa_i \cdot \left(\frac{\vec v \cdot \vec\kappa_i}{\vec\kappa_i \cdot \vec\kappa_i}\right) \vec\kappa_i = \vec\kappa_i \cdot \vec v - \vec v \cdot \vec\kappa_i = 0).$$

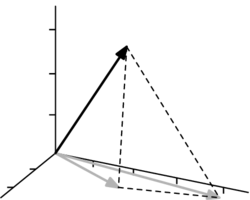

- The vector $\vec v$ is shown in black and the vector $\mbox{proj}_{[\vec\kappa_1]}(\vec v) + \mbox{proj}_{[\vec\kappa_2]}(\vec v) = 1\cdot\vec e_1 + 2\cdot\vec e_2$ is in gray.

The vector $\vec v - \left(\mbox{proj}_{[\vec\kappa_1]}(\vec v) + \mbox{proj}_{[\vec\kappa_2]}(\vec v)\right)$ lies on the dotted line connecting the black vector to the gray one, that is, it is orthogonal to the $xy$-plane.

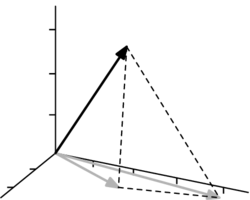

- This diagram is gotten by following the hint.

The dashed triangle has a right angle where the gray vector $1\cdot\vec e_1 + 2\cdot\vec e_2$ meets the vertical dashed line $\vec v - (1\cdot\vec e_1 + 2\cdot\vec e_2)$; this is what was proved in the first item of this question.

The Pythagorean theorem then gives that the hypotenuse (the segment from $\vec v$ to any other vector in the plane) is longer than the vertical dashed line.

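More formally, write $\mbox{proj}_{[\vec\kappa_1]}(\vec v) + \dots + \mbox{proj}_{[\vec\kappa_k]}(\vec v)$ as $c_1\vec\kappa_1 + \dots + c_k\vec\kappa_k$, and consider any other vector in the span, $d_1\vec\kappa_1 + \dots + d_k\vec\kappa_k$.

Note that

$$\vec v - (d_1\vec\kappa_1 + \dots + d_k\vec\kappa_k) = \left(\vec v - (c_1\vec\kappa_1 + \dots + c_k\vec\kappa_k)\right) + \left((c_1 - d_1)\vec\kappa_1 + \dots + (c_k - d_k)\vec\kappa_k\right)$$

and that

$$\left(\vec v - (c_1\vec\kappa_1 + \dots + c_k\vec\kappa_k)\right) \cdot \left((c_1 - d_1)\vec\kappa_1 + \dots + (c_k - d_k)\vec\kappa_k\right) = 0$$

(because the first item shows that $\vec v - (c_1\vec\kappa_1 + \dots + c_k\vec\kappa_k)$ is orthogonal to each $\vec\kappa_i$, and so it is orthogonal to this linear combination of the $\vec\kappa$'s).

Now apply the Pythagorean Theorem: the square of the length of the left side is $\|\vec v - (c_1\vec\kappa_1 + \dots + c_k\vec\kappa_k)\|^2 + \|(c_1 - d_1)\vec\kappa_1 + \dots + (c_k - d_k)\vec\kappa_k\|^2$, which is at least $\|\vec v - (c_1\vec\kappa_1 + \dots + c_k\vec\kappa_k)\|^2$.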
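The three items can be spot-checked with exact arithmetic. This Python sketch uses illustrative data that is not from the exercise: two orthogonal vectors in $\mathbb{R}^3$ and an arbitrary $\vec v$. It confirms that the residual is orthogonal to each $\vec\kappa$, and that for every $\vec d$ on a small grid of combinations $d_1\vec\kappa_1 + d_2\vec\kappa_2$ the Pythagorean identity $\|\vec v - \vec d\|^2 = \|\vec v - \vec p\|^2 + \|\vec p - \vec d\|^2$ holds, where $\vec p$ is the sum of the projections. The helper names are hypothetical.

```python
from fractions import Fraction
from itertools import product


def dot(u, v):
    """Dot product of two equal-length lists."""
    return sum(a * b for a, b in zip(u, v))


def combo(coeffs, vectors):
    """The linear combination sum(coeffs[i] * vectors[i])."""
    return [sum(c * vec[j] for c, vec in zip(coeffs, vectors)) for j in range(len(vectors[0]))]


def sub(u, v):
    """Componentwise difference u - v."""
    return [a - b for a, b in zip(u, v)]


# Illustrative data: two orthogonal vectors in R^3 and a vector v.
kappas = [[1, 1, 0], [1, -1, 1]]
v = [3, 1, 2]

cs = [Fraction(dot(v, k)) / dot(k, k) for k in kappas]   # projection coefficients
p = combo(cs, kappas)                                     # sum of the projections
r = sub(v, p)                                             # the residual v - p
print([dot(r, k) for k in kappas])                        # dot products of r with each kappa

# Pythagoras: |v - d|^2 = |v - p|^2 + |p - d|^2 for every d in the span.
for d1, d2 in product(range(-3, 4), repeat=2):
    d = combo([d1, d2], kappas)
    assert dot(sub(v, d), sub(v, d)) == dot(r, r) + dot(sub(p, d), sub(p, d))
```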

- This exercise is recommended for all readers.

- Problem 9

One advantage of orthogonal bases is that they simplify finding the representation of a vector with respect to that basis.

- For this vector and this non-orthogonal basis for $\mathbb{R}^2$

$$\vec v = \begin{pmatrix}2\\3\end{pmatrix} \qquad B = \left\langle \begin{pmatrix}1\\1\end{pmatrix}, \begin{pmatrix}1\\0\end{pmatrix} \right\rangle$$

first represent the vector with respect to the basis. Then project the vector onto the span of each basis vector, $[\vec\beta_1]$ and $[\vec\beta_2]$.

- With this orthogonal basis for $\mathbb{R}^2$

$$K = \left\langle \begin{pmatrix}1\\1\end{pmatrix}, \begin{pmatrix}1\\-1\end{pmatrix} \right\rangle$$

represent the same vector $\vec v$ with respect to the basis. Then project the vector onto the span of each basis vector. Note that the coefficients in the representation and the projection are the same.

- Let $K = \langle \vec\kappa_1, \dots, \vec\kappa_k \rangle$ be an orthogonal basis for some subspace of $\mathbb{R}^n$. Prove that for any $\vec v$ in the subspace, the $i$-th component of the representation of $\vec v$ with respect to $K$ is the scalar coefficient $(\vec v \cdot \vec\kappa_i)/(\vec\kappa_i \cdot \vec\kappa_i)$ from $\mbox{proj}_{[\vec\kappa_i]}(\vec v)$.

- Prove that $\vec v = \mbox{proj}_{[\vec\kappa_1]}(\vec v) + \dots + \mbox{proj}_{[\vec\kappa_k]}(\vec v)$.

- Answer

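- The representation can be done by eye.

$$\begin{pmatrix}2\\3\end{pmatrix} = 3 \cdot \begin{pmatrix}1\\1\end{pmatrix} - 1 \cdot \begin{pmatrix}1\\0\end{pmatrix}$$

The two projections are also easy.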

![{\displaystyle {\mbox{proj}}_{[{\vec {\beta }}_{1}]}({\begin{pmatrix}2\\3\end{pmatrix}})={\frac {{\begin{pmatrix}2\\3\end{pmatrix}}\cdot {\begin{pmatrix}1\\1\end{pmatrix}}}{{\begin{pmatrix}1\\1\end{pmatrix}}\cdot {\begin{pmatrix}1\\1\end{pmatrix}}}}\cdot {\begin{pmatrix}1\\1\end{pmatrix}}={\frac {5}{2}}\cdot {\begin{pmatrix}1\\1\end{pmatrix}}\qquad {\mbox{proj}}_{[{\vec {\beta }}_{2}]}({\begin{pmatrix}2\\3\end{pmatrix}})={\frac {{\begin{pmatrix}2\\3\end{pmatrix}}\cdot {\begin{pmatrix}1\\0\end{pmatrix}}}{{\begin{pmatrix}1\\0\end{pmatrix}}\cdot {\begin{pmatrix}1\\0\end{pmatrix}}}}\cdot {\begin{pmatrix}1\\0\end{pmatrix}}={\frac {2}{1}}\cdot {\begin{pmatrix}1\\0\end{pmatrix}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/4aeaf75a1f57cdf79f56ac25932ada69565ecec2)

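- As above, the representation can be done by eye

$$\begin{pmatrix}2\\3\end{pmatrix} = (5/2) \cdot \begin{pmatrix}1\\1\end{pmatrix} + (-1/2) \cdot \begin{pmatrix}1\\-1\end{pmatrix}$$

and the two projections are easy.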

![{\displaystyle {\mbox{proj}}_{[{\vec {\beta }}_{1}]}({\begin{pmatrix}2\\3\end{pmatrix}})={\frac {{\begin{pmatrix}2\\3\end{pmatrix}}\cdot {\begin{pmatrix}1\\1\end{pmatrix}}}{{\begin{pmatrix}1\\1\end{pmatrix}}\cdot {\begin{pmatrix}1\\1\end{pmatrix}}}}\cdot {\begin{pmatrix}1\\1\end{pmatrix}}={\frac {5}{2}}\cdot {\begin{pmatrix}1\\1\end{pmatrix}}\qquad {\mbox{proj}}_{[{\vec {\beta }}_{2}]}({\begin{pmatrix}2\\3\end{pmatrix}})={\frac {{\begin{pmatrix}2\\3\end{pmatrix}}\cdot {\begin{pmatrix}1\\-1\end{pmatrix}}}{{\begin{pmatrix}1\\-1\end{pmatrix}}\cdot {\begin{pmatrix}1\\-1\end{pmatrix}}}}\cdot {\begin{pmatrix}1\\-1\end{pmatrix}}={\frac {-1}{2}}\cdot {\begin{pmatrix}1\\-1\end{pmatrix}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/2b19de0f4c5c12cf5d4263a9dc7623a5adb49463)

Note the recurrence of the $5/2$ and the $-1/2$.

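- Represent $\vec v$ with respect to the basis, writing its components as $r_1, \dots, r_k$, so that

$$\vec v = r_1 \vec\kappa_1 + \dots + r_k \vec\kappa_k.$$

To determine $r_i$, take the dot product of both sides with $\vec\kappa_i$.

$$\vec v \cdot \vec\kappa_i = (r_1 \vec\kappa_1 + \dots + r_k \vec\kappa_k) \cdot \vec\kappa_i = r_1 \cdot 0 + \dots + r_i \cdot (\vec\kappa_i \cdot \vec\kappa_i) + \dots + r_k \cdot 0$$

Solving for $r_i$ yields the desired coefficient, $r_i = (\vec v \cdot \vec\kappa_i)/(\vec\kappa_i \cdot \vec\kappa_i)$ (the denominator is nonzero because $\vec\kappa_i$ is a member of a basis).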

- This is a restatement of the prior item.
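The contrast between the first two parts can be reproduced in a few lines. This Python sketch uses the vector and the two bases from the question; the function names are illustrative. For the orthogonal basis the projection coefficients rebuild $\vec v$, while for the non-orthogonal basis they do not.

```python
from fractions import Fraction


def dot(u, v):
    """Dot product of two equal-length lists."""
    return sum(a * b for a, b in zip(u, v))


def projection_coefficients(v, basis):
    """The scalars (v . b) / (b . b) from proj_[b](v), one per basis vector."""
    return [Fraction(dot(v, b)) / dot(b, b) for b in basis]


def combine(coeffs, basis):
    """Rebuild sum(c_i * b_i)."""
    return [sum(c * b[j] for c, b in zip(coeffs, basis)) for j in range(len(basis[0]))]


v = [2, 3]
K = [[1, 1], [1, -1]]      # orthogonal basis from the second part
B = [[1, 1], [1, 0]]       # non-orthogonal basis from the first part

cK = projection_coefficients(v, K)
print(cK, combine(cK, K) == v)      # coefficients 5/2 and -1/2 rebuild v
cB = projection_coefficients(v, B)
print(cB, combine(cB, B) == v)      # coefficients 5/2 and 2 do not
```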

- Problem 10

Bessel's Inequality.

Consider these orthonormal sets

along with the vector $\vec v$ whose components are , , , and .

- Find the coefficient $c_1$ for the projection of $\vec v$ onto the span of the vector in the first set. Check that $\|\vec v\|^2 \geq |c_1|^2$.

- Find the coefficients $c_1$ and $c_2$ for the projection of $\vec v$ onto the spans of the two vectors in the second set. Check that $\|\vec v\|^2 \geq |c_1|^2 + |c_2|^2$.

- Find $c_1$, $c_2$, and $c_3$ associated with the vectors in the third set, and $c_1$, $c_2$, $c_3$, and $c_4$ for the vectors in the fourth set. Check that $\|\vec v\|^2 \geq |c_1|^2 + |c_2|^2 + |c_3|^2$ and that $\|\vec v\|^2 \geq |c_1|^2 + |c_2|^2 + |c_3|^2 + |c_4|^2$.

Show that this holds in general: where $\{\vec\kappa_1, \dots, \vec\kappa_k\}$ is an orthonormal set and $c_i$ is the coefficient of the projection of a vector $\vec v$ from the space onto $[\vec\kappa_i]$, then $\|\vec v\|^2 \geq |c_1|^2 + \dots + |c_k|^2$.

Hint. One way is to look at the inequality $0 \leq \|\vec v - (c_1\vec\kappa_1 + \dots + c_k\vec\kappa_k)\|^2$ and expand the $c$'s.

- Answer

First,  .

.

-

-

,

,

-

,

,  ,

,  ,

,

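For the proof, we will do only the $k = 2$ case because the completely general case is messier but no more enlightening.

We follow the hint, writing $c_i = (\vec v \cdot \vec\kappa_i)/(\vec\kappa_i \cdot \vec\kappa_i)$ for the projection coefficients (recall that for any vector $\vec w$ we have $\|\vec w\|^2 = \vec w \cdot \vec w$).

$$\begin{aligned}
0 &\leq \left(\vec v - (c_1\vec\kappa_1 + c_2\vec\kappa_2)\right) \cdot \left(\vec v - (c_1\vec\kappa_1 + c_2\vec\kappa_2)\right) \\
&= \vec v \cdot \vec v - 2\,\vec v \cdot (c_1\vec\kappa_1 + c_2\vec\kappa_2) + (c_1\vec\kappa_1 + c_2\vec\kappa_2) \cdot (c_1\vec\kappa_1 + c_2\vec\kappa_2) \\
&= \vec v \cdot \vec v - 2\,(c_1\,\vec v \cdot \vec\kappa_1 + c_2\,\vec v \cdot \vec\kappa_2) + \left(c_1^2\,(\vec\kappa_1 \cdot \vec\kappa_1) + c_1 c_2\,(\vec\kappa_1 \cdot \vec\kappa_2) + c_2 c_1\,(\vec\kappa_2 \cdot \vec\kappa_1) + c_2^2\,(\vec\kappa_2 \cdot \vec\kappa_2)\right)
\end{aligned}$$

(The two mixed terms in the third part of the third line are zero because $\vec\kappa_1$ and $\vec\kappa_2$ are orthogonal.) The result now follows on gathering like terms and on recognizing that $\vec\kappa_1 \cdot \vec\kappa_1 = 1$ and $\vec\kappa_2 \cdot \vec\kappa_2 = 1$ because these vectors are given as members of an orthonormal set. Then $c_i = \vec v \cdot \vec\kappa_i$, so the last line is $\|\vec v\|^2 - 2(c_1^2 + c_2^2) + (c_1^2 + c_2^2) = \|\vec v\|^2 - c_1^2 - c_2^2$, giving $\|\vec v\|^2 \geq c_1^2 + c_2^2$.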
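Bessel's inequality can be spot-checked numerically. This Python sketch uses an illustrative orthonormal set in $\mathbb{R}^4$ and an illustrative vector, neither of which is the exercise's data. The running sum of squared coefficients never exceeds $\|\vec v\|^2$; because the four vectors here happen to form a full orthonormal basis, the last sum equals $\|\vec v\|^2$ (Parseval's identity), up to rounding.

```python
import math


def dot(u, v):
    """Dot product of two equal-length lists."""
    return sum(a * b for a, b in zip(u, v))


s = 1 / math.sqrt(2)
# Illustrative orthonormal vectors in R^4 and an illustrative v (not the problem's data).
ortho = [[s, s, 0, 0], [0, 0, s, s], [s, -s, 0, 0], [0, 0, s, -s]]
v = [1, 2, 3, 4]

norm_sq = dot(v, v)
partial = 0.0
for k, q in enumerate(ortho, start=1):
    c = dot(v, q)            # projection coefficient; q . q = 1, so no division is needed
    partial += c * c
    print(k, round(partial, 10), "<=", norm_sq)
    assert partial <= norm_sq + 1e-9   # Bessel's inequality, with a rounding allowance
```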

- Problem 11

Prove or disprove: every vector in $\mathbb{R}^n$ is in some orthogonal basis.

- Answer

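It is true, except for the zero vector (no basis contains the zero vector, since any set containing it is linearly dependent). Every vector in $\mathbb{R}^n$ except the zero vector is in a basis, and that basis can be orthogonalized; listing the given vector first ensures that the Gram-Schmidt process keeps it, since $\vec\kappa_1 = \vec\beta_1$.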
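One way to carry out this argument concretely, sketched in Python: put the nonzero vector first, follow it with the standard basis vectors, run Gram-Schmidt, and drop whatever reduces to zero. The function name and the sample vector are illustrative assumptions, not from the text.

```python
from fractions import Fraction


def dot(u, v):
    """Dot product of two equal-length lists."""
    return sum(a * b for a, b in zip(u, v))


def orthogonal_basis_containing(w):
    """Orthogonal basis of R^n whose first vector is w; w must be nonzero.

    Runs Gram-Schmidt on w, e_1, ..., e_n and drops results that reduce to zero.
    """
    n = len(w)
    candidates = [list(w)] + [[1 if i == j else 0 for i in range(n)] for j in range(n)]
    kappas = []
    for beta in candidates:
        kappa = [Fraction(x) for x in beta]
        for k in kappas:
            c = Fraction(dot(beta, k)) / dot(k, k)
            kappa = [a - c * b for a, b in zip(kappa, k)]
        if any(x != 0 for x in kappa):
            kappas.append(kappa)
    return kappas


basis = orthogonal_basis_containing([1, 2, 2])
print(basis)
```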

- Problem 12

Show that the columns of an $n \times n$ matrix form an orthonormal set if and only if the inverse of the matrix is its transpose.

Produce such a matrix.

- Answer

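The $3 \times 3$ case gives the idea.

The set

$$\left\{ \begin{pmatrix}a\\d\\g\end{pmatrix}, \begin{pmatrix}b\\e\\h\end{pmatrix}, \begin{pmatrix}c\\f\\i\end{pmatrix} \right\}$$

is orthonormal if and only if these nine conditions all hold

$$\begin{array}{ccc}
a^2 + d^2 + g^2 = 1 & ab + de + gh = 0 & ac + df + gi = 0 \\
ba + ed + hg = 0 & b^2 + e^2 + h^2 = 1 & bc + ef + hi = 0 \\
ca + fd + ig = 0 & cb + fe + ih = 0 & c^2 + f^2 + i^2 = 1
\end{array}$$

(the three conditions in the lower left are redundant but nonetheless correct).

Those, in turn, hold if and only if

$$\begin{pmatrix}a&d&g\\b&e&h\\c&f&i\end{pmatrix} \begin{pmatrix}a&b&c\\d&e&f\\g&h&i\end{pmatrix} = \begin{pmatrix}1&0&0\\0&1&0\\0&0&1\end{pmatrix}$$

as required (for a square matrix, a one-sided inverse is the two-sided inverse).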

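This is an example; the inverse of this matrix is its transpose.

$$\begin{pmatrix}1/\sqrt{2} & 1/\sqrt{2} & 0\\ -1/\sqrt{2} & 1/\sqrt{2} & 0\\ 0 & 0 & 1\end{pmatrix}$$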
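The criterion in this answer can be tested directly: a square matrix has orthonormal columns exactly when $M^{\mathsf T}M = I$. This Python sketch uses an illustrative matrix with rational entries (not the example above) so that the check is exact; the helper names are hypothetical.

```python
from fractions import Fraction


def transpose(m):
    """Rows become columns."""
    return [list(col) for col in zip(*m)]


def matmul(a, b):
    """Matrix product of lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def identity(n):
    return [[1 if i == j else 0 for j in range(n)] for i in range(n)]


def has_orthonormal_columns(m):
    """Entry (i, j) of M^T M is col_i . col_j, so this tests orthonormality of the columns."""
    return matmul(transpose(m), m) == identity(len(m[0]))


third = Fraction(1, 3)
# Illustrative matrix (not from the text) with rational entries, so the check is exact.
m = [[third * x for x in row] for row in [[1, 2, 2], [-2, -1, 2], [2, -2, 1]]]
print(has_orthonormal_columns(m))                  # M^T M = I
print(matmul(m, transpose(m)) == identity(3))      # so M^T is also a right inverse
```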

- Problem 13

Does the proof of Theorem 2.2 fail to consider the possibility that the set of vectors is empty (i.e., that $k = 0$)?

- Answer

If the set is empty then the summation on the left side is the linear combination of the empty set of vectors, which by definition adds to the zero vector. In the second sentence, there is no such $i$, so the "if ... then ..." implication is vacuously true.

- Problem 14

Theorem 2.7 describes a change of basis from any basis $\langle \vec\beta_1, \dots, \vec\beta_k \rangle$ to one that is orthogonal, $\langle \vec\kappa_1, \dots, \vec\kappa_k \rangle$.

Consider the change of basis matrices between these two bases.

- Prove that the matrix changing bases in the direction opposite to that of the theorem (from the orthogonal basis back to the original one) has an upper triangular shape: all of its entries below the main diagonal are zeros.

- Prove that the inverse of an upper triangular matrix is also upper triangular (if the matrix is invertible, that is). This shows that the matrix changing bases in the direction described in the theorem (from the original basis to the orthogonal one) is upper triangular.

- Answer

- Part of the induction argument proving Theorem 2.7 checks that $\vec\kappa_i$ is in the span of $\langle \vec\beta_1, \dots, \vec\beta_i \rangle$. (The  case in the proof illustrates.) Thus, in the change of basis matrix from the $\vec\kappa$'s to the $\vec\beta$'s, the $i$-th column, which represents $\vec\kappa_i$ with respect to $\langle \vec\beta_1, \dots, \vec\beta_k \rangle$, has components $i+1$ through $k$ that are zero.

- One way to see this is to recall the computational procedure that we use to find the inverse. We write the matrix, write the identity matrix next to it, and then we do Gauss-Jordan reduction. If the matrix starts out upper triangular then the Gauss-Jordan reduction involves only the Jordan half (scaling rows and adding multiples of a row to the rows above it) and these steps, when performed on the identity, will result in an upper triangular inverse matrix.
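The "Jordan half only" argument can be sketched in Python with exact `Fraction` arithmetic. This is an illustrative implementation under the assumption that the diagonal is nonzero (the invertible case); the function names and the sample matrix are hypothetical. Each operation scales a row or adds a multiple of a lower row to a higher one, so the identity on the right stays upper triangular throughout.

```python
from fractions import Fraction


def invert_upper_triangular(u):
    """Invert an upper triangular matrix with nonzero diagonal using only the Jordan half.

    Rows are scaled to get leading 1s, then entries above each pivot are cleared,
    working from the last column back; the same operations turn I into the inverse.
    """
    n = len(u)
    a = [[Fraction(x) for x in row] for row in u]
    inv = [[Fraction(int(i == j)) for j in range(n)] for i in range(n)]
    for i in range(n):                      # scale row i so its diagonal entry is 1
        d = a[i][i]
        a[i] = [x / d for x in a[i]]
        inv[i] = [x / d for x in inv[i]]
    for c in range(n - 1, -1, -1):          # clear column c above the diagonal
        for r in range(c):
            f = a[r][c]
            a[r] = [x - f * y for x, y in zip(a[r], a[c])]
            inv[r] = [x - f * y for x, y in zip(inv[r], inv[c])]
    return inv


def is_upper_triangular(m):
    """True when every entry below the main diagonal is zero."""
    return all(m[i][j] == 0 for i in range(len(m)) for j in range(i))


u = [[2, 1, 3], [0, 1, -1], [0, 0, 4]]
inv = invert_upper_triangular(u)
print(is_upper_triangular(inv))
```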

- Problem 15

Complete the induction argument in the proof of Theorem 2.7.

- Answer

For the inductive step, we assume that for all $j$ in $[1..i]$, these three conditions are true of each $\vec\kappa_j$: (i) each $\vec\kappa_j$ is nonzero, (ii) each $\vec\kappa_j$ is a linear combination of the vectors $\vec\beta_1, \dots, \vec\beta_j$, and (iii) each $\vec\kappa_j$ is orthogonal to all of the $\vec\kappa_m$'s prior to it (that is, with $m < j$).

With those inductive hypotheses, consider $\vec\kappa_{i+1}$.

![{\displaystyle {\begin{array}{rl}{\vec {\kappa }}_{i+1}&={\vec {\beta }}_{i+1}-{\mbox{proj}}_{[{\vec {\kappa }}_{1}]}({\beta _{i+1}})-{\mbox{proj}}_{[{\vec {\kappa }}_{2}]}({\beta _{i+1}})-\dots -{\mbox{proj}}_{[{\vec {\kappa }}_{i}]}({\beta _{i+1}})\\&={\vec {\beta }}_{i+1}-{\frac {\beta _{i+1}\cdot {\vec {\kappa }}_{1}}{{\vec {\kappa }}_{1}\cdot {\vec {\kappa }}_{1}}}\cdot {\vec {\kappa }}_{1}-{\frac {\beta _{i+1}\cdot {\vec {\kappa }}_{2}}{{\vec {\kappa }}_{2}\cdot {\vec {\kappa }}_{2}}}\cdot {\vec {\kappa }}_{2}-\dots -{\frac {\beta _{i+1}\cdot {\vec {\kappa }}_{i}}{{\vec {\kappa }}_{i}\cdot {\vec {\kappa }}_{i}}}\cdot {\vec {\kappa }}_{i}\end{array}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/96e8fb789bf5f09ba258b06a8db392519ee7f9e4)

By the inductive assumption (ii) we can expand each $\vec\kappa_j$ into a linear combination of $\vec\beta_1, \dots, \vec\beta_j$.

$$\vec\kappa_{i+1} = \vec\beta_{i+1} - \frac{\vec\beta_{i+1} \cdot \vec\kappa_1}{\vec\kappa_1 \cdot \vec\kappa_1} \cdot \left(\text{linear combination of } \vec\beta_1\right) - \dots - \frac{\vec\beta_{i+1} \cdot \vec\kappa_i}{\vec\kappa_i \cdot \vec\kappa_i} \cdot \left(\text{linear combination of } \vec\beta_1, \dots, \vec\beta_i\right)$$

The fractions are scalars so this is a linear combination of linear combinations of $\vec\beta_1, \dots, \vec\beta_{i+1}$. It is therefore just a linear combination of $\vec\beta_1, \dots, \vec\beta_{i+1}$. Now, (i) it cannot sum to the zero vector because the equation would then describe a nontrivial linear relationship among the $\vec\beta$'s that are given as members of a basis (the relationship is nontrivial because the coefficient of $\vec\beta_{i+1}$ is $1$). Also, (ii) the equation gives $\vec\kappa_{i+1}$ as a combination of $\vec\beta_1, \dots, \vec\beta_{i+1}$. Finally, for (iii), consider $\vec\kappa_j \cdot \vec\kappa_{i+1}$ with $j \leq i$; as in the  case, the dot product of $\vec\kappa_j$ with $\vec\kappa_{i+1} = \vec\beta_{i+1} - \mbox{proj}_{[\vec\kappa_1]}(\vec\beta_{i+1}) - \dots - \mbox{proj}_{[\vec\kappa_i]}(\vec\beta_{i+1})$ can be rewritten to give two kinds of terms, $\vec\kappa_j \cdot \left(\vec\beta_{i+1} - \mbox{proj}_{[\vec\kappa_j]}(\vec\beta_{i+1})\right)$ (which is zero because the projection is orthogonal) and $\vec\kappa_j \cdot \mbox{proj}_{[\vec\kappa_m]}(\vec\beta_{i+1})$ with $m \neq j$ and $m \leq i$ (which is zero because by the hypothesis (iii) the vectors $\vec\kappa_j$ and $\vec\kappa_m$ are orthogonal).

![{\displaystyle {\begin{array}{rl}{\vec {\kappa }}_{1}&={\begin{pmatrix}1\\1\end{pmatrix}}\\{\vec {\kappa }}_{2}&={\begin{pmatrix}2\\1\end{pmatrix}}-{\mbox{proj}}_{[{\vec {\kappa }}_{1}]}({\begin{pmatrix}2\\1\end{pmatrix}})={\begin{pmatrix}2\\1\end{pmatrix}}-{\frac {{\begin{pmatrix}2\\1\end{pmatrix}}\cdot {\begin{pmatrix}1\\1\end{pmatrix}}}{{\begin{pmatrix}1\\1\end{pmatrix}}\cdot {\begin{pmatrix}1\\1\end{pmatrix}}}}\cdot {\begin{pmatrix}1\\1\end{pmatrix}}={\begin{pmatrix}2\\1\end{pmatrix}}-{\frac {3}{2}}\cdot {\begin{pmatrix}1\\1\end{pmatrix}}={\begin{pmatrix}1/2\\-1/2\end{pmatrix}}\end{array}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/89d97e62ddbb1f3f7268d64c626986fc014e1ac0)

![{\displaystyle {\begin{array}{rl}{\vec {\kappa }}_{1}&={\begin{pmatrix}0\\1\end{pmatrix}}\\{\vec {\kappa }}_{2}&={\begin{pmatrix}-1\\3\end{pmatrix}}-{\mbox{proj}}_{[{\vec {\kappa }}_{1}]}({\begin{pmatrix}-1\\3\end{pmatrix}})={\begin{pmatrix}-1\\3\end{pmatrix}}-{\frac {{\begin{pmatrix}-1\\3\end{pmatrix}}\cdot {\begin{pmatrix}0\\1\end{pmatrix}}}{{\begin{pmatrix}0\\1\end{pmatrix}}\cdot {\begin{pmatrix}0\\1\end{pmatrix}}}}\cdot {\begin{pmatrix}0\\1\end{pmatrix}}={\begin{pmatrix}-1\\3\end{pmatrix}}-{\frac {3}{1}}\cdot {\begin{pmatrix}0\\1\end{pmatrix}}={\begin{pmatrix}-1\\0\end{pmatrix}}\end{array}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/16ebec0b5e7357f508c7dec77b4dfc3068487290)

![{\displaystyle {\begin{array}{rl}{\vec {\kappa }}_{1}&={\begin{pmatrix}0\\1\end{pmatrix}}\\{\vec {\kappa }}_{2}&={\begin{pmatrix}-1\\0\end{pmatrix}}-{\mbox{proj}}_{[{\vec {\kappa }}_{1}]}({\begin{pmatrix}-1\\0\end{pmatrix}})={\begin{pmatrix}-1\\0\end{pmatrix}}-{\frac {{\begin{pmatrix}-1\\0\end{pmatrix}}\cdot {\begin{pmatrix}0\\1\end{pmatrix}}}{{\begin{pmatrix}0\\1\end{pmatrix}}\cdot {\begin{pmatrix}0\\1\end{pmatrix}}}}\cdot {\begin{pmatrix}0\\1\end{pmatrix}}={\begin{pmatrix}-1\\0\end{pmatrix}}-{\frac {0}{1}}\cdot {\begin{pmatrix}0\\1\end{pmatrix}}={\begin{pmatrix}-1\\0\end{pmatrix}}\end{array}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/4b546f7f1a59998fd2dcfe1fbd07b387a58539ec)

![{\displaystyle {\begin{array}{rl}{\vec {\kappa }}_{1}&={\begin{pmatrix}2\\2\\2\end{pmatrix}}\\{\vec {\kappa }}_{2}&={\begin{pmatrix}1\\0\\-1\end{pmatrix}}-{\mbox{proj}}_{[{\vec {\kappa }}_{1}]}({\begin{pmatrix}1\\0\\-1\end{pmatrix}})={\begin{pmatrix}1\\0\\-1\end{pmatrix}}-{\frac {{\begin{pmatrix}1\\0\\-1\end{pmatrix}}\cdot {\begin{pmatrix}2\\2\\2\end{pmatrix}}}{{\begin{pmatrix}2\\2\\2\end{pmatrix}}\cdot {\begin{pmatrix}2\\2\\2\end{pmatrix}}}}\cdot {\begin{pmatrix}2\\2\\2\end{pmatrix}}={\begin{pmatrix}1\\0\\-1\end{pmatrix}}-{\frac {0}{12}}\cdot {\begin{pmatrix}2\\2\\2\end{pmatrix}}={\begin{pmatrix}1\\0\\-1\end{pmatrix}}\\{\vec {\kappa }}_{3}&={\begin{pmatrix}0\\3\\1\end{pmatrix}}-{\mbox{proj}}_{[{\vec {\kappa }}_{1}]}({\begin{pmatrix}0\\3\\1\end{pmatrix}})-{\mbox{proj}}_{[{\vec {\kappa }}_{2}]}({\begin{pmatrix}0\\3\\1\end{pmatrix}})={\begin{pmatrix}0\\3\\1\end{pmatrix}}-{\frac {{\begin{pmatrix}0\\3\\1\end{pmatrix}}\cdot {\begin{pmatrix}2\\2\\2\end{pmatrix}}}{{\begin{pmatrix}2\\2\\2\end{pmatrix}}\cdot {\begin{pmatrix}2\\2\\2\end{pmatrix}}}}\cdot {\begin{pmatrix}2\\2\\2\end{pmatrix}}-{\frac {{\begin{pmatrix}0\\3\\1\end{pmatrix}}\cdot {\begin{pmatrix}1\\0\\-1\end{pmatrix}}}{{\begin{pmatrix}1\\0\\-1\end{pmatrix}}\cdot {\begin{pmatrix}1\\0\\-1\end{pmatrix}}}}\cdot {\begin{pmatrix}1\\0\\-1\end{pmatrix}}\\&\quad ={\begin{pmatrix}0\\3\\1\end{pmatrix}}-{\frac {8}{12}}\cdot {\begin{pmatrix}2\\2\\2\end{pmatrix}}-{\frac {-1}{2}}\cdot {\begin{pmatrix}1\\0\\-1\end{pmatrix}}={\begin{pmatrix}-5/6\\5/3\\-5/6\end{pmatrix}}\end{array}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/533b3e46f7617f45173e26fbaddcd013a459a3d6)

![{\displaystyle {\begin{array}{rl}{\vec {\kappa }}_{1}&={\begin{pmatrix}1\\-1\\0\end{pmatrix}}\\{\vec {\kappa }}_{2}&={\begin{pmatrix}0\\1\\0\end{pmatrix}}-{\mbox{proj}}_{[{\vec {\kappa }}_{1}]}({\begin{pmatrix}0\\1\\0\end{pmatrix}})={\begin{pmatrix}0\\1\\0\end{pmatrix}}-{\frac {{\begin{pmatrix}0\\1\\0\end{pmatrix}}\cdot {\begin{pmatrix}1\\-1\\0\end{pmatrix}}}{{\begin{pmatrix}1\\-1\\0\end{pmatrix}}\cdot {\begin{pmatrix}1\\-1\\0\end{pmatrix}}}}\cdot {\begin{pmatrix}1\\-1\\0\end{pmatrix}}={\begin{pmatrix}0\\1\\0\end{pmatrix}}-{\frac {-1}{2}}\cdot {\begin{pmatrix}1\\-1\\0\end{pmatrix}}={\begin{pmatrix}1/2\\1/2\\0\end{pmatrix}}\\{\vec {\kappa }}_{3}&={\begin{pmatrix}2\\3\\1\end{pmatrix}}-{\mbox{proj}}_{[{\vec {\kappa }}_{1}]}({\begin{pmatrix}2\\3\\1\end{pmatrix}})-{\mbox{proj}}_{[{\vec {\kappa }}_{2}]}({\begin{pmatrix}2\\3\\1\end{pmatrix}})\\&={\begin{pmatrix}2\\3\\1\end{pmatrix}}-{\frac {{\begin{pmatrix}2\\3\\1\end{pmatrix}}\cdot {\begin{pmatrix}1\\-1\\0\end{pmatrix}}}{{\begin{pmatrix}1\\-1\\0\end{pmatrix}}\cdot {\begin{pmatrix}1\\-1\\0\end{pmatrix}}}}\cdot {\begin{pmatrix}1\\-1\\0\end{pmatrix}}-{\frac {{\begin{pmatrix}2\\3\\1\end{pmatrix}}\cdot {\begin{pmatrix}1/2\\1/2\\0\end{pmatrix}}}{{\begin{pmatrix}1/2\\1/2\\0\end{pmatrix}}\cdot {\begin{pmatrix}1/2\\1/2\\0\end{pmatrix}}}}\cdot {\begin{pmatrix}1/2\\1/2\\0\end{pmatrix}}\\&\quad ={\begin{pmatrix}2\\3\\1\end{pmatrix}}-{\frac {-1}{2}}\cdot {\begin{pmatrix}1\\-1\\0\end{pmatrix}}-{\frac {5/2}{1/2}}\cdot {\begin{pmatrix}1/2\\1/2\\0\end{pmatrix}}={\begin{pmatrix}0\\0\\1\end{pmatrix}}\end{array}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/4d80cf98218710d605311cdc628c9f0bb63b3a54)
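Dividing each orthogonal vector by its length gives an orthonormal basis, which is the final step that Problem 1 asks for. The small floating-point sketch below applies this to the vectors (1,−1,0), (1/2,1/2,0), (0,0,1) just computed. It first confirms that they are pairwise orthogonal and then normalizes them. The helper `normalize` is an illustrative name.

```python
import math

def normalize(v):
    """Divide a nonzero vector by its Euclidean length."""
    length = math.sqrt(sum(x * x for x in v))
    return [x / length for x in v]

# The orthogonal vectors computed by hand above.
kappas = [[1, -1, 0], [0.5, 0.5, 0], [0, 0, 1]]

# Every pair should have dot product zero.
for i in range(len(kappas)):
    for j in range(i + 1, len(kappas)):
        assert sum(a * b for a, b in zip(kappas[i], kappas[j])) == 0

orthonormal = [normalize(k) for k in kappas]
for row in orthonormal:
    print([round(x, 4) for x in row])
```

The first vector becomes (1/√2, −1/√2, 0) and the second becomes (1/√2, 1/√2, 0). The third already has length one.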

![{\displaystyle {\begin{array}{rl}{\vec {\kappa }}_{1}&={\begin{pmatrix}1\\1\\0\end{pmatrix}}\\{\vec {\kappa }}_{2}&={\begin{pmatrix}-1\\0\\1\end{pmatrix}}-{\mbox{proj}}_{[{\vec {\kappa }}_{1}]}({\begin{pmatrix}-1\\0\\1\end{pmatrix}})={\begin{pmatrix}-1\\0\\1\end{pmatrix}}-{\frac {{\begin{pmatrix}-1\\0\\1\end{pmatrix}}\cdot {\begin{pmatrix}1\\1\\0\end{pmatrix}}}{{\begin{pmatrix}1\\1\\0\end{pmatrix}}\cdot {\begin{pmatrix}1\\1\\0\end{pmatrix}}}}\cdot {\begin{pmatrix}1\\1\\0\end{pmatrix}}={\begin{pmatrix}-1\\0\\1\end{pmatrix}}-{\frac {-1}{2}}\cdot {\begin{pmatrix}1\\1\\0\end{pmatrix}}={\begin{pmatrix}-1/2\\1/2\\1\end{pmatrix}}\end{array}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/74aaab48f0b91844cc6ed81b2e6e7f03e456abda)
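Gram-Schmidt should never leave the plane it is orthogonalizing. One way to spot-check this answer, sketched below, uses the starting basis (1,1,0), (−1,0,1). Take the cross product of these two vectors to get a normal to their plane, then confirm that κ2 is perpendicular to that normal and to κ1. The cross-product check is an added verification and not part of the original answer. The helper names are illustrative.

```python
from fractions import Fraction

def cross(u, v):
    """Cross product of two vectors in R^3."""
    return [u[1] * v[2] - u[2] * v[1],
            u[2] * v[0] - u[0] * v[2],
            u[0] * v[1] - u[1] * v[0]]

def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))

beta1, beta2 = [1, 1, 0], [-1, 0, 1]
normal = cross(beta1, beta2)  # normal vector of the plane spanned by beta1, beta2
print(normal)  # [1, -1, 1]

kappa1 = beta1
kappa2 = [Fraction(-1, 2), Fraction(1, 2), Fraction(1)]

assert dot(kappa2, normal) == 0  # kappa2 still lies in the plane
assert dot(kappa1, kappa2) == 0  # and it is orthogonal to kappa1
```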

![{\displaystyle {\begin{array}{*{4}{rc}r}x&-&y&-&z&+&w&=&0\\x&&&+&z&&&=&0\end{array}}\;{\xrightarrow[{}]{-\rho _{1}+\rho _{2}}}\;{\begin{array}{*{4}{rc}r}x&-&y&-&z&+&w&=&0\\&&y&+&2z&-&w&=&0\end{array}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/8ccdd18fc5d4c51274d12599d418fb0a3dc3a948)
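The echelon form leaves z and w free. The short sketch below performs the back-substitution, assuming the reduced system shown above. It solves the second row for y, then the first row for x, and checks each result against the two original equations. Setting (z, w) to (1, 0) and then to (0, 1) produces the two vectors used as the starting basis in the next computation. The names `solve` and `satisfies_original` are hypothetical.

```python
def solve(z, w):
    """Back-substitute in the echelon form, with z and w as free parameters."""
    y = -2 * z + w  # from  y + 2z - w = 0
    x = y + z - w   # from  x - y - z + w = 0
    return (x, y, z, w)

def satisfies_original(v):
    """Check both equations of the original (unreduced) system."""
    x, y, z, w = v
    return x - y - z + w == 0 and x + z == 0

basis = [solve(1, 0), solve(0, 1)]
print(basis)  # [(-1, -2, 1, 0), (0, 1, 0, 1)]

# Every choice of the free parameters should solve the original system.
assert all(satisfies_original(solve(z, w)) for z in range(-3, 4) for w in range(-3, 4))
```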

![{\displaystyle {\begin{array}{rl}{\vec {\kappa }}_{1}&={\begin{pmatrix}-1\\-2\\1\\0\end{pmatrix}}\\{\vec {\kappa }}_{2}&={\begin{pmatrix}0\\1\\0\\1\end{pmatrix}}-{\mbox{proj}}_{[{\vec {\kappa }}_{1}]}({\begin{pmatrix}0\\1\\0\\1\end{pmatrix}})={\begin{pmatrix}0\\1\\0\\1\end{pmatrix}}-{\frac {{\begin{pmatrix}0\\1\\0\\1\end{pmatrix}}\cdot {\begin{pmatrix}-1\\-2\\1\\0\end{pmatrix}}}{{\begin{pmatrix}-1\\-2\\1\\0\end{pmatrix}}\cdot {\begin{pmatrix}-1\\-2\\1\\0\end{pmatrix}}}}\cdot {\begin{pmatrix}-1\\-2\\1\\0\end{pmatrix}}={\begin{pmatrix}0\\1\\0\\1\end{pmatrix}}-{\frac {-2}{6}}\cdot {\begin{pmatrix}-1\\-2\\1\\0\end{pmatrix}}={\begin{pmatrix}-1/3\\1/3\\1/3\\1\end{pmatrix}}\end{array}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/1c7233578479e844f787f86beba76edc9cd3c273)
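With only two vectors, the process is a single projection step. The sketch below redoes that step with exact fractions. It also computes the squared lengths, 6 and 4/3, that normalizing would divide out: dividing κ1 by √6 and κ2 by 2/√3 gives an orthonormal basis. The variable names are illustrative.

```python
from fractions import Fraction

def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))

kappa1 = [-1, -2, 1, 0]
beta2 = [0, 1, 0, 1]

# Coefficient of the projection of beta2 onto [kappa1]: (beta2 . kappa1) / (kappa1 . kappa1)
coeff = Fraction(dot(beta2, kappa1), dot(kappa1, kappa1))
kappa2 = [b - coeff * k for b, k in zip(beta2, kappa1)]

print(coeff)                                     # -1/3
print([str(x) for x in kappa2])                  # ['-1/3', '1/3', '1/3', '1']
print(dot(kappa1, kappa2))                       # 0
print(dot(kappa1, kappa1), dot(kappa2, kappa2))  # 6 4/3
```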

![{\displaystyle {\vec {\kappa }}_{1}={\begin{pmatrix}1\\2\end{pmatrix}}\qquad {\vec {\kappa }}_{2}={\begin{pmatrix}3\\6\end{pmatrix}}-{\mbox{proj}}_{[{\vec {\kappa }}_{1}]}({\begin{pmatrix}3\\6\end{pmatrix}})={\begin{pmatrix}0\\0\end{pmatrix}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/2417ad170c7e1941894f60f4f6d44ad4f5a9de4b)
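The zero vector is how the process signals linear dependence. Because (3,6) is already a multiple of (1,2), nothing remains after its projection is removed. The sketch below also shows what would go wrong if the process continued: projecting onto the span of the zero vector requires dividing by κ2·κ2 = 0, which Python reports as `ZeroDivisionError`. The extra vector (1,0) used for that attempt is a hypothetical choice, included only for illustration.

```python
from fractions import Fraction

def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))

def proj(v, k):
    """Projection of v onto the span of k; raises ZeroDivisionError if k is the zero vector."""
    c = Fraction(dot(v, k), dot(k, k))
    return [c * x for x in k]

kappa1 = [1, 2]
beta2 = [3, 6]  # beta2 = 3 * kappa1, so the set is linearly dependent
kappa2 = [b - p for b, p in zip(beta2, proj(beta2, kappa1))]
print([str(x) for x in kappa2])  # ['0', '0']

# A further step would project onto [kappa2], the span of the zero vector.
try:
    proj([1, 0], kappa2)  # hypothetical next vector, chosen only for illustration
except ZeroDivisionError:
    print("cannot project onto the span of the zero vector")
```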

$${\vec {v}}-\left({\mbox{proj}}_{[{\vec {\kappa }}_{1}]}({{\vec {v}}\,})+\dots +{\mbox{proj}}_{[{\vec {\kappa }}_{k}]}({{\vec {v}}\,})\right)$$

$${\mbox{proj}}_{[{\vec {\kappa }}_{1}]}({{\vec {v}}\,})+\dots +{\mbox{proj}}_{[{\vec {\kappa }}_{k}]}({{\vec {v}}\,})$$

$${\vec {\kappa }}_{i}\cdot \left({\vec {v}}-{\mbox{proj}}_{[{\vec {\kappa }}_{1}]}({{\vec {v}}\,})-\dots -{\mbox{proj}}_{[{\vec {\kappa }}_{k}]}({{\vec {v}}\,})\right)$$

![{\displaystyle -{\vec {\kappa }}_{i}\cdot {\mbox{proj}}_{[{\vec {\kappa }}_{j}]}({{\vec {v}}\,})}](https://wikimedia.org/api/rest_v1/media/math/render/svg/77bedca8b82f85c98eec73ae6e2cc5fe4c672b39)

![{\displaystyle {\vec {\kappa }}_{i}\cdot ({\vec {v}}-{\mbox{proj}}_{[{\vec {\kappa }}_{i}]}({{\vec {v}}\,}))}](https://wikimedia.org/api/rest_v1/media/math/render/svg/8513301846f08563f835824ebf14279dd2099a97)

![{\displaystyle {\vec {\kappa }}_{i}\cdot ({\vec {v}}-{\mbox{proj}}_{[{\vec {\kappa }}_{i}]}({{\vec {v}}\,}))={\vec {\kappa }}_{i}\cdot {\vec {v}}-{\vec {\kappa }}_{i}\cdot \left(({\vec {v}}\cdot {\vec {\kappa }}_{i})/({\vec {\kappa }}_{i}\cdot {\vec {\kappa }}_{i})\right)\cdot {\vec {\kappa }}_{i}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/76cfb27fa9f2c4004f022aed593698c64332993d)
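A sketch of the remaining steps follows. It uses only two facts: the dot product is symmetric and linear in each argument, and κ_i is nonzero, so the fraction is defined. Pulling the scalar out of the dot product makes the two terms cancel:

$${\vec {\kappa }}_{i}\cdot \left({\vec {v}}-{\mbox{proj}}_{[{\vec {\kappa }}_{i}]}({{\vec {v}}\,})\right)={\vec {\kappa }}_{i}\cdot {\vec {v}}-{\frac {{\vec {v}}\cdot {\vec {\kappa }}_{i}}{{\vec {\kappa }}_{i}\cdot {\vec {\kappa }}_{i}}}\,({\vec {\kappa }}_{i}\cdot {\vec {\kappa }}_{i})={\vec {\kappa }}_{i}\cdot {\vec {v}}-{\vec {v}}\cdot {\vec {\kappa }}_{i}=0$$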

$${\mbox{proj}}_{[{\vec {\kappa }}_{1}]}({{\vec {v}}\,})+{\mbox{proj}}_{[{\vec {\kappa }}_{2}]}({{\vec {v}}\,})=1\cdot {\vec {e}}_{1}+2\cdot {\vec {e}}_{2}$$

$${\vec {v}}-\left({\mbox{proj}}_{[{\vec {\kappa }}_{1}]}({{\vec {v}}\,})+{\mbox{proj}}_{[{\vec {\kappa }}_{2}]}({{\vec {v}}\,})\right)$$

![{\displaystyle {\begin{array}{rl}{\vec {\kappa }}_{1}&={\begin{pmatrix}1\\5\\-1\end{pmatrix}}\\{\vec {\kappa }}_{2}&={\begin{pmatrix}2\\2\\0\end{pmatrix}}-{\mbox{proj}}_{[{\vec {\kappa }}_{1}]}({\begin{pmatrix}2\\2\\0\end{pmatrix}})={\begin{pmatrix}2\\2\\0\end{pmatrix}}-{\frac {{\begin{pmatrix}2\\2\\0\end{pmatrix}}\cdot {\begin{pmatrix}1\\5\\-1\end{pmatrix}}}{{\begin{pmatrix}1\\5\\-1\end{pmatrix}}\cdot {\begin{pmatrix}1\\5\\-1\end{pmatrix}}}}\cdot {\begin{pmatrix}1\\5\\-1\end{pmatrix}}\\&\quad ={\begin{pmatrix}2\\2\\0\end{pmatrix}}-{\frac {12}{27}}\cdot {\begin{pmatrix}1\\5\\-1\end{pmatrix}}={\begin{pmatrix}14/9\\-2/9\\4/9\end{pmatrix}}\\{\vec {\kappa }}_{3}&={\begin{pmatrix}1\\0\\0\end{pmatrix}}-{\mbox{proj}}_{[{\vec {\kappa }}_{1}]}({\begin{pmatrix}1\\0\\0\end{pmatrix}})-{\mbox{proj}}_{[{\vec {\kappa }}_{2}]}({\begin{pmatrix}1\\0\\0\end{pmatrix}})\\&\quad ={\begin{pmatrix}1\\0\\0\end{pmatrix}}-{\frac {{\begin{pmatrix}1\\0\\0\end{pmatrix}}\cdot {\begin{pmatrix}1\\5\\-1\end{pmatrix}}}{{\begin{pmatrix}1\\5\\-1\end{pmatrix}}\cdot {\begin{pmatrix}1\\5\\-1\end{pmatrix}}}}\cdot {\begin{pmatrix}1\\5\\-1\end{pmatrix}}-{\frac {{\begin{pmatrix}1\\0\\0\end{pmatrix}}\cdot {\begin{pmatrix}14/9\\-2/9\\4/9\end{pmatrix}}}{{\begin{pmatrix}14/9\\-2/9\\4/9\end{pmatrix}}\cdot {\begin{pmatrix}14/9\\-2/9\\4/9\end{pmatrix}}}}\cdot {\begin{pmatrix}14/9\\-2/9\\4/9\end{pmatrix}}\\&\quad ={\begin{pmatrix}1\\0\\0\end{pmatrix}}-{\frac {1}{27}}\cdot {\begin{pmatrix}1\\5\\-1\end{pmatrix}}-{\frac {7}{12}}\cdot {\begin{pmatrix}14/9\\-2/9\\4/9\end{pmatrix}}={\begin{pmatrix}1/18\\-1/18\\-4/18\end{pmatrix}}\end{array}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/0ed178c79a2eae503e08078dfb2ed0d34bc01bb3)
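The fractions in the last step are easy to get wrong by hand. The sketch below recomputes the two projection coefficients for κ3 exactly and checks the result against the vector above. It verifies only the arithmetic and takes κ1 and κ2 as computed there.

```python
from fractions import Fraction as F

def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))

kappa1 = [1, 5, -1]
kappa2 = [F(14, 9), F(-2, 9), F(4, 9)]
beta3 = [1, 0, 0]

c1 = F(dot(beta3, kappa1), dot(kappa1, kappa1))  # coefficient on kappa1
c2 = F(dot(beta3, kappa2), dot(kappa2, kappa2))  # coefficient on kappa2
kappa3 = [b - c1 * k1 - c2 * k2 for b, k1, k2 in zip(beta3, kappa1, kappa2)]

print(c1, c2)                                      # 1/27 7/12
print(kappa3 == [F(1, 18), F(-1, 18), F(-4, 18)])  # True
```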

![{\displaystyle [{{\vec {\beta }}_{1}}]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/559f68d79158bda36daf673222e59c03278ace09)

![{\displaystyle [{{\vec {\beta }}_{2}}]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/715e3540cc050343c92385c8b5cf4736c2633ff9)

![{\displaystyle {\mbox{proj}}_{[{\vec {\kappa }}_{i}]}({{\vec {v}}\,})}](https://wikimedia.org/api/rest_v1/media/math/render/svg/4750ad71c62bcd218538689eec0351b9990cef66)

![{\displaystyle {\vec {v}}={\mbox{proj}}_{[{\vec {\kappa }}_{1}]}({{\vec {v}}\,})+\dots +{\mbox{proj}}_{[{\vec {\kappa }}_{k}]}({{\vec {v}}\,})}](https://wikimedia.org/api/rest_v1/media/math/render/svg/1f14a7fab8047e6f3be3e5da8f2e419199ce045e)

![{\displaystyle {\mbox{proj}}_{[{\vec {\beta }}_{1}]}({\begin{pmatrix}2\\3\end{pmatrix}})={\frac {{\begin{pmatrix}2\\3\end{pmatrix}}\cdot {\begin{pmatrix}1\\1\end{pmatrix}}}{{\begin{pmatrix}1\\1\end{pmatrix}}\cdot {\begin{pmatrix}1\\1\end{pmatrix}}}}\cdot {\begin{pmatrix}1\\1\end{pmatrix}}={\frac {5}{2}}\cdot {\begin{pmatrix}1\\1\end{pmatrix}}\qquad {\mbox{proj}}_{[{\vec {\beta }}_{2}]}({\begin{pmatrix}2\\3\end{pmatrix}})={\frac {{\begin{pmatrix}2\\3\end{pmatrix}}\cdot {\begin{pmatrix}1\\0\end{pmatrix}}}{{\begin{pmatrix}1\\0\end{pmatrix}}\cdot {\begin{pmatrix}1\\0\end{pmatrix}}}}\cdot {\begin{pmatrix}1\\0\end{pmatrix}}={\frac {2}{1}}\cdot {\begin{pmatrix}1\\0\end{pmatrix}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/4aeaf75a1f57cdf79f56ac25932ada69565ecec2)

![{\displaystyle {\mbox{proj}}_{[{\vec {\beta }}_{1}]}({\begin{pmatrix}2\\3\end{pmatrix}})={\frac {{\begin{pmatrix}2\\3\end{pmatrix}}\cdot {\begin{pmatrix}1\\1\end{pmatrix}}}{{\begin{pmatrix}1\\1\end{pmatrix}}\cdot {\begin{pmatrix}1\\1\end{pmatrix}}}}\cdot {\begin{pmatrix}1\\1\end{pmatrix}}={\frac {5}{2}}\cdot {\begin{pmatrix}1\\1\end{pmatrix}}\qquad {\mbox{proj}}_{[{\vec {\beta }}_{2}]}({\begin{pmatrix}2\\3\end{pmatrix}})={\frac {{\begin{pmatrix}2\\3\end{pmatrix}}\cdot {\begin{pmatrix}1\\-1\end{pmatrix}}}{{\begin{pmatrix}1\\-1\end{pmatrix}}\cdot {\begin{pmatrix}1\\-1\end{pmatrix}}}}\cdot {\begin{pmatrix}1\\-1\end{pmatrix}}={\frac {-1}{2}}\cdot {\begin{pmatrix}1\\-1\end{pmatrix}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/2b19de0f4c5c12cf5d4263a9dc7623a5adb49463)
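The two computations above differ only in whether the basis is orthogonal. The small sketch below adds up the projections for both bases to make the contrast concrete. With ⟨(1,1),(1,0)⟩ the sum is (9/2, 5/2), not (2,3). With the orthogonal basis ⟨(1,1),(1,−1)⟩ it is exactly (2,3). The function names are illustrative.

```python
from fractions import Fraction

def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))

def proj(v, k):
    """Orthogonal projection of v onto the line spanned by k."""
    c = Fraction(dot(v, k), dot(k, k))
    return [c * x for x in k]

def sum_of_projections(v, basis):
    """Add up the projections of v onto the lines spanned by each basis vector."""
    total = [Fraction(0)] * len(v)
    for beta in basis:
        total = [t + p for t, p in zip(total, proj(v, beta))]
    return total

v = [2, 3]
print([str(x) for x in sum_of_projections(v, [[1, 1], [1, 0]])])   # not orthogonal
print([str(x) for x in sum_of_projections(v, [[1, 1], [1, -1]])])  # orthogonal
```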

![{\displaystyle [1..i]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/b71f5f5e161659737c558413e7becd62cb33ce6a)

$${\begin{array}{rl}{\vec {\kappa }}_{i+1}&={\vec {\beta }}_{i+1}-{\mbox{proj}}_{[{\vec {\kappa }}_{1}]}({{\vec {\beta }}_{i+1}})-{\mbox{proj}}_{[{\vec {\kappa }}_{2}]}({{\vec {\beta }}_{i+1}})-\dots -{\mbox{proj}}_{[{\vec {\kappa }}_{i}]}({{\vec {\beta }}_{i+1}})\\&={\vec {\beta }}_{i+1}-{\frac {{\vec {\beta }}_{i+1}\cdot {\vec {\kappa }}_{1}}{{\vec {\kappa }}_{1}\cdot {\vec {\kappa }}_{1}}}\cdot {\vec {\kappa }}_{1}-{\frac {{\vec {\beta }}_{i+1}\cdot {\vec {\kappa }}_{2}}{{\vec {\kappa }}_{2}\cdot {\vec {\kappa }}_{2}}}\cdot {\vec {\kappa }}_{2}-\dots -{\frac {{\vec {\beta }}_{i+1}\cdot {\vec {\kappa }}_{i}}{{\vec {\kappa }}_{i}\cdot {\vec {\kappa }}_{i}}}\cdot {\vec {\kappa }}_{i}\end{array}}$$

![{\displaystyle {\vec {\kappa }}_{i+1}={\vec {\beta }}_{i+1}-{\mbox{proj}}_{[{\vec {\kappa }}_{1}]}({{\vec {\beta }}_{i+1}})-\dots -{\mbox{proj}}_{[{\vec {\kappa }}_{i}]}({{\vec {\beta }}_{i+1}})}](https://wikimedia.org/api/rest_v1/media/math/render/svg/eb4342e32110b852a4c05ec1e3d21a7ce7640366)

![{\displaystyle {\vec {\kappa }}_{j}\cdot \left({\vec {\beta }}_{i+1}-{\mbox{proj}}_{[{\vec {\kappa }}_{j}]}({{\vec {\beta }}_{i+1}})\right)}](https://wikimedia.org/api/rest_v1/media/math/render/svg/9773fd8e5d2a5987040c1e8437e5793ecf8e2371)

![{\displaystyle {\vec {\kappa }}_{j}\cdot {\mbox{proj}}_{[{\vec {\kappa }}_{m}]}({{\vec {\beta }}_{i+1}})}](https://wikimedia.org/api/rest_v1/media/math/render/svg/089ddd8193430b85920a51b95644c33551677198)
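The induction above argues that each new κ_{i+1} is orthogonal to every earlier κ_j. The sketch below offers an empirical sanity check, not a proof. It runs the process with exact fractions on random square integer matrices whose rows serve as candidate bases. It skips any trial that produces a zero κ, since that indicates a dependent input rather than a basis. In the remaining trials it asserts that every pair of outputs has dot product zero. The random sizes, entry range, and seed are arbitrary choices, and the helper names are illustrative.

```python
import random
from fractions import Fraction

def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))

def gram_schmidt(basis):
    """kappa_{i+1} = beta_{i+1} minus its projections onto kappa_1, ..., kappa_i.
    Returns None if some kappa is zero (the input was linearly dependent)."""
    kappas = []
    for beta in basis:
        kappa = [Fraction(x) for x in beta]
        for k in kappas:
            c = Fraction(dot(beta, k), dot(k, k))
            kappa = [a - c * b for a, b in zip(kappa, k)]
        if not any(kappa):
            return None
        kappas.append(kappa)
    return kappas

random.seed(1)
checked = 0
for _ in range(200):
    n = random.randint(2, 5)
    basis = [[random.randint(-5, 5) for _ in range(n)] for _ in range(n)]
    kappas = gram_schmidt(basis)
    if kappas is None:
        continue  # dependent input; the theorem assumes a basis
    checked += 1
    for j in range(n):
        for m in range(j + 1, n):
            assert dot(kappas[j], kappas[m]) == 0
print("orthogonal in every one of", checked, "trials")
```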