Auditory Signal Processing

Now that the anatomy of the auditory system has been sketched out, this topic goes deeper into the physiological processes that take place when acoustic information is perceived and converted into signals the brain can handle. Hearing starts with pressure waves entering the auditory canal and ends with perception in the brain. This section details the process that transforms vibrations into perception.

Effect of the head

Sound waves with a wavelength shorter than the head produce a sound shadow at the ear farther from the sound source. When the wavelength is longer than the head, diffraction of the sound leads to approximately equal sound intensities at both ears.
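The comparison between wavelength and head size can be made concrete with a few lines of arithmetic. The following is a minimal sketch, assuming a speed of sound of 343 m/s and a head width of 0.18 m; both values and the function names are illustrative assumptions, not figures from the text.

```python
SPEED_OF_SOUND = 343.0  # m/s in air at about 20 degrees C (assumed)
HEAD_DIAMETER = 0.18    # m, assumed typical adult head width


def wavelength(frequency_hz, c=SPEED_OF_SOUND):
    """Wavelength in metres of a tone with the given frequency."""
    return c / frequency_hz


def casts_head_shadow(frequency_hz, head_diameter=HEAD_DIAMETER):
    """True if the wavelength is shorter than the head, so a sound shadow forms."""
    return wavelength(frequency_hz) < head_diameter


for f in (200, 1000, 4000, 10000):
    print(f"{f:>6} Hz: wavelength = {wavelength(f):.3f} m, "
          f"shadow = {casts_head_shadow(f)}")
```

With these assumed values the crossover lies a little below 2 kHz: lower tones diffract around the head, higher tones are shadowed.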

Sound reception at the pinna

The pinna collects sound waves in air; its corrugated shape affects sound coming from behind and from the front differently. The sound waves are reflected and attenuated or amplified. These changes later help with sound localization.

In the external auditory canal, sounds between 3 and 12 kHz, a range crucial for human communication, are amplified. The canal acts as a resonator that amplifies the incoming frequencies.

Sound conduction to the cochlea

Sound that entered the pinna in the form of waves travels along the auditory canal until it reaches the tympanic membrane (eardrum), which marks the beginning of the middle ear. Since the inner ear is filled with fluid, the middle ear acts as an impedance matching device that reduces the reflection of sound energy at the transition from air to fluid. As an example, at the transition from air to water 99.9% of the incoming sound energy is reflected. This can be calculated using:

$$\frac{I_r}{I_i} = \left( \frac{Z_2 - Z_1}{Z_2 + Z_1} \right)^2$$

with $I_r$ the intensity of the reflected sound, $I_i$ the intensity of the incoming sound and $Z_k$ the wave resistance of the two media ($Z_{air} = 414$ kg m⁻² s⁻¹ and $Z_{water} = 1.48 \times 10^6$ kg m⁻² s⁻¹). Three factors that contribute to the impedance matching are:

- the relative size difference between tympanum and oval window

- the lever effect of the middle ear ossicles and

- the shape of the tympanum.
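To see why impedance matching is needed at all, the 99.9% figure for a direct air to water transition can be checked numerically. This is a minimal sketch using the standard intensity reflection formula for normal incidence and the two wave resistances quoted in the text; the function names are illustrative.

```python
import math

Z_AIR = 414.0     # kg m^-2 s^-1, value from the text
Z_WATER = 1.48e6  # kg m^-2 s^-1, value from the text


def reflected_fraction(z1, z2):
    """Fraction of the incoming intensity reflected at a z1 -> z2 boundary."""
    return ((z2 - z1) / (z2 + z1)) ** 2


def transmission_loss_db(z1, z2):
    """Loss in dB of the transmitted intensity relative to the incoming one."""
    transmitted = 1.0 - reflected_fraction(z1, z2)
    return -10.0 * math.log10(transmitted)


r = reflected_fraction(Z_AIR, Z_WATER)
loss = transmission_loss_db(Z_AIR, Z_WATER)
print(f"reflected fraction: {100 * r:.2f} %")
print(f"transmission loss:  {loss:.1f} dB")
```

Only about 0.1% of the energy would cross the boundary unaided, a loss of roughly 30 dB.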

The longitudinal changes in air pressure of the sound wave cause the tympanic membrane to vibrate, which in turn makes the three chained ossicles (malleus, incus and stapes) oscillate synchronously. These bones vibrate as a unit, transmitting the energy from the tympanic membrane to the oval window. In addition, the sound pressure is further increased by the difference in area between the tympanic membrane and the stapes footplate. The middle ear acts as an impedance transformer by changing the sound energy collected by the tympanic membrane into greater force and less excursion. This mechanism facilitates the transmission of sound waves in air into vibrations of the fluid in the cochlea. The transformation results from the piston-like in-and-out motion of the stapes footplate, which is located in the oval window. This movement of the footplate sets the fluid in the cochlea into motion.
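The size of this transformer effect can be estimated from the area ratio and the lever ratio. The sketch below is a rough illustration only: the two areas and the lever ratio are assumed textbook-order values that do not appear in the text, and the shape of the tympanum is ignored.

```python
import math

# Assumed order-of-magnitude values, not taken from the text:
TYMPANUM_AREA_MM2 = 55.0   # effective vibrating area of the tympanic membrane
FOOTPLATE_AREA_MM2 = 3.2   # area of the stapes footplate
LEVER_RATIO = 1.3          # force gain from the ossicular lever


def pressure_gain(area_in, area_out, lever_ratio):
    """Pressure ratio between footplate and tympanic membrane.

    The same force acting on a smaller area gives a higher pressure,
    and the lever multiplies the force once more.
    """
    return (area_in / area_out) * lever_ratio


def gain_db(pressure_ratio):
    """Express a pressure ratio in decibels."""
    return 20.0 * math.log10(pressure_ratio)


gain = pressure_gain(TYMPANUM_AREA_MM2, FOOTPLATE_AREA_MM2, LEVER_RATIO)
print(f"pressure gain: {gain:.1f}x, i.e. {gain_db(gain):.1f} dB")
```

Under these assumptions the gain is in the high twenties of dB, which recovers most of the roughly 30 dB that would be lost if 99.9% of the energy were reflected at a direct air to fluid boundary.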

Through the stapedius muscle, the smallest muscle in the human body, the middle ear has a gating function: contracting this muscle changes the impedance of the middle ear, thus protecting the inner ear from damage through loud sounds.

Frequency analysis in the cochlea

The three fluid-filled compartments of the cochlea (scala vestibuli, scala media, scala tympani) are separated by the basilar membrane and Reissner’s membrane. The function of the cochlea is to separate sounds according to their spectrum and transform them into a neural code.

When the footplate of the stapes pushes into the perilymph of the scala vestibuli, Reissner’s membrane bends into the scala media. This deflection of Reissner’s membrane causes the endolymph to move within the scala media and induces a displacement of the basilar membrane.

The separation of the sound frequencies in the cochlea is due to the special properties of the basilar membrane. The fluid in the cochlea vibrates (due to the in-and-out motion of the stapes footplate), setting the membrane in motion like a traveling wave. The wave starts at the base and progresses towards the apex of the cochlea. The transverse waves in the basilar membrane propagate with the speed

$$c_{trans} = \sqrt{\frac{\mu}{\rho}}$$

with $\mu$ the shear modulus and $\rho$ the density of the material. Since the width and tension of the basilar membrane change, the speed of the waves propagating along the membrane changes from about 100 m/s near the oval window to 10 m/s near the apex.
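The square-root relation between wave speed and shear modulus means that a tenfold drop in speed corresponds to a hundredfold drop in effective stiffness, if the density stays constant. The following sketch illustrates this; the density of 1000 kg/m³ (roughly that of water) is an assumption, and the resulting moduli are effective values for illustration rather than measured properties.

```python
import math

DENSITY = 1000.0  # kg/m^3, assumed to be roughly that of water


def transverse_wave_speed(shear_modulus, density):
    """Speed of a transverse wave: c = sqrt(mu / rho)."""
    return math.sqrt(shear_modulus / density)


def shear_modulus_for_speed(speed, density):
    """Invert c = sqrt(mu / rho) to get the effective shear modulus."""
    return density * speed ** 2


mu_base = shear_modulus_for_speed(100.0, DENSITY)  # near the oval window
mu_apex = shear_modulus_for_speed(10.0, DENSITY)   # near the apex
print(f"effective stiffness ratio base/apex: {mu_base / mu_apex:.0f}")
```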

There is a point along the basilar membrane beyond which the amplitude of the wave decreases abruptly. At this point, the sound wave in the cochlear fluid produces the maximal displacement (peak amplitude) of the basilar membrane. The distance the wave travels before reaching that characteristic point depends on the frequency of the incoming sound. Therefore each point of the basilar membrane corresponds to a specific value of the stimulating frequency. A low-frequency sound travels a longer distance than a high-frequency sound before it reaches its characteristic point. Frequencies are thus mapped along the basilar membrane, with high frequencies at the base and low frequencies at the apex of the cochlea.

Identifying frequency by the location of the maximum displacement of the basilar membrane is called tonotopic encoding of frequency. It solves two problems at once:

- It parallelizes the subsequent processing of frequency. This tonotopic encoding is maintained all the way up to the cortex.

- Our nervous system transmits information with action potentials, whose firing rates are limited to less than 500 Hz. Through tonotopic encoding, higher frequencies can also be represented accurately.
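The mapping from place to frequency can be illustrated with the Greenwood function, an empirical fit that is commonly used for the human cochlea. It is not part of the text above and is brought in here purely for illustration; the constants are the commonly quoted human values, with the position measured as a fraction of the membrane length from the apex.

```python
import math

# Commonly quoted Greenwood constants for the human cochlea (assumed here):
A_HZ = 165.4
SLOPE = 2.1
K = 0.88


def place_to_frequency(x_from_apex):
    """Characteristic frequency in Hz at relative position x (0 = apex, 1 = base)."""
    return A_HZ * (10.0 ** (SLOPE * x_from_apex) - K)


def frequency_to_place(frequency_hz):
    """Relative position (0 = apex, 1 = base) where a frequency peaks."""
    return math.log10(frequency_hz / A_HZ + K) / SLOPE


for f in (100, 1000, 10000):
    x = frequency_to_place(f)
    print(f"{f:>6} Hz peaks at {100 * (1 - x):.0f} % of the length from the base")
```

In this fit, as in the description above, high frequencies peak near the base and low frequencies near the apex, and the covered range is roughly 20 Hz to 20 kHz.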

Sensory transduction in the cochlea

Most everyday sounds are composed of multiple frequencies. The brain processes the distinct frequencies, not the complete sounds. Due to its inhomogeneous properties, the basilar membrane performs an approximation of a Fourier transform. The sound is thereby split into its different frequencies, and each hair cell on the membrane corresponds to a certain frequency. The loudness of each frequency is encoded by the firing rate of the corresponding afferent fiber, because the amplitude of the traveling wave on the basilar membrane depends on the loudness of the incoming sound.
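The idea of splitting a sound into its frequency components can be sketched with a discrete Fourier transform. The example below is only an analogy to what the basilar membrane does mechanically: it builds a test signal from two tones (440 Hz and 1000 Hz, chosen arbitrarily) and recovers their frequencies and amplitudes. The sample rate, window length and function name are assumptions for illustration.

```python
import cmath
import math

SAMPLE_RATE = 8000  # Hz, assumed
N_SAMPLES = 400     # 50 ms window, so frequency bins are 20 Hz apart


def dft_amplitudes(samples):
    """Amplitude per frequency bin below Nyquist (naive O(N^2) DFT).

    The factor 2/N converts the DFT magnitude into the amplitude of a
    sinusoid for every bin except the constant (k = 0) term.
    """
    n = len(samples)
    amplitudes = []
    for k in range(n // 2):
        acc = sum(x * cmath.exp(-2j * math.pi * k * t / n)
                  for t, x in enumerate(samples))
        amplitudes.append(2.0 * abs(acc) / n)
    return amplitudes


# Test sound: a loud 440 Hz tone plus a quieter 1000 Hz tone.
signal = [1.0 * math.sin(2 * math.pi * 440 * t / SAMPLE_RATE)
          + 0.5 * math.sin(2 * math.pi * 1000 * t / SAMPLE_RATE)
          for t in range(N_SAMPLES)]

amplitudes = dft_amplitudes(signal)
components = [(k * SAMPLE_RATE / N_SAMPLES, a)
              for k, a in enumerate(amplitudes) if a > 0.1]
for freq, amp in components:
    print(f"{freq:.0f} Hz: amplitude {amp:.2f}")
```

Each bin plays the role of one place on the membrane, and the amplitude in that bin corresponds to the loudness that the firing rate of the associated fiber would encode.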

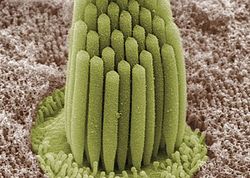

The sensory cells of the auditory system, known as hair cells, are located along the basilar membrane within the organ of Corti. Each organ of Corti contains about 16,000 such cells, innervated by about 30,000 afferent nerve fibers. There are two anatomically and functionally distinct types of hair cells: the inner and the outer hair cells. Along the basilar membrane these two types are arranged in one row of inner cells and three to five rows of outer cells. Most of the afferent innervation comes from the inner hair cells, while most of the efferent innervation goes to the outer hair cells. The inner hair cells influence the discharge rate of the individual auditory nerve fibers that connect to them, and thereby transfer sound information to higher auditory centers. The outer hair cells, in contrast, amplify the movement of the basilar membrane by injecting energy into its motion and reducing frictional losses, but do not contribute to transmitting sound information.

The motion of the basilar membrane deflects the stereocilia (hairs on the hair cells) and causes the intracellular potential of the hair cells to become less negative (depolarization) or more negative (hyperpolarization), depending on the direction of the deflection. When the stereocilia are in their resting position, a steady-state current flows through the channels of the cells. The movement of the stereocilia therefore modulates the current flow around that steady-state value.

Let's look at the modes of action of the two different hair cell types separately:

- Inner hair cells:

The deflection of the hair-cell stereocilia opens mechanically gated ion channels that allow small, positively charged potassium ions (K⁺) to enter the cell, causing it to depolarize. Unlike many other electrically active cells, the hair cell itself does not fire an action potential. Instead, the influx of positive ions from the endolymph in the scala media depolarizes the cell, resulting in a receptor potential. This receptor potential opens voltage-gated calcium channels; calcium ions (Ca²⁺) then enter the cell and trigger the release of neurotransmitters at the basal end of the cell. The neurotransmitters diffuse across the narrow space between the hair cell and a nerve terminal, where they bind to receptors and thus trigger action potentials in the nerve. In this way, the neurotransmitter increases the firing rate in the VIIIth cranial nerve, and the mechanical sound signal is converted into an electrical nerve signal.

The repolarization of the hair cell happens in a special manner. The perilymph in the scala tympani has a very low concentration of potassium ions. The electrochemical gradient makes the potassium ions flow out of the cell through channels into the perilymph. (see also: Wikipedia Hair cell)

- Outer hair cells:

In human outer hair cells, the receptor potential triggers active vibrations of the cell body. This mechanical response to electrical signals is termed somatic electromotility and drives oscillations in the cell’s length, which occur at the frequency of the incoming sound and provide mechanical feedback amplification. Outer hair cells have evolved only in mammals. Without functioning outer hair cells the sensitivity decreases by approximately 50 dB (due to greater frictional losses in the basilar membrane, which would damp the motion of the membrane). Outer hair cells also improve frequency selectivity (frequency discrimination), which is of particular benefit for humans because it enables sophisticated speech and music. (see also: Wikipedia Hair cell)

With no external stimulation, auditory nerve fibres discharge action potentials in a random time sequence. This random time firing is called spontaneous activity. The spontaneous discharge rates of the fibers vary from very slow rates to rates of up to 100 per second. Fibers are placed into three groups depending on whether they fire spontaneously at high, medium or low rates. Fibers with high spontaneous rates (> 18 per second) tend to be more sensitive to sound stimulation than other fibers.

Auditory pathway of nerve impulses

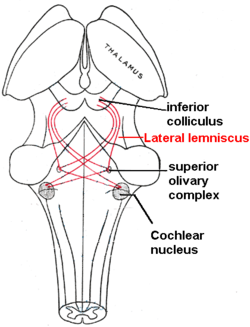

In the inner hair cells, the mechanical sound signal is thus finally converted into electrical nerve signals. The inner hair cells are connected to auditory nerve fibers whose cell bodies form the spiral ganglion. In the spiral ganglion the electrical signals (electrical spikes, action potentials) are generated and transmitted along the cochlear branch of the auditory nerve (VIIIth cranial nerve) to the cochlear nucleus in the brainstem.

From there, the auditory information is divided into at least two streams:

- Ventral Cochlear Nucleus:

One stream is the ventral cochlear nucleus which is split further into the posteroventral cochlear nucleus (PVCN) and the anteroventral cochlear nucleus (AVCN). The ventral cochlear nucleus cells project to a collection of nuclei called the superior olivary complex.

Superior olivary complex: Sound localization

The superior olivary complex, a small mass of gray matter, is believed to be involved in the localization of sounds in the azimuthal plane (i.e. their angle to the left or the right). There are two major cues for sound localization: interaural level differences (ILD) and interaural time differences (ITD). The ILD measures differences in sound intensity between the ears. This works for high frequencies (over 1.6 kHz), where the wavelength is shorter than the distance between the ears, causing a head shadow: high-frequency sounds reach the averted ear with lower intensity. Lower-frequency sounds do not cast a shadow, since they wrap around the head. However, because the wavelength is larger than the distance between the ears, there is a phase difference between the sound waves entering the two ears, which is the timing difference measured by the ITD. This works very precisely for frequencies below 800 Hz, where the ear distance is smaller than half of the wavelength. Sound localization in the median plane (front, above, back, below) is aided by the outer ear, which forms direction-selective filters.

In the superior olivary complex, the differences in timing and loudness of the sound information at each ear are compared. Differences in sound intensity are processed in cells of the lateral superior olivary complex, and timing differences (runtime delays) in the medial superior olivary complex. Humans can detect timing differences between the left and right ear down to 10 μs, corresponding to a difference in sound location of about 1 deg. This comparison of sound information from both ears allows the determination of the direction the sound came from. The superior olive is the first node where signals from both ears come together and can be compared. As a next step, the superior olivary complex sends information up to the inferior colliculus via a tract of axons called the lateral lemniscus. The function of the inferior colliculus is to integrate information before sending it to the thalamus and the auditory cortex. Interestingly, the nearby superior colliculus shows an interaction of auditory and visual stimuli.
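The link between a 10 μs timing difference and about 1 deg of azimuth can be checked with a simple path-difference model. The sketch below treats the ears as two points in free space and ignores diffraction around the head, so it is only a first approximation. The ear distance of 0.21 m is an assumption, chosen because it roughly equals the wavelength at 1.6 kHz; the speed of sound of 343 m/s is also assumed.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, assumed
EAR_DISTANCE = 0.21     # m, assumed (about one wavelength at 1.6 kHz)


def itd_seconds(azimuth_deg, ear_distance=EAR_DISTANCE, c=SPEED_OF_SOUND):
    """Interaural time difference for a distant source at the given azimuth.

    Azimuth 0 is straight ahead, 90 is directly to one side.
    """
    return ear_distance * math.sin(math.radians(azimuth_deg)) / c


def azimuth_from_itd(itd, ear_distance=EAR_DISTANCE, c=SPEED_OF_SOUND):
    """Invert the model: azimuth in degrees that produces a given ITD."""
    return math.degrees(math.asin(itd * c / ear_distance))


for angle in (1, 10, 45, 90):
    print(f"{angle:>3} deg -> ITD = {itd_seconds(angle) * 1e6:.1f} microseconds")
print(f"10 microseconds -> {azimuth_from_itd(10e-6):.2f} deg")
```

Under these assumptions a source 1 deg off the midline produces an ITD of roughly 10 μs, consistent with the resolution quoted above, and the largest possible ITD (source directly to one side) is on the order of 0.6 ms.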

- Dorsal Cochlear Nucleus:

The dorsal cochlear nucleus (DCN) analyzes the quality of sound and projects directly via the lateral lemniscus to the inferior colliculus.

From the inferior colliculus, the auditory information from both the ventral and the dorsal cochlear nucleus proceeds to the auditory nucleus of the thalamus, the medial geniculate nucleus. The medial geniculate nucleus in turn transfers the information to the primary auditory cortex, the region of the human brain that is responsible for processing auditory information, located in the temporal lobe. The primary auditory cortex is the first relay involved in the conscious perception of sound.

Primary auditory cortex and higher order auditory areas

Sound information reaches the primary auditory cortex (Brodmann areas 41 and 42), which is known to be tonotopically organized and performs the basics of hearing: pitch and volume. Depending on the nature of the sound (speech, music, noise), the information is passed on to higher order auditory areas. Sounds that are words are processed by Wernicke’s area (Brodmann area 22). This area is involved in understanding written and spoken language (verbal understanding). The production of sound (verbal expression) is linked to Broca’s area (Brodmann areas 44 and 45). The muscles that produce the required sounds during speech are controlled by the facial area of the motor cortex, the region of the cerebral cortex involved in planning, controlling and executing voluntary motor functions.