Microprocessor Design

This book serves as an introduction to the field of microprocessor design and implementation. It is intended for students in computer science or computer or electrical engineering who are in the third or fourth years of an undergraduate degree. While the focus of this book will be on microprocessors, many of the concepts will apply to other ASIC design tasks as well.

The reader should have prior knowledge of Digital Circuits and possibly some background in Semiconductors, although the latter isn't strictly necessary. The reader should also know at least one Assembly Language. Knowledge of higher-level languages such as C or C++ may be useful as well, but is not required. Sections about soft-core design will require prior knowledge of Programmable Logic and of at least one HDL.

Introduction

About This Book

Computers and computer systems are a pervasive part of the modern world. Aside from the common desktop PC, there are a number of other types of specialized computer systems that pop up in many different places. The central component of these computers and computer systems is the microprocessor, or the CPU. The CPU (short for "Central Processing Unit") is essentially the brains behind the computer system; it is the component that "computes". This book is going to discuss what microprocessor units do, how they do it, and how they are designed.

This book is going to discuss the design of microprocessor units, but it will not discuss the design of complete computer systems nor the design of other computer components or peripherals. Some microprocessor designs will be implemented and synthesized in Hardware Description Languages, such as Verilog or VHDL. The book will be organized to discuss simple designs and concepts first, and expand the initial designs to include more complicated concepts as the book progresses.

This book will attempt to discuss the basic concepts and theory of microprocessor design from an abstract level, and give real-world examples as necessary. This book will not focus on studying any particular processor architecture, although several of the most common architectures will appear frequently in examples and notes.

How Will This Book Be Organized?

The first section of the book will review computer architecture, and will give a brief overview of the components of a computer, the components of a microprocessor, and some of the basic architectures of modern microprocessors.

The second section will discuss in some detail the individual components of a microcontroller, what they do, and how they are designed.

The third section will focus on the ALU and FPU, and will discuss the implementation of particular mathematical operations.

The fourth section will discuss the various design paradigms, starting with the simplest single-cycle machine and progressing to more complicated, exotic architectures such as vector and VLIW machines.

Additional chapters will serve as extensions and support chapters for concepts discussed in the first four sections.

Prerequisites

This book will rely on some important background information that is currently covered in a number of other local wikibooks. Readers of this book will find the following prerequisites important to understand the material in this book:

All readers must be familiar with binary numbers and also hexadecimal numbers. These notations will be used throughout the book without any prior explanation. Readers of this book should be familiar with at least one assembly language, and should also be familiar with a hardware description language. This book will use both types of languages in the main narrative of the text without offering explanation beforehand. Appendices might be included that contain primers on this material.

Readers of this book will also find some pieces of software helpful in examples. Specifically, assemblers and assembly language simulators will help with many of the examples. Likewise, HDL compilers and simulators will be useful in the design examples. If free versions of these software programs can be found, links will be added in an appendix.

Who Is This Book For?

This book is designed to accompany an advanced undergraduate or graduate study in the field of microprocessor design. Students in the areas of Electrical Engineering, Computer Engineering, or Computer Science will likely find this book to be the most useful. The basic subjects in this field will be covered, and more advanced topics will be included depending on the proficiencies of the authors. Many of the topics considered in this book will apply to the design of many different types of digital hardware, including ASICs. However, the main narrative of the book, and the ultimate goals of the book will be focused on microcontrollers and microprocessors, not other ASICs.

What This Book Will Not Cover

This book is about the design of micro-controllers and microprocessors only. This book will not cover the following topics in any detail, although some mention might be made of them as a matter of interest:

- Transistor mechanics, semiconductors, or integrated circuit fabrication (Microtechnology)

- Digital Circuit Logic, Design or Layout (Programmable Logic)

- Design or interfacing with other computer components or peripherals (Embedded Systems)

- Design or implementation of communication protocols used to communicate between computer components (Serial Programming)

- Design or creation of computer software (Computer Programming)

- Design of System-on-a-Chip hardware or any device with an integrated micro-controller

- How to select an off-the-shelf CPU (How To Assemble A Desktop PC/Choosing the parts/CPU)

Terminology

Throughout the book, the words "Microprocessor", "Microcontroller", "Processor", and "CPU" will all generally be used interchangeably to denote a digital processing element capable of performing arithmetic and quantitative comparisons. We may differentiate between these terms in individual sections, but an explanation of the differences will always be provided.

Microprocessor Basics

Microprocessors

Microprocessors are the devices in a computer which make things happen. Microprocessors are capable of performing basic arithmetic operations, moving data from place to place, and making basic decisions based on the quantity of certain values.

Types of Processors

The vast majority of microprocessors can be found in embedded microcontrollers. The second most common type of processors are common desktop processors, such as Intel's Pentium or AMD's Athlon. Less common are the extremely powerful processors used in high-end servers, such as Sun's SPARC, IBM's Power, or Intel's Itanium.

Historically, microprocessors and microcontrollers have come in "standard sizes" of 8 bits, 16 bits, 32 bits, and 64 bits. These sizes are common, but that does not mean that other sizes are not available. Some microcontrollers (usually specially designed embedded chips) come in other "non-standard" sizes such as 4 bits, 12 bits, 18 bits, or 24 bits. The number of bits is often taken to indicate how much physical memory can be directly addressed by the CPU, and also how many bits can be transferred in one read or write operation. These two widths are not always the same; for instance, many 8-bit microprocessors have an 8-bit data bus and a 16-bit address bus. The figures below assume that both widths equal the nominal size:

- 8 bit processors can read/write 1 byte at a time and can directly address 256 bytes

- 16 bit processors can read/write 2 bytes at a time, and can address 65,536 bytes (64 Kilobytes)

- 32 bit processors can read/write 4 bytes at a time, and can address 4,294,967,296 bytes (4 Gigabytes)

- 64 bit processors can read/write 8 bytes at a time, and can address 18,446,744,073,709,551,616 bytes (16 Exabytes)

General Purpose Versus Specific Use

Microprocessors that are capable of performing a wide range of tasks are called general purpose microprocessors. General purpose microprocessors are typically the kind of CPUs found in desktop computer systems. These chips typically handle integer and floating point arithmetic, an external memory interface, general I/O, and so on. We will discuss some of the other types of processor units available:

- General Purpose

- A general purpose processing unit, typically referred to as a "microprocessor", is a chip that is designed to be integrated into a larger system with peripherals and external RAM. These chips can typically be used with a very wide array of software.

- DSP

- A Digital Signal Processor, or DSP for short, is a chip that is specifically designed for fast arithmetic operations, especially addition and multiplication. These chips are designed with processing speed in mind, and don't typically have the same flexibility as general purpose microprocessors. DSPs also have special address generation units that can manage circular buffers, perform bit-reversed addressing, and simultaneously access multiple memory spaces with little to no overhead. They also support zero-overhead looping, and a single-cycle multiply-accumulate instruction. They are not typically more powerful than general purpose microprocessors, but can perform signal processing tasks using far less power (as in watts).

- Embedded Controller

- Embedded controllers, or "microcontrollers" are microprocessors with additional hardware integrated into a single chip. Many microcontrollers have RAM, ROM, A/D and D/A converters, interrupt controllers, timers, and even oscillators built into the chip itself. These controllers are designed to be used in situations where a whole computer system isn't available, and only a small amount of simple processing needs to be performed.

- Programmable State Machines

- The simplest of processors, programmable state machines (PSMs) are minimalist microprocessors designed for very small and simple operations. PSMs typically have a very small amount of program ROM available and limited scratch-pad RAM, and they are also typically limited in the type and number of instructions that they can perform. PSMs can either be used stand-alone, or (more frequently) they are embedded directly into the design of a larger chip.

- Graphics Processing Units

- Computer graphics are so complicated that functions to process the visuals of video and game applications have been offloaded to a special type of processor known as a GPU. GPUs typically require specialized hardware to implement matrix multiplications and vector arithmetic. GPUs are typically also highly parallelized, performing shading calculations on multiple pixels and surfaces simultaneously.

Types of Use

Microcontrollers and Microprocessors are used for a number of different types of applications. People may be the most familiar with the desktop PC, but the fact is that desktop PCs make up only a small fraction of all microprocessors in use today. We will list here some of the basic uses for microprocessors:

- Signal Processing

- Signal processing is an area that demands high performance from microcontroller chips to perform complex mathematical tasks. Signal processing systems typically need to have low latency, and are very deadline driven. An example of a signal processing application is the decoding of digital television and radio signals.

- Real Time Applications

- Some tasks need to be performed so quickly that even the slightest delay or inefficiency can be detrimental. These applications are known as "real time systems", and timing is of the utmost importance. An example of a real-time system is the anti-lock braking system (ABS) controller in modern automobiles.

- Throughput and Routing

- Throughput and routing is the use of a processor where data is moved from one particular input to an output, without necessarily requiring any processing. An example is an Internet router, which reads in data packets and sends them out on a different port.

- Sensor monitoring

- Many processors, especially small embedded processors, are used to monitor sensors. The microprocessor will either digitize and filter the sensor signals, or it will read the signals and produce status outputs (the sensor is good, the sensor is bad). An example of a sensor monitoring processor is the processor inside an antilock brake system: this processor reads the brake sensor to determine when the brakes have locked up, and then outputs a control signal to activate the rest of the system.

- General Computing

- A general purpose processor is like the kind of processor that is typically found inside a desktop PC. Names such as Intel and AMD are typically associated with this type of processor, and this is also the kind of processor that the public is most familiar with.

- Graphics

- Processing of digital graphics is an area where specialized processor units are frequently employed. With the advent of digital television, graphics processors are becoming more common. Graphics processors need to be able to perform multiple simultaneous operations. In digital video, for instance, a million pixels or more will need to be processed for every single frame, and a particular signal may have 60 frames per second! To the benefit of graphics processors, the color value of a pixel is typically not dependent on the values of surrounding pixels, and therefore many pixels can typically be computed in parallel.

Abstraction Layers

Computer systems are developed in layers known as layers of abstraction. Layers of abstraction allow people to develop computer components (hardware and software) without having to worry about the internal design of the other layers in the system. At the highest level are the user-interface programs that people use on their computers. At the lowest level are the transistor layouts of the individual computer components. Some of the layers in a computer system are (listed from highest to lowest):

- Application

- Operating System

- Firmware

- Instruction Set Architecture

- Microprocessor Control Logic

- Physical Circuit Layout

This book will be mostly concerned with the Instruction Set Architecture (ISA) and the Microprocessor Control Logic, but we will also describe the Operating System (OS) in brief. Topics above these are typically the realm of computer programmers. The bottom layer, the Physical Circuit Layout, is the job of hardware and VLSI engineers.

Operating System

An operating system is a program that acts as an interface between the system user and the computer hardware and controls the execution of application programs. The core of the operating system, which runs at all times on the computer, is usually called the kernel.

ISA

The Instruction Set Architecture is a long name for the assembly language of a particular machine, and the associated machine code for that assembly language. We will discuss this below.

Assembly Language

An assembly language is a small language that contains a short word or "mnemonic" for each individual command that a microcontroller can follow. Each command gets a single mnemonic, and each mnemonic corresponds to a single machine command. Assembly language gets converted (by a program called an "assembler") into the binary machine code. The machine code is specific to each different type of machine.

Common ISAs

Some of the most common ISAs, listed in order of popularity (most popular first) are:

- ARM

- IA-32 (Intel x86)

- MIPS

- Motorola 68K

- PowerPC

- Hitachi SH

- SPARC

- RISC-V

Moore's Law

A common law that governs the world of microprocessors is Moore's Law. The observation behind it was made by Intel co-founder Gordon Moore in 1965; the name "Moore's Law" was popularized by Dr. Carver Mead at Caltech. Moore's Law states that the number of transistors on a single chip at the same price will double every 18 to 24 months. The trend has held remarkably well for decades since it was first stated in 1965, although Moore himself revised the doubling period in 1975. Current microprocessor chips contain billions of transistors, and the number is still growing. Here is Moore's summarization of the law from Electronics Magazine in 1965:

The complexity for minimum component costs has increased at a rate of roughly a factor of two per year...Certainly over the short term this rate can be expected to continue, if not to increase. Over the longer term, the rate of increase is a bit more uncertain, although there is no reason to believe it will not remain nearly constant for at least 10 years. That means by 1975, the number of components per integrated circuit for minimum cost will be 65,000. I believe that such a large circuit can be built on a single wafer.—Gordon Moore

Moore's Law has been used incorrectly to calculate the speed of an integrated circuit, or even to calculate its power consumption, but neither of these interpretations is correct. Also, Moore's law is talking about the number of transistors on a chip for a "minimum component cost", which means that the number of transistors on a chip, for the same price, will double. It follows that cheaper chips can have fewer transistors, and more expensive chips can have more. On an economic note, a consequence of Moore's Law is that companies need to continue to innovate and integrate more transistors onto a single chip, without being able to increase prices.

Moore's Law does not require that the speed of the chip increase along with the number of transistors on the chip. However, the two measurements are typically related. Some points to keep in mind about transistors and Moore's Law are:

- Smaller transistors typically switch faster than larger transistors.

- To get more transistors on a single chip, the chip needs to be made larger, or the transistors need to be made smaller. Typically, the transistors get smaller.

- Transistors tend to leak more electrical current as they get smaller. This leakage consumes power and generates heat even when the transistors are not switching, so a chip packed with many small transistors can require more power and produce more heat.

- Transistors tend to generate heat as a function of their switching frequency. Higher clock rates tend to generate more heat.

Moore's law is occasionally misinterpreted to mean that the speed of processors, in hertz, will double every 18 months. This is not strictly true, although the speed of processors does tend to increase as transistors are made smaller and more compact. With the advent of multi-core processors, some people have used Moore's law to mean that processor throughput increases with time, which is not strictly the case either (although it is a likely side effect of Moore's law).

Clock Rates

Microprocessors are typically discussed in terms of their clock speed. The clock speed is measured in hertz (or megahertz, or gigahertz). A hertz is one "cycle per second". Each cycle, a microprocessor will perform certain tasks, although the amount of work performed in a single cycle will be different for different types of processors. The amount of work that a processor can complete in a single cycle is measured in "instructions per cycle" (IPC). For some systems, such as classic pipelined MIPS designs, the ideal is 1 instruction per cycle. Other systems, such as modern x86 chips, can typically complete several instructions per cycle. The clock time (the duration of one cycle) is related to the clock rate as follows:

$$T_{\text{clock}} = \frac{1}{f_{\text{clock}}}$$

This means that the amount of time for a cycle is inversely proportional to the clock rate. A computer with a 1 MHz clock rate will have a clock time of 1 microsecond. A modern desktop computer with a 3.2 GHz processor will have a clock time of $1 / (3.2 \times 10^{9}) \approx 3.1 \times 10^{-10}$ seconds, or roughly 300 picoseconds. 300 picoseconds is an incredibly small amount of time, and there is a lot that needs to happen inside the processor in each clock cycle.

Basic Elements of a Computer

There are a few basic elements that are common to all computers. These elements are:

- CPU

- Memory

- Input Devices

- Output Devices

Depending on the particular computer architecture, these elements may be available in various sizes, and they may be accompanied by additional elements.

Computer Architecture

Von Neumann Architecture

Early on in the days of computer science, computer programs were hard-wired, only using memory to store data. Reprogramming computers involved changing hardware switches manually, taking ridiculous amounts of time and having a high potential for coding errors. As a workaround to these problems, mathematician and computer scientist John von Neumann proposed what is now known as the von Neumann architecture, which stores programs in memory, thereby avoiding the need to hard-wire them.

Microprocessor Execution

In a von Neumann architecture, a circuit called a microprocessor is used to process program instructions and execute them. To execute a program, the microprocessor first fetches a program's instructions from memory, along with the data necessary to run them. Then, the microprocessor decodes and separates the instructions and data and activates the necessary components and pathways needed to run the program. Finally, the microprocessor executes the program, running through the instructions, manipulating the data, and storing the results.

Control Units and Datapaths

This three-step process of fetching, decoding, and executing is typically implemented with two hardware components: a control unit and a datapath. If data is thought of as water, then the datapath acts as a canal with many branches, and the control unit acts as a series of locks. The control unit reads instructions fetched from memory and uses them to direct where data flows in the datapath. Along the way, different branches of the datapath will contain different mechanisms for modifying and transforming the data flowing through it. These mechanisms are termed Arithmetic Logic Units (ALUs) (discussed in more depth later on), and they perform arithmetic operations (such as addition, subtraction, shifting, and inverting) and logic operations (such as AND and OR) on data flowing through the datapath.

A more nuanced discussion of control units and datapaths will be had in a later section, conveniently titled Control and Datapath.

Harvard Architecture

In a Harvard Architecture machine, the computer system's memory is separated into two discrete parts: data and instructions. In a pure Harvard system, the two different memories occupy separate memory modules, and instructions can only be executed from the instruction memory.

Many DSPs are modified Harvard architectures, designed to simultaneously access three distinct memory areas: the program instructions, the signal data samples, and the filter coefficients (often called the P, X, and Y memories).

In theory, such three-way Harvard architectures can be three times as fast as a Von Neumann architecture that is forced to read the instruction, the data sample, and the filter coefficient, one at a time.

Modern Computers

Modern desktop computers, especially computers based on the Intel x86 ISA, are not Harvard computers, although the newer variants have features that are "Harvard-like". All information, program instructions and data alike, is stored in the same RAM areas. However, a modern feature called "paging" divides the physical memory into blocks called "pages", and each page can be given its own access permissions, such as whether its contents may be executed as instructions. This lets an operating system keep a page of memory from being treated as both writable data and executable instructions.

Many modern embedded computers, however, are based on a Harvard architecture. Instructions are stored in a different addressable memory block than the data, and in a pure Harvard design there is no way for the microprocessor to interchange data and instructions.

RISC and CISC and DSP

Historically, the first type of ISA (Instruction Set Architecture) was the complex instruction set computer (CISC), and the second type was the reduced instruction set computer (RISC). It is a common misunderstanding that RISC systems typically have a small ISA (fewer instructions) but make up for it with faster hardware. RISC systems actually have "reduced instructions", in the sense that each instruction does so little that it takes very little time to execute. It is also a common misunderstanding that CISC systems have more instructions, but typically pay a steep performance penalty for the added versatility. CISC systems actually have "complex instructions", in the sense that at least one instruction takes a long time to execute. For example, the "double indirect" addressing mode inherently requires two memory cycles to execute, and a few CPUs have a "string copy" instruction that may require hundreds of memory cycles to execute. MIPS and SPARC are examples of RISC computers. Intel x86 is an example of a CISC computer.

Some people group stack machines with the RISC machines; others [8] group stack machines with the CISC machines; some people [9], [10] describe stack machines as neither RISC nor CISC.

Other ISA types include DSPs, stack machines, VLIW machines, MISC machines, TTA architectures, massively parallel processor arrays, etc.

We will discuss these terms and concepts in more detail later.

Microprocessor Components

Some of the common components of a microprocessor are:

- Control Unit

- I/O Units

- Arithmetic Logic Unit (ALU)

- Registers

- Cache

A brief introduction to these components is placed below.

Control Unit

The control unit, as described above, reads the instructions and generates the necessary digital signals to operate the other components. An instruction to add two numbers together would cause the control unit to activate the addition module, for instance.

I/O Units

The processor needs to be able to communicate with the rest of the computer system. This communication occurs through the I/O ports. The I/O ports will interface with the system memory (RAM), and also the other peripherals of a computer.

ALU

The Arithmetic Logic Unit, or ALU, is the part of the microprocessor that performs arithmetic operations. ALUs can typically add and subtract, and many can also multiply and divide. They also perform logical operations such as AND, OR, NOR, and NOT.

The ALU will be discussed in far more detail in a later chapter, ALU.

Registers

There are different kinds of registers. Hopefully it will be obvious which kind of register we are talking about from the context.

The most general meaning is a "hardware register": anything that can be used to store bits of information, in a way that all the bits of the register can be written to or read out simultaneously. Since registers outside of a CPU are also outside the scope of the book, this book will only discuss processor registers, which are hardware registers that happen to be inside a CPU. But usually we will refer to a more specific kind of register.

Registers are discussed in far more detail in a later chapter, Register File.

programmer-visible registers

The programmer-visible registers, also called the user-accessible registers, also called the architectural registers, often simply called "the registers", are the registers that are directly encoded as part of at least one instruction in the instruction set.

The registers are the fastest accessible memory locations, and because they are so fast, there are typically very few of them. In most processors, there are 32 or fewer registers. The size of the registers defines the size of the computer. For instance, a "32-bit computer" has registers that are 32 bits long. The length of a register is known as the word length of the computer.

There are several factors limiting the number of registers, including:

- It is very convenient for a new CPU to be software-compatible with an old CPU. This generally requires the new chip to have the same programmer-visible registers as the old chip, since existing instruction encodings can name only those registers.

- Doubling the number of general-purpose registers requires adding another bit to each instruction field that selects a particular register. Each 3-operand instruction (one that specifies 2 source operands and a destination operand) would expand by 3 bits. Modern chip manufacturing processes could put a million registers on a chip; that would make each and every 3-operand instruction require 60 bits just to select the registers (20 bits per operand, since 2^20 = 1,048,576), not counting the bits required to specify what to do with those operands.

- Adding more registers adds more wires to the critical path, adding capacitance, which reduces the maximum clock speed of the CPU.

- Historically, CPUs were designed with few registers, because each additional register increased the cost of the CPU significantly. But now that modern chip manufacturing can put tens of millions of bits of storage on a single commodity CPU chip, this is less of an issue.

Microprocessors typically contain a large number of registers, but only a small number of them are accessible by the programmer. The registers that can be used by the programmer to store arbitrary data, as needed, are called general purpose registers. Registers that cannot be accessed by the programmer directly are sometimes called reserved registers.

Some computers have highly specialized registers: memory addresses always come from the program counter or "the" index register or "the" stack pointer; one ALU input is always connected to data coming from memory, and the other ALU input is always connected to "the" accumulator; and so on.

Other computers have more general-purpose registers: any instruction that accesses memory can use any address register as an index register or as a stack pointer, and any instruction that uses the ALU can use any data register.

Other computers have completely general-purpose registers: any register can be used as data or an address in any instruction, without restriction.

microarchitectural registers

Besides the programmer-visible registers, all CPUs have other registers that are not programmer-visible, called "microarchitectural registers" or "physical registers".

These registers include:

- memory address register

- memory data register

- instruction register

- microinstruction register

- microprogram counter

- pipeline registers

- extra physical registers to support register renaming

- the prefetch input queue

- writable control stores (we will discuss the control store in the Control Unit and Microcode chapters)

- Some people consider on-chip cache to be part of the microarchitectural registers; others consider it "outside" the CPU.

There is a wide variety of ways to implement any one instruction set. The vast majority of these microarchitectural registers are technically not "necessary". A designer could choose to design a CPU that had almost no physical registers other than the programmer-visible registers. However, many designers choose to design a CPU with lots of physical registers, using them in ways that make the CPU execute the same given instruction set much faster than a CPU that lacks those registers.

Cache

Most CPUs manufactured (the majority of which are small embedded microcontrollers) do not have any cache.

Cache is memory that is located on the chip, but that is not considered registers. The cache is used because reading external memory is very slow (compared to the speed of the processor), and reading a local cache is much faster. In modern processors, the cache can take up as much as 50% or more of the total area of the chip. The following table shows the relationship between different types of memory:

| | Registers | Cache | RAM |
| Size | smallest | | largest |
| Speed | fastest | | slowest |

Cache typically comes in 2 or 3 "levels", depending on the chip. Level 1 (L1) cache is smaller and faster than Level 2 (L2) cache, which is larger and slower. Some chips have Level 3 (L3) cache as well, which is larger still than the L2 cache (although L3 cache is still much faster than external RAM).

We discuss cache in far more detail in a later chapter, Cache.

Endian

Different computers order their multi-byte data words (i.e., 16-, 32-, or 64-bit words) in different ways in RAM. Each individual byte in a multi-byte word is still separately addressable. Some computers order their data with the most significant byte of a word in the lowest address, while others order their data with the most significant byte of a word in the highest address. There is logic behind both approaches, and this was formerly a topic of heated debate.

This distinction is known as endianness. Computers that store the least significant byte at the lowest address are known as "Little Endian", and computers that store the most significant byte at the lowest address are known as "Big Endian". Big Endian data is easier for a human (typically a programmer) to read when multi-byte values are dumped to a screen one byte at a time, because the bytes then appear in the same order in which the number is written. Others find it more logical to store the least significant byte at the least significant (lowest) address.

When using a computer, this distinction is typically transparent; that is, the user cannot tell the difference between computers that use the different formats. However, difficulties arise when different types of computers attempt to communicate with one another over a network.

On a big-endian machine such as the 68K, the bytes (listed in order of increasing address)

74 65 73 74 00 00 00 05

represent the string "test" followed by the 32-bit integer 5. A little-endian machine such as the x86 would interpret the last four bytes as the integer 0x0500_0000.
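The two interpretations of this byte sequence can be reproduced with Python's built-in `int.from_bytes`, which takes the byte order as an argument. This is a minimal sketch; the variable names are illustrative.

```python
# The 8 bytes from the example, in order of increasing address.
data = bytes([0x74, 0x65, 0x73, 0x74, 0x00, 0x00, 0x00, 0x05])

# Single bytes (such as ASCII characters) are not affected by endianness.
text = data[:4].decode("ascii")

# The same four bytes read as a 32-bit integer under each byte order.
as_big = int.from_bytes(data[4:], byteorder="big")        # big-endian reading
as_little = int.from_bytes(data[4:], byteorder="little")  # little-endian reading

print(text, as_big, hex(as_little))  # test 5 0x5000000
```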

When communicating over a network composed of both big-endian and little-endian machines, the network hardware should apply the Address Invariance principle, to avoid scrambling text (avoiding the NUXI problem). High-level software should format packets of data to be transmitted over the network in Network Byte Order (big-endian). High-level software should also be written to be "endian clean", always reading and writing 16-bit integers as whole 16-bit integers, 32-bit integers as whole 32-bit integers, and so on, so that no changes are needed to re-compile it for big-endian or little-endian machines. Software that is not endian clean, such as software that writes integers but then reads them back as 8-bit octets or as integers of some other length, usually fails when re-compiled for another computer.
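In Python, the standard `struct` module selects network byte order with the `!` format prefix. The sketch below uses a hypothetical message layout (a 16-bit type code followed by a 32-bit length). The `encode`/`decode` pair is endian clean: it packs and unpacks whole integers with an explicit byte order, so it produces the same bytes on any host. The last lines show the non-endian-clean pattern of reinterpreting the bytes with the host's native order (`=`), which only gives the intended value on a big-endian host.

```python
import struct
import sys

# Hypothetical message layout: 16-bit type code, then 32-bit length,
# both in network byte order (big-endian) because of the "!" prefix.
FORMAT = "!HI"

def encode(msg_type, length):
    return struct.pack(FORMAT, msg_type, length)

def decode(packet):
    return struct.unpack(FORMAT, packet)

packet = encode(1, 5)
print(packet.hex())    # 000100000005 on every host
print(decode(packet))  # (1, 5)

# Not endian clean: reading the length field in the host's native byte
# order ("=") gives 5 on a big-endian host but 0x05000000 on a little-endian one.
native_length = struct.unpack("=I", packet[2:6])[0]
print(sys.byteorder, native_length)
```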

A few computers, including nearly all DSPs, are "neither-endian". They always read and write complete aligned words, and don't have any hardware for dealing with individual bytes. Systems built on top of such computers often *do* have a particular endianness, but that endianness is written into the software and can be switched by re-compiling for the opposite endianness.

Stack

A stack is a block of memory that is used as a scratchpad area. The stack is a sequential set of memory locations that acts as a LIFO (last in, first out) buffer. Data is added to the top of the stack in a "push" operation, and the top data item is removed from the stack during a "pop" operation. Most computer architectures include at least one register that is usually reserved for the stack pointer, which holds the address of the top of the stack.
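A minimal sketch of push and pop through a stack pointer, assuming a stack that grows toward lower addresses, with the stack pointer pointing at the most recently pushed item (a "full descending" stack; other architectures make different choices). The class name and the 8-word memory size are hypothetical.

```python
class Stack:
    """Toy model: a stack in a word-addressed memory, managed by a stack pointer."""

    def __init__(self, size=8):
        self.memory = [0] * size
        self.sp = size  # empty stack: SP points just past the stack area

    def push(self, value):
        if self.sp == 0:
            raise OverflowError("stack overflow")
        self.sp -= 1                  # move SP down to a free slot...
        self.memory[self.sp] = value  # ...then store the value there

    def pop(self):
        if self.sp == len(self.memory):
            raise IndexError("stack underflow")
        value = self.memory[self.sp]  # read the top item...
        self.sp += 1                  # ...then move SP back up
        return value

s = Stack()
s.push(10)
s.push(20)
print(s.pop(), s.pop())  # 20 10: last in, first out
```

Note that popping only moves the stack pointer; the old values stay in memory until a later push overwrites them.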

Some microprocessors include a small hardware stack built into the CPU, independent from the rest of the RAM.

Some people claim that a processor must have a hardware stack in order to run C programs.[1]

Most computer architectures provide hardware support for a "call" instruction in their assembly language, in a way that allows subroutines to call other subroutines, or themselves recursively. Some architectures (such as the ARM, the Freescale RS08, etc.) implement "call" like this:

- the "call" instruction stores a return address in a link register and jumps to the subroutine. A separate instruction near the beginning of the subroutine pushes the contents of the link register onto a stack in main memory, freeing the link register so that the subroutine can then call other subroutines (or itself, recursively).

Some architectures (such as the 6502, the x86, etc.) implement "call" like this:

- the "call" instruction pushes a return address onto the stack in main memory and jumps to the subroutine.

A few architectures (such as the PIC16, the RISC I processor, the Novix NC4016, many LISP machines, etc.) implement "call" like this:

- The "call" instruction pushes a return address into a dedicated return stack, separate from main memory, and jumps to the subroutine.
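The first two approaches can be compared with a toy model. In this sketch, all names are hypothetical, instructions are assumed to be one word long, and the return address is taken to be the address of the instruction after the call (the exact address saved differs between real architectures). A subroutine called through a link register must save the link register on the memory stack before making a nested call of its own; with a stack-based call, every return address is already on the stack.

```python
class CPU:
    def __init__(self):
        self.pc = 0
        self.lr = None   # link register (ARM-style)
        self.stack = []  # stack in main memory, modelled as a Python list

    # Link-register style: the return address goes into LR.
    def call_link(self, target):
        self.lr = self.pc + 1
        self.pc = target

    def return_link(self):
        self.pc = self.lr

    # Stack style (x86-like): the return address is pushed onto the memory stack.
    def call_stack(self, target):
        self.stack.append(self.pc + 1)
        self.pc = target

    def return_stack(self):
        self.pc = self.stack.pop()

# Nested calls with a link register: main (at 100) calls A (at 200), A calls B (at 300).
cpu = CPU()
cpu.pc = 100
cpu.call_link(200)        # LR = 101
cpu.stack.append(cpu.lr)  # A saves LR; without this, the next call would overwrite it
cpu.pc = 205
cpu.call_link(300)        # LR = 206
cpu.return_link()         # B returns to 206
cpu.lr = cpu.stack.pop()  # A restores LR = 101
cpu.return_link()         # A returns to 101

# The same nesting with stack-based calls needs no explicit save.
cpu2 = CPU()
cpu2.pc = 100
cpu2.call_stack(200)      # pushes 101
cpu2.pc = 205
cpu2.call_stack(300)      # pushes 206
cpu2.return_stack()       # back to 206
cpu2.return_stack()       # back to 101

print(cpu.pc, cpu2.pc)  # 101 101
```

The link-register style saves a memory access for "leaf" subroutines that call nothing else, at the cost of an explicit save and restore in subroutines that do.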


Instruction Set Architectures

ISAs

The instruction set or the instruction set architecture (ISA) is the set of basic instructions that a processor understands. The instruction set is a portion of what makes up an architecture.

Historically, the two main philosophies of instruction set design have been reduced (RISC) and complex (CISC). The merits and claimed performance advantages of each philosophy have been thoroughly debated.

CISC

Complex Instruction Set Computer (CISC) is rooted in the history of computing. Originally there were no compilers, and programs had to be coded by hand one instruction at a time. To ease programming, more and more instructions were added. Many of these are complicated combination instructions, such as loops. In general, more complicated or specialized instructions are inefficient in hardware, and in a typical CISC architecture the best performance can be obtained by using only the simplest instructions from the ISA.

The best-known and most widely commoditized CISC ISAs are the Motorola 68k and Intel x86 architectures.

RISC

Reduced Instruction Set Computer (RISC) was developed in the late 1970s at IBM. Researchers discovered that most programs did not take advantage of all the various addressing modes that could be used with the instructions. By reducing the number of addressing modes and breaking down multi-cycle instructions into multiple single-cycle instructions, several advantages were realized:

- compilers were easier to write (and easier to optimize).

- performance increased for programs that did simple operations.

- the clock rate could be increased, since the minimum cycle time is determined by the longest-running instruction, and only simple instructions remained.

The best-known and most widely commoditized RISC ISAs are the PowerPC, ARM, MIPS and SPARC architectures.

VLIW

We will discuss VLIW processors in a later section.

Vector processors

We will discuss Vector processors in a later section.

Computational RAM

Computational RAM (C-RAM) is semiconductor random access memory with processors incorporated into the design to build an inexpensive massively-parallel computer.

Memory arrangement

Instructions are typically arranged sequentially in memory. Each instruction occupies 1 or more computer words. The Program Counter (PC) is a register inside the microprocessor that contains the address of the current instruction.[2] During the fetch cycle, the instruction at the address indicated by the program counter is read from memory into the instruction register (IR), and the program counter is advanced to the next instruction. On a byte-addressed machine whose instructions each occupy one word, this means incrementing it by n, where n is the word length of the machine in bytes.
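A minimal sketch of the fetch step, assuming a byte-addressed machine with 4-byte words and one-word instructions. The memory contents are placeholder strings rather than real instruction encodings, and the execute step is omitted.

```python
WORD_BYTES = 4  # n: word length in bytes (assumption)

# Instruction memory keyed by byte address; the "instructions" are placeholders.
memory = {0: "LOAD", 4: "ADD", 8: "STORE", 12: "HALT"}

pc = 0
fetched = []
while True:
    ir = memory[pc]   # fetch: read the instruction at PC into the IR
    pc += WORD_BYTES  # advance PC to the next sequential instruction
    fetched.append(ir)
    if ir == "HALT":  # (a real CPU would decode and execute IR here)
        break

print(fetched, pc)  # ['LOAD', 'ADD', 'STORE', 'HALT'] 16
```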

In addition to fetches of the executable instructions, many (but not all) instructions also fetch data values from memory ("load") into a data register, or write data values from a data register to memory ("store"). The address of the particular memory word accessed in such a load or store instruction is called the "effective address". In the simplest instruction sets, the effective address is always contained in some address register. Other instruction sets have more complex effective-address calculations; we will discuss such "addressing modes" later.

Common instructions

Move, Load, Store

Move instructions cause data from one register to be moved or copied to another register. Load instructions put data from an external source, such as memory, into a register. Store instructions move data from a register to an external destination.

Instructions that move (or copy) data from one place to another are the most frequently used instructions in most programs.[3]

Branch and Jump

Branching and jumping refer to loading the PC register with a new address that is not the next sequential address. In general, a "jump" or "call" occurs unconditionally, and a "branch" occurs on a given condition. In this book we will generally refer to both as branches, with a "jump" being an unconditional branch.

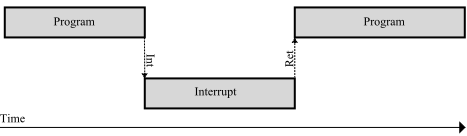

A "call" instruction is a branch instruction with the additional effect of storing the return address (the address of the instruction following the call) in a specific location, e.g. pushing it on the stack, so that execution can easily continue after the subroutine finishes. A "call" instruction is generally matched with a "return" instruction, which retrieves the stored address and resumes execution where it left off.

An "interrupt" instruction is a call to a preset location, generally one encoded somehow in the instruction itself. This is often used to reach commonly-used resources such as the operating system. Generally, a routine entered via an interrupt instruction is left via an interrupt return instruction, which, similarly to the return instruction, retrieves the stored address and resumes execution.

In many programs, "call" is the second-most-frequently used instruction (after "move").[3]

Arithmetic instructions

The Arithmetic Logic Unit (ALU) is used to perform arithmetic and logical instructions. An ALU will, at minimum, perform addition, subtraction, NOT, AND, OR, and XOR, and usually also single-bit rotates and shifts. Many CISC machine ALUs can also perform multi-bit rotates and shifts (with a barrel shifter) and integer multiplication and division. The capability of the ALU is typically greater in more advanced central processors, although RISC machines' ALUs are deliberately kept simple and so provide only some of these functions. While many modern CPUs can also perform floating-point operations, these are usually handled by the FPU, a separate part of the machine. We describe the ALU in more detail in the ALU design chapter.
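A minimal sketch of an ALU offering the minimum operation set listed above plus single-bit shifts, assuming an 8-bit data width and inputs in the range 0 to 255. The operation names are hypothetical, and only a carry flag is returned; a real ALU usually also produces zero, negative, and overflow flags, and architectures differ in how the carry is defined for subtraction.

```python
WIDTH = 8
MASK = (1 << WIDTH) - 1  # 0xFF

def alu(op, a, b=0):
    """Return (result, carry) for one 8-bit ALU operation."""
    if op == "ADD":
        total = a + b
        return total & MASK, total >> WIDTH       # carry out of bit 7
    if op == "SUB":
        diff = a - b
        return diff & MASK, 1 if diff < 0 else 0  # carry used as "borrow" here
    if op == "AND":
        return a & b, 0
    if op == "OR":
        return a | b, 0
    if op == "XOR":
        return a ^ b, 0
    if op == "NOT":
        return ~a & MASK, 0
    if op == "SHL":  # single-bit shift left; bit 7 goes to carry
        return (a << 1) & MASK, a >> (WIDTH - 1)
    if op == "SHR":  # single-bit logical shift right; bit 0 goes to carry
        return a >> 1, a & 1
    raise ValueError(f"unsupported operation: {op}")

print(alu("ADD", 0xF0, 0x20))  # (16, 1): 0x110 truncated to 0x10, carry set
```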

Input / Output

Input instructions fetch data from a specified input port, while output instructions send data to a specified output port. At the bus level there is very little distinction between input/output space and memory space: in both cases the microprocessor presents an address and then either accepts data from, or sends data to, the data bus. However, the operations available in the input/output space are typically more limited than those available in memory space.

NOP

NOP, short for "no operation", is an instruction that produces no result and causes no side effects. NOPs are useful for timing and for preventing pipeline hazards.

Instruction length

Instruction set designers balance the advantages and disadvantages of different instruction lengths in several ways.

Fixed-length instructions are less complicated for a CPU to handle than variable-length instructions for several reasons, and are therefore somewhat easier to optimize for speed. For example, a CPU with variable-length instructions has to check whether each instruction straddles a cache line or virtual memory page boundary; a CPU with fixed-length instructions can skip that check.[4]

There are simply not enough bits in a 16-bit instruction to accommodate 32 general-purpose registers and also do "Ra = Rb (op) Rc", that is, to independently select 2 source registers and 1 destination register out of a bank of 32 general-purpose registers while also independently selecting one of several ALU operations. Selecting one of 32 registers takes 5 bits, so the three register fields alone use 15 of the 16 bits, leaving only 1 bit to choose the operation.

And so people who design instruction sets must make one or more of the following compromises:

- sacrifice code density and use longer fixed-width instructions, typically 32-bit, such as the MIPS and DLX and ARM.

- sacrifice fixed-width instructions, requiring a more complicated decoder to handle both short 16-bit instructions and longer 3-operand instructions, such as ARM Thumb.

- sacrifice 3-operand instructions, using no more than 2 operands in any instruction, as in the Atmel AVR. 3-operand instructions allow better reuse of data;[4] without them, programs occasionally require extra copy instructions when both variable input operands of some ALU operation need to be preserved for later instructions.

- sacrifice registers, so only 16 or 8 programmer-visible registers.

- sacrifice the concept of general-purpose registers: perhaps only 16 or 8 "data registers" are visible to 3-operand ALU instructions, as in the 68000, or the destination is restricted to one or two "accumulators", while other registers (such as "address registers") are visible to other instructions.
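The arithmetic behind these compromises can be checked with a short calculation. This sketch assumes every register field is a plain binary register index and that all remaining bits go to the opcode; real instruction sets also need room for immediates and other fields, so the numbers are upper bounds.

```python
from math import ceil, log2

def opcode_bits_left(instr_bits, num_registers, num_operands):
    """Bits left for the opcode after the register fields are allocated."""
    reg_field = ceil(log2(num_registers))  # bits needed to select one register
    return instr_bits - num_operands * reg_field

configs = [(16, 32, 3), (16, 32, 2), (16, 16, 3), (16, 8, 3), (32, 32, 3)]
for instr_bits, regs, operands in configs:
    left = opcode_bits_left(instr_bits, regs, operands)
    print(f"{instr_bits}-bit, {regs} registers, {operands} operands: "
          f"{left} opcode bits -> {2 ** left} operations")
```

The first row shows the problem (1 bit, so only 2 operations); the following rows correspond to the compromises listed above: fewer operands, fewer registers, or a longer instruction word.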

Instruction format

Any one particular machine-language instruction for any one particular CPU can typically be divided into fields. For example, certain bits in an "ADD" instruction indicate the operation: that this is actually an "ADD" rather than an "XOR" or "subtract" instruction. Other bits indicate which register is the source, other bits indicate which register is the destination, and so on.

A few processors not only have fixed instruction widths but also have a single instruction format: a fixed set of fields that is the same for every instruction.

Many processors have fixed instruction widths but several instruction formats. The bits stored in a special fixed-location "instruction type" field (in the same place in every instruction for that CPU) indicate which of those instruction formats, that is, which particular field layout, is used by this specific instruction. For example, the MIPS processors have R-type, I-type, J-type, FR-type, and FI-type instruction formats.[5] The J1 processor has 3 instruction formats: Literal, Branch, and ALU.[6] The Microchip PIC mid-range has 4 instruction formats: byte-oriented register operations, bit-oriented register operations, 8-bit literal operations, and branch instructions with an 11-bit literal.[7]
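To illustrate how a fixed-location type field selects the layout, the sketch below splits a 32-bit MIPS instruction into fields with shifts and masks. It follows the standard MIPS R-, I-, and J-type layouts (6-bit opcode in the top bits; R-type: rs, rt, rd, shamt, funct; I-type: rs, rt, 16-bit immediate; J-type: 26-bit target). As a simplification, the FR- and FI-type coprocessor formats are ignored and every opcode other than 0, 2, and 3 is treated as I-type.

```python
def field(word, lsb, width):
    """Extract `width` bits of `word` starting at bit position `lsb`."""
    return (word >> lsb) & ((1 << width) - 1)

def decode_mips(word):
    opcode = field(word, 26, 6)  # the fixed-location "instruction type" field
    if opcode == 0:              # R-type
        return {"format": "R", "rs": field(word, 21, 5), "rt": field(word, 16, 5),
                "rd": field(word, 11, 5), "shamt": field(word, 6, 5),
                "funct": field(word, 0, 6)}
    if opcode in (2, 3):         # J-type (j, jal)
        return {"format": "J", "opcode": opcode, "target": field(word, 0, 26)}
    # Simplification: everything else is treated as I-type.
    return {"format": "I", "opcode": opcode, "rs": field(word, 21, 5),
            "rt": field(word, 16, 5), "imm": field(word, 0, 16)}

# add $t0, $t1, $t2 is encoded as 0x012A4020:
# rs = 9 ($t1), rt = 10 ($t2), rd = 8 ($t0), funct = 0x20
print(decode_mips(0x012A4020))
```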

Occasionally a new CPU has different instruction formats from some other CPU, making it "not binary-compatible" with that other CPU. However, such a new CPU can sometimes be designed to have "source code backward-compatibility" with the other CPU: it is "assembly-language compatible but not binary-compatible" with programs written for that other CPU. (For example, the 8080 was source-compatible but not binary-compatible with the 8008, and the 8086 was source-compatible but not binary-compatible with programs written for the 8085, the 8080, and the 8008 [citation needed].)

References

- [1] Walter Banks. "The Stack Controversy". 2009.

- [2] Practically all modern CPUs maintain the illusion of a program counter sequentially walking through code one instruction at a time. However, a few complex modern CPUs internally execute several instructions simultaneously (superscalar), or execute instructions out of order, or even speculatively pre-execute instructions down the "wrong" path, then back up and take the right path. When designing and testing such internal structures, the concept of "the" PC is a bit fuzzy. Some processor architectures, for instance the CDP1802, do not have a single Program Counter; instead, one of the general-purpose registers is used as a program counter, and which register that is can be changed under program control.

- [3] Peter Kankowski. "x86 Machine Code Statistics".

- [4] John Cocke. "The evolution of RISC technology at IBM". IBM Journal of Research and Development, Volume 44, Numbers 1/2, p. 48 (2000).

- [5] MIPS Assembly/Instruction Formats.

- [6] Victor Yurkovsky. "HOMEBREW CPUS: MESSING AROUND WITH A J1".

- [7] "PICmicro MID-RANGE MCU FAMILY: Section 29. Instruction Set". Section 29.2: Instruction Formats.

Memory

Memory is a fundamental aspect of microprocessor design, and a good understanding of memory is necessary to discuss a processor system.

Memory Hierarchy

Memory suffers from a trade-off: it can be large or it can be fast, but not both. As memory becomes larger, it becomes slower, and vice versa. Because of this trade-off, computer systems typically have a hierarchy of memory types, where faster (and smaller) memories are closer to the processor, and slower (but larger) memories are further from the processor.

Hard Disk Drives

Hard disk drives (HDD) and solid-state drives (SSD) are sometimes known as secondary memory or nonvolatile memory. An HDD typically stores data magnetically (although some newer models use flash), and data is maintained even when the computer is turned off or removed from power. HDDs are several orders of magnitude slower than all other memory devices, so a computer system is more efficient when the number of interactions with the HDD is minimized.

Because most HDDs are mechanical and have moving parts, they tend to wear out and fail over time.

RAM

Random Access Memory (RAM), also known as main memory, is volatile storage that holds data for the processor. Unlike HDD storage, RAM typically has a capacity of only a few gigabytes. There are two primary forms of RAM, and many variants of these.

SRAM

Static RAM (SRAM) is a type of memory that typically uses 6 transistors to store each bit. These transistors hold their data as long as power is supplied to the RAM, and do not need to be refreshed.

SRAM is typically used in processor caches because of its faster speed, but not in main memory because it takes up more space.

DRAM

Dynamic RAM (DRAM) is a type of RAM that stores each bit using a single transistor and a capacitor. A DRAM cell is smaller than an SRAM cell, so DRAM can store more data in a smaller area. Because the capacitor slowly leaks its charge, DRAM must be periodically refreshed, and because of the charge and discharge times of the capacitor, DRAM tends to be slower than SRAM. Many modern types of main memory are based on DRAM because of its high memory density. Because DRAM is simpler than SRAM, it is typically cheaper to produce.

A popular type of RAM, SDRAM (synchronous DRAM), is a variant of DRAM and is not related to SRAM.

As digital circuits continue to grow smaller and faster according to Moore's Law, the speed of DRAM is not increasing as rapidly. As a result, the speed gap between the processor and the RAM (as long as the RAM is based on DRAM or its variants) will continue to grow, and communication between the two becomes an increasing bottleneck.

Other RAM

Cache

Cache is memory that is smaller and faster than main memory and resides closer to the processor. RAM runs on the system bus clock, but cache typically runs at the processor clock speed, which can be 10 times faster or more. Cache is frequently divided into multiple levels, L1, L2, and L3, with L1 being the smallest and fastest, and L3 the largest and slowest.

Registers

Registers are the smallest and fastest memory storage elements. A modern processor may have anywhere from 4 to 256 registers. We will discuss registers in much more detail in a later chapter, Register File.

Control and Datapath

Most processors and other complicated hardware circuits are divided into two components: a datapath and a control unit. The datapath contains all the hardware needed to perform the necessary operations. In many cases, these hardware modules operate in parallel with one another, and the final result is selected by multiplexing the partial results.

The control unit determines the operation of the datapath, by activating switches and passing control signals to the various multiplexers. In this way, the control unit can specify how the data flows through the datapath.
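The datapath/control split can be sketched in a few lines: every functional unit computes its result, and a select value supplied by the control unit steers the multiplexer that picks the final result. The unit list, the select encoding, and the 8-bit width are hypothetical; in hardware the units operate simultaneously, while this model simply evaluates each one.

```python
MASK = 0xFF  # 8-bit datapath (assumption)

def datapath(a, b, select):
    # Every unit produces a partial result (in parallel, in hardware).
    partial = [
        (a + b) & MASK,  # select 0: adder
        (a - b) & MASK,  # select 1: subtractor
        a & b,           # select 2: AND unit
        a | b,           # select 3: OR unit
    ]
    return partial[select]  # the multiplexer, driven by the control unit

print(datapath(12, 5, select=0), datapath(12, 5, select=1))  # 17 7
```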

The width of the data path ...

There is only one mistake that can be made in a computer design that is difficult to recover from: not providing enough address bits for memory addressing and memory management.—Gordon Bell and Bill Strecker, 1975[1]

Almost any shortcoming in a computer architecture can be overcome except too small an address space; this is the reason that DEC was finally forced to design the VAX ("virtual address extension") as a replacement for the PDP-11.—Hollingsworth, Sachs, and Smith. 1987.[2]

For good code density, you want the ALU datapath width to be at least as wide as the address bus width. Then every time you need to increment an address, you can do it in a single instruction, rather than requiring multiple instructions to manipulate an address one piece at a time.[3][4]
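To see why, consider incrementing a 16-bit address on a machine whose ALU is only 8 bits wide. In this sketch, the address must be handled as two bytes, with the carry out of the low byte added into the high byte, so the increment takes two ALU operations instead of one. Counting one step per ALU operation is an illustrative simplification; real 8-bit code may also need a conditional branch or an add-with-carry instruction.

```python
def inc16_with_8bit_alu(addr):
    """Increment a 16-bit address one byte at a time; return (new_addr, alu_ops)."""
    low, high = addr & 0xFF, addr >> 8
    total = low + 1                        # ALU op 1: increment the low byte
    low, carry = total & 0xFF, total >> 8
    total = high + carry                   # ALU op 2: add the carry into the high byte
    high = total & 0xFF
    return (high << 8) | low, 2

def inc16_with_16bit_alu(addr):
    return (addr + 1) & 0xFFFF, 1          # a single ALU op

print(inc16_with_8bit_alu(0x12FF), inc16_with_16bit_alu(0x12FF))  # (4864, 2) (4864, 1)
```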

After designing the datapath, the designer identifies all of its control signal inputs: all the control signals that are needed to specify how data flows through that datapath.

- Each general-purpose register needs at least one control signal to control whether it maintains the current value or loads a new value from elsewhere.

- The ALU needs some control signals to tell it whether to add, subtract, etc.

- The program counter section needs control signals to tell it whether the program counter gets reloaded with an incremented version of the previous value, or with some completely different branch value.

- etc.

Once we know what control signals we need to generate, we need to design an instruction decoder to generate those signals.
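One simple way to think about an instruction decoder is as a table that maps each opcode to a complete set of control-signal values. The opcodes and signal names below are hypothetical and mirror the bullet list above (a register write enable, the ALU operation, and the source of the next PC value); a hardware decoder implements the same mapping in logic.

```python
from typing import NamedTuple

class Control(NamedTuple):
    reg_write: bool  # does the destination register load a new value?
    alu_op: str      # which operation the ALU performs
    pc_src: str      # "next" = incremented PC, "branch" = branch target

# Hypothetical opcodes for a toy machine.
DECODE_TABLE = {
    "ADD": Control(reg_write=True, alu_op="add", pc_src="next"),
    "SUB": Control(reg_write=True, alu_op="sub", pc_src="next"),
    "JMP": Control(reg_write=False, alu_op="add", pc_src="branch"),  # ALU result unused
    "NOP": Control(reg_write=False, alu_op="add", pc_src="next"),    # ALU result unused
}

def decode(opcode):
    return DECODE_TABLE[opcode]

print(decode("JMP"))
```

Note that every signal gets a value for every opcode, even when it does not matter (a "don't care"); this is what makes the table complete.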

References

- [1] Engineering Education, "Today in History": PDP-11 minicomputer introduced by Gordon Bell, 2009; referring to "What we learned from the PDP-11" by Gordon Bell and Bill Strecker, 1975. Early versions of the PDP-11 had a 16-bit address space. ... Also quoted in the book "Electronics".

- [2] Hollingsworth, Sachs, and Smith. "The Fairchild Clipper: Instruction set architecture and processor implementation". Section 9.4: "Address Space Size". 1987.

- [3] Miro Samek. "Insects of the computer world". 2009: "It seems that the 16-bit ISA hits somehow the "sweet spot" for the best code density, perhaps because the addresses are also 16-bit wide and are handled in a single instruction. In contrast, 8-bitters need multiple instructions to handle 16-bit addresses."

- [4] Allen. "Opcode considerations": "it just really sucks if the largest datum you can manipulate is smaller than your address size. This means that the accumulator needs to be the same size as the PC -- 16-bits."

Performance


Clock Cycles

The clock signal is a 1-bit signal that oscillates between a "1" and a "0" with a certain frequency. When the clock transitions from a "0" to a "1" it is called the positive edge, and when the clock transitions from a "1" to a "0" it is called the negative edge.

The time it takes to go from one positive edge to the next positive edge is known as the clock period, and represents one clock cycle.

The number of clock cycles that can fit in 1 second is called the clock frequency. To get the clock frequency f from the clock period T, we can use the following formula:

$$f = \frac{1}{T}$$

Clock frequency is measured in units of cycles per second.

Cycles per Instruction

In many microprocessor designs, it is common for multiple clock cycles to transpire while performing a single instruction. For this reason, it is frequently useful to keep a count of how many cycles are required to perform a single instruction. This number is known as the cycles per instruction, or CPI of the processor.

Because different processors may operate with different CPIs, it is not possible to accurately compare processors simply by comparing their clock frequencies. It is more useful to compare the number of instructions per second (IPS), which can be calculated from the clock frequency f as follows:

$$\text{IPS} = \frac{f}{\text{CPI}}$$

One of the most common units of measure in modern processors is the "MIPS", which stands for millions of instructions per second. A processor with 5 MIPS can perform 5 million instructions every second. Another common metric is "FLOPS", which stands for floating point operations per second. MFLOPS is a million FLOPS, GFLOPS is a billion FLOPS, and TFLOPS is a trillion FLOPS.
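A worked example with made-up numbers, using the relation that instructions per second equal the clock frequency divided by the CPI: a 100 MHz processor averaging 4 cycles per instruction delivers 25 MIPS, while a 50 MHz processor averaging 1.25 cycles per instruction delivers 40 MIPS despite its lower clock.

```python
def mips(clock_hz, cpi):
    """Millions of instructions per second: clock frequency / CPI / 10**6."""
    return clock_hz / cpi / 1e6

print(mips(100e6, 4))    # 25.0
print(mips(50e6, 1.25))  # 40.0
```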

Instruction count

The "instruction count" in microprocessor performance measurement is the number of instructions executed during the run of a program. Typical benchmark programs have instruction counts in the millions or billions -- even though the program itself may be very short, those benchmarks have inner loops that are repeated millions of times.

Some microprocessor designers have the freedom to add instructions to or remove instructions from the instruction set. Typically the only way to reduce the instruction count is to add instructions such that those inner loops can be re-written in a way that does the necessary work using fewer instructions -- those instructions do "more work" per instruction.

Sometimes, counter-intuitively, we can improve overall CPU performance (i.e., reduce CPU time) in a way that increases the instruction count, by using instructions in that inner loop that may do "less work" per instruction, but those instructions finish in less time.

CPU Time

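CPU time is the amount of time it takes the CPU to complete a particular program. It depends on three components: the number of instructions executed (the instruction count, IC), the average number of cycles each instruction takes (CPI), and the length of each clock cycle (the clock period T):

$$\text{CPU time} = \text{IC} \times \text{CPI} \times T = \frac{\text{IC} \times \text{CPI}}{f}$$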

Sometimes we can improve one of the 3 components alone, reducing CPU time. But quite often we find a tradeoff -- say, a technique that increases instruction count, but reduces the clock cycle time -- and we have to measure the total CPU time to see if that technique makes the overall performance better or worse.
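Such a trade-off can be checked numerically, since CPU time is the product of instruction count, CPI, and clock cycle time. With made-up numbers, a technique that adds 10% more instructions but shortens the clock cycle by 20% (with CPI unchanged) still reduces CPU time:

```python
def cpu_time(instruction_count, cpi, cycle_time_s):
    """CPU time = instruction count x CPI x clock cycle time."""
    return instruction_count * cpi * cycle_time_s

baseline = cpu_time(1_000_000_000, 2.0, 1.0e-9)  # about 2.0 s
modified = cpu_time(1_100_000_000, 2.0, 0.8e-9)  # about 1.76 s
print(f"{baseline:.2f} s -> {modified:.2f} s, speedup {baseline / modified:.3f}")
```

If the same technique also raised the CPI from 2.0 to 2.5, the result would be about 2.2 s, worse than the baseline; this is why the total must be measured.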

Performance

Amdahl's Law

Amdahl's Law is a law concerned with computer performance and optimization. It states that the overall speedup gained by improving a single processor component is limited by the fraction of the work that actually uses that component, so speeding up one component often has a comparatively small effect on the performance of the processor as a whole.

In the most general sense, Amdahl's Law can be stated mathematically as follows:

$$\Delta = \frac{1}{\sum_{k=0}^{n} \frac{P_k}{S_k}}$$

where:

- Δ is the factor by which the program is sped up or slowed down,

- Pk is a percentage of the instructions that can be improved (or slowed),

- Sk is the speed-up multiplier (where 1 is no speed-up and no slowing),

- k represents a label for each different percentage and speed-up, and

- n is the number of different speed-up/slow-downs resulting from the system change.

For instance, if we make a speed improvement in the memory module, only the instructions that deal directly with the memory module will experience a speedup. In this case, the fraction of load and store instructions in our program is P0, and the factor by which those instructions are sped up is S0. All other instructions, which are not affected by the memory unit, make up the fraction P1 with speed-up S1, where:

$$P_1 = 1 - P_0, \qquad S_1 = 1, \qquad \Delta = \frac{1}{\frac{P_0}{S_0} + \frac{1 - P_0}{1}}$$

We set S1 to 1 because those instructions are not sped up or slowed down by the change to the memory unit.
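A direct translation of Amdahl's Law, Δ = 1 / Σ(P_k / S_k), with made-up numbers: if loads and stores make up 30% of the instructions and the memory improvement makes them twice as fast, the whole program speeds up by only about 1.18 times. Even an infinitely fast memory unit could not do better than 1/0.7, about 1.43 times.

```python
def amdahl(fractions, speedups):
    """Overall speedup: 1 / sum(P_k / S_k). The fractions P_k must sum to 1."""
    if abs(sum(fractions) - 1.0) > 1e-9:
        raise ValueError("fractions must sum to 1")
    return 1.0 / sum(p / s for p, s in zip(fractions, speedups))

# P0 = 0.3 (loads/stores, S0 = 2); P1 = 1 - P0 = 0.7 (unaffected, S1 = 1)
print(round(amdahl([0.3, 0.7], [2.0, 1.0]), 3))  # 1.176
```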

Benchmarking

- SPECint

- SPECfp

- "Maxim/Dallas APPLICATION NOTE 3593" benchmarking

- "Mod51 Benchmarks"

- EEMBC, the Embedded Microprocessor Benchmark Consortium

Assembly Language

Assemblers

Assemblers take in human-readable assembly code and produce machine code.

Assembly Language Constructs

There are a number of different assembly languages in existence, but all of them have a few things in common. They all map directly to the underlying hardware CPU instruction sets.

- CPU instruction set: the set of binary codes (instructions) that the CPU understands. Depending on the CPU, an instruction can be one byte, two bytes, or longer. The instruction code is usually followed by one or two operands:

| Instruction Code | operand 1 | operand 2 |

How many instructions there are depends on the CPU.

Because binary code is difficult to remember, each instruction is given a short name called a mnemonic. For example, 'MOV' can be used for move instructions.

MOV A, 0x0020

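In this example syntax, the instruction moves the value of register A to the specified address. Operand order differs between assemblers, however: in many assembly languages (including Intel-style x86 syntax) the destination is written first, so a similar-looking line would instead load register A.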

A simple assembler will translate the 'MOV A' to its CPU's instruction code.
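At its core, a simple assembler is a lookup from mnemonics to instruction codes plus encoding of the operands. The sketch below targets a hypothetical CPU whose opcode values are invented for illustration; it follows the "instruction code, then operand" layout shown above and emits the 16-bit address operand low byte first, which is also an assumption.

```python
# Hypothetical opcode table: mnemonic -> (opcode byte, number of operand bytes)
OPCODES = {
    "NOP": (0x00, 0),
    "INC": (0x01, 0),  # INC A (register A is implied)
    "MOV": (0x10, 2),  # MOV A, addr: store A to a 16-bit address
}

def assemble_line(line):
    """Translate one line such as 'MOV A, 0x0020' into machine-code bytes."""
    mnemonic, _, operands = line.strip().partition(" ")
    opcode, operand_size = OPCODES[mnemonic.upper()]
    out = bytearray([opcode])
    if operand_size:
        # The last comma-separated operand is the address; base 0 accepts 0x.. literals.
        value = int(operands.split(",")[-1].strip(), 0)
        out += value.to_bytes(operand_size, "little")
    return bytes(out)

program = ["MOV A, 0x0020", "INC A", "NOP"]
print(b"".join(assemble_line(line) for line in program).hex())  # 1020000100
```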

Assembly languages cannot be assumed to be directly portable to other CPUs. Each CPU has its own assembly language, though CPUs within the same family may support limited portability.

Load and Store

These instructions tell the CPU to move data from memory into one of the CPU's registers, or to move data from one of the CPU's registers to memory.

- register: a small, special memory located inside the CPU, on which arithmetic operations can be performed.

Arithmetic

Arithmetic operations can be performed using the CPU's registers:

- Increment the value of one of the CPU's registers

- Decrement the value of one of the CPU's registers

- Add a value to the register

- Subtract value from the register

- Multiply the register value

- Divide the register value

- Shift the register value

- Rotate the register value

Jumping

During a jump instruction, the program counter is loaded with a new address that is not necessarily the address of the next sequential instruction. After a jump, the program execution continues from the new location in memory.

- Relative jump: the instruction's operand tells how many bytes the program counter should be increased or decreased by.

- Absolute jump: the instruction's operand is copied to the program counter; the operand is an absolute memory address where execution should continue.
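A sketch of how the new program counter is computed in each case. It assumes a 16-bit address space, a 2-byte jump instruction, and a relative offset measured from the address of the instruction that follows the jump; that reference point is a common convention, but architectures differ.

```python
ADDR_MASK = 0xFFFF  # 16-bit address space (assumption)
INSTR_LEN = 2       # length of the jump instruction in bytes (assumption)

def relative_jump(pc, offset):
    """offset is a signed byte count, relative to the next instruction."""
    return (pc + INSTR_LEN + offset) & ADDR_MASK

def absolute_jump(pc, target):
    """The operand is copied into the PC; the old PC value does not matter."""
    return target & ADDR_MASK

print(hex(relative_jump(0x0100, -4)), hex(absolute_jump(0x0100, 0x2000)))  # 0xfe 0x2000
```

Because a relative target depends only on the distance between the jump and its destination, code that uses relative jumps can be moved to a different address without changing the jump instructions.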

Branching

During a branch, the program counter is loaded with one of several possible new values, depending on some specified condition. A branch that chooses among many destinations can be built from a series of conditional jumps.

Some CPUs have skip instructions: if a register is zero, the following instruction is skipped; if not, the following instruction, which can be a jump instruction, is executed. Branching can therefore be done by using skip and jump instructions together.
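The skip-then-jump idiom can be simulated as follows. The four-instruction program and the mnemonics `SKIPZ` (skip the next instruction if the register is zero), `JUMP`, and `OTHER` (any ordinary instruction) are hypothetical stand-ins for whatever a particular CPU provides. The pair at indices 0 and 1 acts as "branch to index 3 if the register is not zero".

```python
def run(program, reg):
    """Execute a tiny program; return the list of instruction indices executed."""
    pc, trace = 0, []
    while pc < len(program):
        trace.append(pc)
        op, arg = program[pc]
        if op == "SKIPZ":
            pc += 2 if reg == 0 else 1  # skip the next instruction when reg == 0
        elif op == "JUMP":
            pc = arg
        else:                           # "OTHER": an ordinary instruction
            pc += 1
    return trace

program = [("SKIPZ", None), ("JUMP", 3), ("OTHER", None), ("OTHER", None)]
print(run(program, reg=0))  # [0, 2, 3]: jump skipped, fall through
print(run(program, reg=7))  # [0, 1, 3]: jump taken
```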


Design Steps

When designing a new microprocessor or microcontroller unit, there are a few general steps that can be followed to make the process flow more logically. These few steps can be further sub-divided into smaller tasks that can be tackled more easily. The general steps to designing a new microprocessor are:

- Determine the capabilities the new processor should have.

- Lay out the datapath to handle the necessary capabilities.

- Define the machine code instruction format (ISA).

- Construct the necessary logic to control the datapath.

We will discuss each of these steps below:

Determine Machine Capabilities

Before you start to design a new processor element, it is important to first ask why you are designing it at all. What new thing will your processor do that existing processors cannot? Keep in mind that it is almost always less expensive to use an existing chip than to design and manufacture a new one.

Some questions to start:

- Is this chip an embedded chip, a general-purpose chip, or a different type entirely?

- What, if any, are the limitations in terms of resources, price, power, or speed?

With that in mind, we need to ask what our chip will do:

- Does it have integer, floating-point, or fixed point arithmetic, or a combination of all three?

- Does it have scalar or vector operation abilities?

- Is it self-contained, or must it interface with a number of external peripherals?

- Will it support interrupts? If so, how much interrupt latency is tolerable? How much interrupt-response jitter is tolerable?

We also need to ask ourselves whether the machine will support a wide array of instructions, or if it will have a limited set of instructions. More instructions make the design more difficult, but make programming and using the chip easier. On the other hand, having fewer instructions is easier to design, but can be harder and more costly to program.

Lay out the basic arithmetic operations you want your chip to have:

- Addition/Subtraction

- Multiplication

- Division

- Shifting and Rotating

- Logical Operations: AND, OR, XOR, NOR, NOT, etc.

List other capabilities that your machine has:

- Unconditional jumps

- Conditional Jumps (and what conditions?)

- Stack operations (Push, pop)

Once we know what our chip is supposed to do, it is easier to lay out the framework for our datapath.

Design the Datapath

First, we need to determine which ALU architecture our processor will use:

- Accumulator

- Stack

- Register

- A combination of the above 3

This decision, more than any other, is going to have the largest effect on your final design. Do not proceed in the design process until you have made this decision. Once you have your ALU architecture, you create your memory element (stack or register file), and you can lay out your ALU.
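To make the three styles concrete, the sketch below evaluates the same expression, (a + b) * c, on three toy machines. The instruction names and operand conventions are hypothetical, and the register machine starts with its operands already loaded. The difference lies in where operands come from: implicitly from the accumulator, implicitly from the top of the stack, or from explicitly named registers.

```python
mem = {"a": 2, "b": 3, "c": 4}

def run_accumulator(program):
    acc = 0  # one implicit operand and destination
    for op, addr in program:
        if op == "LOAD":
            acc = mem[addr]
        elif op == "ADD":
            acc += mem[addr]
        elif op == "MUL":
            acc *= mem[addr]
    return acc

def run_stack(program):
    stack = []  # operands are implicit: the top of the stack
    for op, *args in program:
        if op == "PUSH":
            stack.append(mem[args[0]])
        else:  # ADD / MUL pop two operands and push the result
            y, x = stack.pop(), stack.pop()
            stack.append(x + y if op == "ADD" else x * y)
    return stack.pop()

def run_register(program):
    regs = {"r1": mem["a"], "r2": mem["b"], "r3": mem["c"], "r4": 0}  # preloaded
    for op, dst, src1, src2 in program:  # every operand is named explicitly
        regs[dst] = regs[src1] + regs[src2] if op == "ADD" else regs[src1] * regs[src2]
    return regs["r4"]

acc_prog = [("LOAD", "a"), ("ADD", "b"), ("MUL", "c")]
stack_prog = [("PUSH", "a"), ("PUSH", "b"), ("ADD",), ("PUSH", "c"), ("MUL",)]
reg_prog = [("ADD", "r4", "r1", "r2"), ("MUL", "r4", "r4", "r3")]
print(run_accumulator(acc_prog), run_stack(stack_prog), run_register(reg_prog))  # 20 20 20
```

The stack program needs the most instructions but the fewest operand bits per instruction, while the register program needs the fewest instructions but must encode three register fields in each one.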

Create ISA

Once we have our basic datapath, we can start to design our ISA. There are a few things that we need to consider:

- Is this processor RISC, CISC, or VLIW?

- How long is a machine word?

- How do you deal with immediate values? What kinds of instructions can accept immediate values?

Once the basics of our machine code are settled, we usually need to decide how well our processor will support higher-level languages. In particular, are there any instructions that can be used for function call and return?

Determining the length of the instruction word in a RISC is a very important matter, and one that is worth a considerable amount of thought. For additional flexibility you can instead use a variable-length instruction set, as most CISC machines do, at the expense of additional and more complicated instruction decode logic. If the instruction word is too long, programmers will be able to fit fewer instructions into memory. If the instruction word is too short, there will not be enough room for all the necessary information. On a desktop PC with several megabytes or even gigabytes of RAM, large instruction words are not a big problem. On an embedded system with limited program ROM, however, the length of the instruction word has a direct effect on the size of the programs that can fit, and therefore on the usefulness of the chip.
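As a rough illustration of the embedded-system trade-off, the sketch below computes how many fixed-length instructions fit into a program ROM. The 8 KiB ROM size and the three word lengths are arbitrary example values, not figures from any specific chip, and the function name is hypothetical.

```python
def instructions_that_fit(rom_bytes: int, instr_bits: int) -> int:
    """Number of fixed-length instructions that fit in a program ROM."""
    if instr_bits % 8 != 0:
        raise ValueError("this sketch assumes byte-multiple instruction widths")
    return rom_bytes // (instr_bits // 8)

ROM_BYTES = 8 * 1024  # hypothetical 8 KiB program ROM
for width in (16, 24, 32):
    print(f"{width}-bit instructions: {instructions_that_fit(ROM_BYTES, width)}")
```

Halving the instruction width doubles how many instructions the same ROM holds, but only helps if the shorter instructions can still express the program without needing proportionally more of them.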

Each instruction should have an associated opcode, and typically the length of the opcode field should be constant for all instructions, to reduce the complexity of the decoder. The length of the opcode field directly limits the number of distinct instructions that can be implemented. If the opcode field is too small, you won't have enough room to designate all your instructions. If your opcode is too large, you will be wasting precious bits in your instruction word.
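A fixed opcode field of n bits can name at most 2^n distinct instructions, so the minimum width for a given instruction count is the ceiling of log2 of that count. The sketch below computes it with integer arithmetic; the instruction counts are arbitrary examples and the function name is hypothetical.

```python
def opcode_bits_needed(num_instructions: int) -> int:
    """Smallest opcode width n with 2**n >= num_instructions."""
    if num_instructions < 1:
        raise ValueError("need at least one instruction")
    # (k - 1).bit_length() equals ceil(log2(k)) for k >= 2, computed exactly.
    return max(1, (num_instructions - 1).bit_length())

for count in (8, 40, 64, 65):
    bits = opcode_bits_needed(count)
    print(count, "instructions ->", bits, "opcode bits,", 2**bits - count, "unused codes")
```

The jump from 64 to 65 instructions shows the cost of a slightly-too-small field: one extra instruction forces a seventh opcode bit and leaves 63 codes unused.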

Some instructions will need to be larger than others. For instance, instructions that deal with an immediate value, a memory location, or a jump address are typically larger than instructions that only deal with registers. Instructions that deal only with registers, therefore, will have additional space left over that can be used as an extension to the opcode field.

Example: MIPS R-Type

In the MIPS architecture, instructions that only deal with registers are called R-type instructions. With 32 registers, a register address is only 5 bits wide. The MIPS opcode is 6 bits wide. With the opcode and the three register addresses (two source registers and one destination register), an R-type instruction uses only 21 of the 32 bits available.

The remaining 11 bits are split into two additional fields: Shamt, a 5-bit immediate value that controls the number of places shifted by a shift or rotate instruction, and Func, a 6-bit field that contains additional information about R-type instructions. Because the Func field is available, all R-type instructions share an opcode of 0.
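The field layout can be checked with a short Python sketch that packs and unpacks an R-type word. The field names rs, rt, and rd (first source, second source, destination) follow the usual MIPS naming; the helper functions are hypothetical. The example encodes `add $t0, $t1, $t2`, where $t0, $t1, and $t2 are registers 8, 9, and 10 and the Func code for ADD is 0x20.

```python
# MIPS R-type layout (bit 31 on the left):
#   opcode(6) | rs(5) | rt(5) | rd(5) | shamt(5) | funct(6)

def encode_r_type(rs, rt, rd, shamt, funct, opcode=0):
    """Pack R-type fields into a 32-bit instruction word."""
    for value, width in ((opcode, 6), (rs, 5), (rt, 5), (rd, 5), (shamt, 5), (funct, 6)):
        if not 0 <= value < (1 << width):
            raise ValueError(f"field value {value} does not fit in {width} bits")
    return (opcode << 26) | (rs << 21) | (rt << 16) | (rd << 11) | (shamt << 6) | funct

def decode_r_type(word):
    """Split a 32-bit R-type word back into its six fields."""
    return {
        "opcode": (word >> 26) & 0x3F,
        "rs": (word >> 21) & 0x1F,
        "rt": (word >> 16) & 0x1F,
        "rd": (word >> 11) & 0x1F,
        "shamt": (word >> 6) & 0x1F,
        "funct": word & 0x3F,
    }

# add $t0, $t1, $t2  ->  rd = 8, rs = 9, rt = 10, funct = 0x20
word = encode_r_type(rs=9, rt=10, rd=8, shamt=0, funct=0x20)
print(hex(word))  # 0x12a4020
print(decode_r_type(word))
```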

Instruction set design

Picking a particular set of instructions is often more an art than a science.

Historically there have been different perspectives on what makes a "good" instruction set.

- The early CISC years focused on making instruction sets that expert assembly language programmers enjoyed programming; "code density" was a common metric.

- The early RISC years focused on making instruction sets that ran a few C benchmark programs, compiled with relatively primitive compilers, really, really fast. "Cycles per instruction", and later "instructions per cycle", were recognized as important factors in achieving a low "time to run the benchmark".

- The rise of multitasking operating systems (and shared-memory parallel processors) led to the discovery of non-blocking synchronization and the instructions necessary to support it.

- CPUs dedicated to a single application (ASICs or FPGAs) led to the idea of customizing the CPU for one particular application.[1]

- The rise of viruses and other malware led to the recognition of the Popek and Goldberg virtualization requirements.

Build Control Logic

Once we have our datapath and our ISA, we can start to construct the logic of our primary control unit. This unit is typically implemented as a finite state machine, and we can try to map the ISA onto it in a logical way.

We go into much more detail on control unit design in the following sections, Microprocessor Design/Control and Datapath and Microprocessor Design/Instruction Decoder.

Design the Address Path

If a simple address path, in which virtual addresses are identical to physical addresses, is adequate for your CPU, you can skip this section.

Most processors have a very simple address path -- address bits come from the PC or some other programmer-visible register, or directly from some instruction, and they are directly applied to the address bus.

Many general-purpose processors have a more complex address path: user-level programs run as if they have a simple address path, but the physical address applied to the address bus is significantly different from the programmer-visible address. This enables virtual memory, memory protection, and other desirable features.
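One common way to produce a physical address that differs from the programmer-visible one is paging. The sketch below assumes, purely for illustration, 4 KiB pages and a single-level page table held in a Python dictionary that maps virtual page numbers to physical frame numbers. Real MMUs add multi-level tables, TLBs, and permission bits, all omitted here; the names `translate` and `PageFault` are hypothetical.

```python
PAGE_SIZE = 4096                          # assumed 4 KiB pages
OFFSET_BITS = PAGE_SIZE.bit_length() - 1  # 12 offset bits

class PageFault(Exception):
    """Raised when a virtual page has no mapping."""

def translate(vaddr: int, page_table: dict) -> int:
    """Map a virtual address to a physical address through a page table."""
    vpn = vaddr >> OFFSET_BITS            # virtual page number
    offset = vaddr & (PAGE_SIZE - 1)      # byte within the page, passed through unchanged
    if vpn not in page_table:
        raise PageFault(hex(vaddr))
    return (page_table[vpn] << OFFSET_BITS) | offset

# Hypothetical mapping: virtual page 0 -> frame 5, virtual page 1 -> frame 2
table = {0: 5, 1: 2}
print(hex(translate(0x0123, table)))  # 0x5123
print(hex(translate(0x1ABC, table)))  # 0x2abc
```

Because the program only ever sees virtual addresses, the operating system can place its pages anywhere in physical memory, and an access to an unmapped page traps instead of touching memory the program does not own.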

We talk more about the benefits and drawbacks of an MMU, and how to implement it, in

Verify the design

People who design a CPU often spend more time on functional verification than all other steps combined.
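A common verification technique is to compare a detailed model of a unit against a simple reference ("golden") model on corner cases plus many random inputs. As a minimal sketch of the idea only, the code below checks a bit-level ripple-carry adder, standing in for a hypothetical design under test, against ordinary integer addition. Real CPU verification relies on HDL simulators, directed tests, and formal tools, none of which appear here.

```python
import random

WIDTH = 32
MASK = (1 << WIDTH) - 1

def ripple_carry_add(a: int, b: int, width: int = WIDTH) -> int:
    """Bit-level model of a ripple-carry adder (the design under test)."""
    result, carry = 0, 0
    for i in range(width):
        x, y = (a >> i) & 1, (b >> i) & 1
        s = x ^ y ^ carry                             # full-adder sum bit
        carry = (x & y) | (x & carry) | (y & carry)   # full-adder carry out
        result |= s << i
    return result  # the final carry out is dropped, so results wrap modulo 2**width

def reference_add(a: int, b: int) -> int:
    """Golden model: integer addition truncated to WIDTH bits."""
    return (a + b) & MASK

def random_test(trials: int = 10_000, seed: int = 1) -> int:
    """Run corner cases plus random cases; return how many passed."""
    rng = random.Random(seed)
    corners = [(0, 0), (MASK, 1), (MASK, MASK), (1 << (WIDTH - 1), 1 << (WIDTH - 1))]
    cases = corners + [(rng.getrandbits(WIDTH), rng.getrandbits(WIDTH)) for _ in range(trials)]
    for a, b in cases:
        got, want = ripple_carry_add(a, b), reference_add(a, b)
        assert got == want, f"mismatch: {a:#x} + {b:#x} -> {got:#x}, expected {want:#x}"
    return len(cases)

print(random_test(), "cases passed")
```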

Further reading

- Kong and Patterson. "Instruction set design". 1995.

References

1. Ing-Jer Huang and Alvin M. Despain. "Generating instruction sets and microarchitectures from applications".

Microprocessor Components

Basic Components


There are a number of components in a common microprocessor that designers should be familiar with before attempting a design. For an overview of these components, see Digital Circuits.

Registers

A register is a storage element typically composed of an array of smaller, 1-bit storage elements called flip-flops. The number of bits that the register is composed of equals the number of bits that the register can store at any given time. For example, a 1-bit register can store 1 bit, and a 32-bit register can hold 32 bits, etc. Registers can be any length.

A register has two inputs: a data input and a clock input. The clock input is typically called the "enable". When the enable signal is high, the register stores the value on the data input. When the enable signal is low, the register value stays the same.

Register File

A register file is a collection of registers, typically all of the same length. A register file takes three inputs: an index address value, a data value, and an enable signal. A decoder uses the address to pass the data value from the register file input to the particular register with that address. We talk more about register files in a later section of this book, Register File.
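The register and register-file behavior described above can be modeled in a few lines of Python. This is a behavioral sketch, not a gate-level design: the class name, the 8-register by 16-bit size, and the method names are assumptions for illustration. A write takes effect only when the enable input is high, and the address selects which register receives the data.

```python
class RegisterFile:
    """Behavioral model of a register file with one write port (hypothetical sizes)."""

    def __init__(self, count: int = 8, width: int = 16):
        self.width = width
        self.regs = [0] * count

    def clock(self, address: int, data: int, enable: bool) -> None:
        """Model one clock edge: store data into regs[address] only if enabled."""
        if enable:
            # The address decoder selects exactly one register to receive the data,
            # truncated to the register width.
            self.regs[address] = data & ((1 << self.width) - 1)
        # When enable is low, every register keeps its current value.

    def read(self, address: int) -> int:
        return self.regs[address]

rf = RegisterFile()
rf.clock(address=3, data=0x1234, enable=True)
rf.clock(address=3, data=0xFFFF, enable=False)  # ignored: enable is low
print(hex(rf.read(3)))  # 0x1234
```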

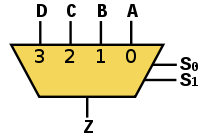

Multiplexers

A multiplexer is an input selector. A multiplexer has an output, a control input, and several data inputs. For ease, we number multiplexer inputs from zero, starting at the top. If the control signal is "0", the 0th input is passed to the output. If the control signal is "3", the 3rd input is passed to the output.

A multiplexer with $N$ control signal bits can support $2^N$ inputs. For example, a multiplexer with 3 control signals can support $2^3 = 8$ inputs.

Multiplexers are typically abbreviated as "MUX", and will be abbreviated as such throughout the rest of this book.
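A behavioral Python sketch of a MUX, with the select value treated as an integer index, follows. The function names are hypothetical, and a hardware MUX would of course be built from gates rather than list indexing.

```python
def mux(inputs, select):
    """Return the input chosen by the select value (a 2**N-input MUX)."""
    n = len(inputs)
    if n == 0 or n & (n - 1):
        raise ValueError("a MUX with N select bits has exactly 2**N inputs")
    if not 0 <= select < n:
        raise ValueError("select value out of range")
    return inputs[select]

def select_bits(num_inputs):
    """Number of control bits N needed for a 2**N-input MUX."""
    return (num_inputs - 1).bit_length()

print(mux(["a", "b", "c", "d"], 3))  # d
print(select_bits(8))                # 3
```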

(Figures: a 4-input multiplexer with 2 control signal wires; an 8-input multiplexer with 3 control signal wires; a 16-input multiplexer with 4 control signal wires.)

A processor also typically contains decoders. A decoder performs the inverse function of an encoder: it has several inputs, and depending on the input value exactly one of its output signals goes high. A decoder with $n$ inputs has $2^n$ outputs, so a 2-input decoder has 4 output signals, O0 through O3:

| i1 | i0 | O0 | O1 | O2 | O3 |
|---|---|---|---|---|---|
| 0 | 0 | 1 | 0 | 0 | 0 |
| 0 | 1 | 0 | 1 | 0 | 0 |
| 1 | 0 | 0 | 0 | 1 | 0 |
| 1 | 1 | 0 | 0 | 0 | 1 |

For example, if the input i (with i1 as the more significant bit) has the value 00, output O0 goes high and the other three outputs, O1 to O3, stay low. Likewise, if i has the value 2 (binary 10), output O2 goes high and the other three outputs stay low.
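A decoder's behavior amounts to producing a "one-hot" output list. In the minimal Python sketch below (the function name is hypothetical), index k of the returned list is output Ok.

```python
def decoder(value: int, num_inputs: int) -> list:
    """n-to-2**n decoder: returns one-hot outputs [O0, O1, ...]."""
    if not 0 <= value < (1 << num_inputs):
        raise ValueError("input value does not fit in num_inputs bits")
    return [1 if k == value else 0 for k in range(1 << num_inputs)]

print(decoder(0b00, 2))  # [1, 0, 0, 0]
print(decoder(0b10, 2))  # [0, 0, 1, 0]
```

In a register file, each decoder output is typically ANDed with the write-enable signal, so that only the addressed register loads the incoming data.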

Adder

Program Counter

The Program Counter (PC) is a register that contains the address of the current instruction. Each cycle, the instruction at that address is read into the instruction decoder, and the program counter is updated to point to the next instruction. For RISC computers, updating the PC register is as simple as adding the machine word length (in bytes) to the PC. In a CISC machine, however, the length of the current instruction needs to be calculated, and that length value needs to be added to the PC.

Updating the PC

The PC can be updated by making the enable signal high. After each instruction cycle the PC needs to be updated to point to the next instruction in memory. It is important to know how the memory is arranged before constructing your PC update circuit.

Harvard-based systems tend to store one machine word per memory location. This means that every cycle the PC needs to be incremented by 1. Computers that share data and instruction memory together typically are byte addressable, which is to say that each byte has its own address, as opposed to each machine word having its own address. In these situations, the PC needs to be incremented by the number of bytes in the machine word.

In this image, the letter M is being used as the amount by which to update the PC each cycle. This might be a variable in the case of a CISC machine.

Example: MIPS

The MIPS architecture uses a byte-addressable instruction memory unit. MIPS is a RISC computer, and that means that all the instructions are the same length: 32-bits. Every cycle, therefore, the PC needs to be incremented by 4 (32 bits = 4 bytes).
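The update rule amounts to "next PC = PC + M". The Python sketch below assumes a 32-bit PC that wraps around on overflow; the function name `next_pc` is hypothetical.

```python
def next_pc(pc: int, m: int, address_bits: int = 32) -> int:
    """PC update: add the increment M, wrapping at the address width."""
    return (pc + m) & ((1 << address_bits) - 1)

# Word-addressed Harvard memory: one instruction per address, so M = 1.
print(next_pc(0x10, 1))             # 17
# Byte-addressed memory with 32-bit instructions, as in MIPS: M = 4.
print(hex(next_pc(0x400000, 4)))    # 0x400004
```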

Example: Intel IA32

The Intel IA32 (better known by some as "x86") is a CISC architecture, which means that each instruction can be a different length. The Intel memory is byte-addressable. Each cycle the instruction decoder needs to determine the length of the instruction, in bytes, and it needs to output that value to the PC. The PC unit increments itself by the value received from the instruction decoder.
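Real IA32 length decoding depends on prefixes, the opcode, and optional addressing, displacement, and immediate bytes, which is far too involved for a short example. The sketch below therefore uses a hypothetical toy encoding in which the low two bits of an instruction's first byte give its length minus one (1 to 4 bytes). It only illustrates the idea of the decoder reporting a length that the PC then adds.

```python
def instruction_length(first_byte: int) -> int:
    """Toy decoder (hypothetical encoding): low two bits hold (length - 1)."""
    return (first_byte & 0b11) + 1

def walk_program(memory: bytes, start: int = 0) -> list:
    """Return the start address of each instruction, advancing the PC by its length."""
    pc, addresses = start, []
    while pc < len(memory):
        addresses.append(pc)
        pc += instruction_length(memory[pc])  # PC += length reported by the decoder
    return addresses

# Three toy instructions of lengths 1, 3 and 2 bytes.
program = bytes([0x00, 0x02, 0xAA, 0xBB, 0x01, 0xCC])
print(walk_program(program))  # [0, 1, 4]
```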

Branching

Branching occurs at one of a set of special instructions known collectively as "branch" or "jump" instructions. In a branch or a jump, control is moved to a different instruction at a different location in instruction memory.

During a branch, a new address for the PC is loaded, typically from the instruction or from a register. This new value is loaded into the PC, and future instructions are loaded from that location.

Non-Offset Branching

A non-offset branch, frequently referred to as a "jump", is a branch where the previous PC value is discarded and a new PC value is loaded from an external source.

In this image, the PC value is either loaded with an updated version of itself, or else it is loaded with a new Branch Address. For simplification we do not show the control signals to the MUX.
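The MUX in front of the PC can be written as a single selection. In this Python sketch, `take_branch` is a hypothetical name for the MUX control signal that the figure omits, and `m` is the PC increment M.

```python
def next_pc_jump(pc: int, m: int, branch_address: int, take_branch: bool) -> int:
    """Two-input MUX in front of the PC: PC + M, or an absolute branch address."""
    return branch_address if take_branch else pc + m

print(hex(next_pc_jump(0x100, 4, 0x2000, take_branch=False)))  # 0x104
print(hex(next_pc_jump(0x100, 4, 0x2000, take_branch=True)))   # 0x2000
```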

Offset Branching

An offset branch is a branch where a value is added to (or subtracted from) the current PC value to produce the new value. This is typically used in systems where the PC is wider than a register or an immediate field, so it is not possible to load a complete address into the PC. It is also commonly used to support relocatable binaries, which may be loaded at an arbitrary base address.

In this image there is a second ALU. Notice that we could simplify this circuit and remove the second ALU if we use the configuration below:

These are just two possible configurations for this circuit.
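Because the offset can be negative, it is normally stored as a two's-complement field and sign-extended before it is added to the PC. The sketch below assumes a 16-bit offset added directly to the current PC of a 32-bit machine. Many real ISAs instead scale the offset or add it to the address of the next instruction (MIPS does both), and the function names are hypothetical.

```python
def sign_extend(value: int, bits: int) -> int:
    """Interpret the low `bits` bits of value as a two's-complement number."""
    value &= (1 << bits) - 1
    sign_bit = 1 << (bits - 1)
    return (value ^ sign_bit) - sign_bit

def next_pc_offset(pc: int, offset_field: int, offset_bits: int = 16, address_bits: int = 32) -> int:
    """Offset branch: PC + sign-extended offset, wrapped to the address width."""
    return (pc + sign_extend(offset_field, offset_bits)) & ((1 << address_bits) - 1)

print(hex(next_pc_offset(0x1000, 0x0010)))  # 0x1010 (forward 16 bytes)
print(hex(next_pc_offset(0x1000, 0xFFF0)))  # 0xff0  (backward 16 bytes)
```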

Offset and Non-Offset Branching

Many systems have capabilities to use both offset and non-offset branching. Some systems may differentiate between the two as "near jump" and "far jump" respectively, although this terminology is archaic.

Instruction Decoder

The Instruction Decoder reads the next instruction in from memory, and sends the component pieces of that instruction to the necessary destinations.

For each machine-language instruction, the control unit produces the sequence of pulses on each control signal line required to implement that instruction (and to fetch the next instruction).

If you are lucky, when you design a processor you will find that many of those control signals can be "directly decoded" from the instruction register. For example, sometimes a few output bits from the instruction register IR can be directly wired to the "which function" inputs of the ALU. Even if those bits mean something completely unrelated in non-ALU instructions, it's OK if the ALU performs, say, a bogus SUBTRACT, while the rest of the processor is executing a STORE instruction.
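The sketch below illustrates direct decoding for a hypothetical 16-bit ISA whose instruction-register bits [2:0] are wired straight to the ALU's function-select inputs. The encoding and the function table are assumptions, not any real processor's. The ALU computes a result on every cycle; whether that result is used is left to the control unit.

```python
MASK16 = 0xFFFF

# Hypothetical encoding: IR bits [2:0] drive the ALU "which function" inputs directly.
ALU_FUNCTIONS = {
    0b000: lambda a, b: (a + b) & MASK16,   # ADD
    0b001: lambda a, b: (a - b) & MASK16,   # SUBTRACT
    0b010: lambda a, b: a & b,              # AND
    0b011: lambda a, b: a | b,              # OR
    0b100: lambda a, b: a ^ b,              # XOR
    0b101: lambda a, b: a,                  # pass A
    0b110: lambda a, b: b,                  # pass B
    0b111: lambda a, b: (~a) & MASK16,      # NOT A
}

def alu(ir: int, a: int, b: int) -> int:
    """The ALU always computes something, selected straight from IR bits [2:0]."""
    return ALU_FUNCTIONS[ir & 0b111](a, b)

# During an ALU instruction the result is written back; during a STORE the same
# IR bits still drive the ALU, and the control unit simply ignores its output.
print(hex(alu(0b0000_0000_0000_0001, 0x0005, 0x0007)))  # 0xfffe (5 - 7 wraps)
```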

The remaining control signals that cannot be decoded from the instruction register (if you are unlucky, all of the control signals) are generated by the control unit, which is implemented as a Moore machine or a Mealy machine. There are many different ways to implement the control unit.
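As one possible shape for such a control unit, here is a Moore-machine sketch in Python: the control-signal outputs depend only on the current state, and the opcode only influences which state comes next. The states, the opcodes, and the signal names (`ir_load`, `pc_inc`, `reg_write`, `mem_write`) are all hypothetical.

```python
from enum import Enum, auto

class State(Enum):
    FETCH = auto()
    DECODE = auto()
    EXECUTE_ALU = auto()
    EXECUTE_STORE = auto()

# Moore outputs: each state drives a fixed set of control signals.
OUTPUTS = {
    State.FETCH:         {"ir_load": 1, "pc_inc": 1, "reg_write": 0, "mem_write": 0},
    State.DECODE:        {"ir_load": 0, "pc_inc": 0, "reg_write": 0, "mem_write": 0},
    State.EXECUTE_ALU:   {"ir_load": 0, "pc_inc": 0, "reg_write": 1, "mem_write": 0},
    State.EXECUTE_STORE: {"ir_load": 0, "pc_inc": 0, "reg_write": 0, "mem_write": 1},
}

def next_state(state: State, opcode: str) -> State:
    """Transition function: the opcode only affects which state comes next."""
    if state is State.FETCH:
        return State.DECODE
    if state is State.DECODE:
        return State.EXECUTE_STORE if opcode == "STORE" else State.EXECUTE_ALU
    return State.FETCH  # both execute states return to fetch

def run(opcodes):
    """Return the (state name, outputs) sequence for a list of instructions."""
    trace, state = [], State.FETCH
    for op in opcodes:
        for _ in range(3):  # each instruction passes through fetch, decode, execute
            trace.append((state.name, OUTPUTS[state]))
            state = next_state(state, op)
    return trace

for name, signals in run(["ADD", "STORE"]):
    print(name, signals)
```

A Mealy machine would instead let the outputs depend on the opcode as well as the state, which can reduce the number of states at the cost of outputs that change as soon as the inputs change.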